Change is the New Craft

Why the Skill That Built the Present Won't Guarantee the Future

For the thirty years I have been in this game, the engine of differentiation has been knowing how to build solutions and services based on the advantages of new technology. The engineering leaders who rose to the top of technology organisations got there because they understood systems, architectures, languages, and frameworks better than anyone around them. Technical depth was the differentiator. Code was the craft. Knowing how to build was how you earned your seat at the table.

That era is ending, and the data from 2025 makes the case with sobering clarity: AI is not augmenting the ability to build; it is commoditising it. The capability that software engineers believed set them apart, the capacity to turn intent into working systems, is being absorbed by the technology itself, at a pace that outstrips any organisation’s ability to plan for it.

This does not mean engineering leadership is becoming less important. It means the nature of what makes an engineering leader valuable is shifting, from knowledge of how to build to knowledge of how to enable continuous change in an environment that moves faster than organisational structure can follow.

So, this is not an argument that code no longer matters, or that technical depth is irrelevant. It is an argument that technical depth alone is no longer sufficient to lead, because the constraint on enterprise value creation has moved from “can we build it?” to “can we change fast enough to know what to build with what’s next?” The evidence is now overwhelming.

1. The Technology Clock: Building Is Getting Faster Than Deciding

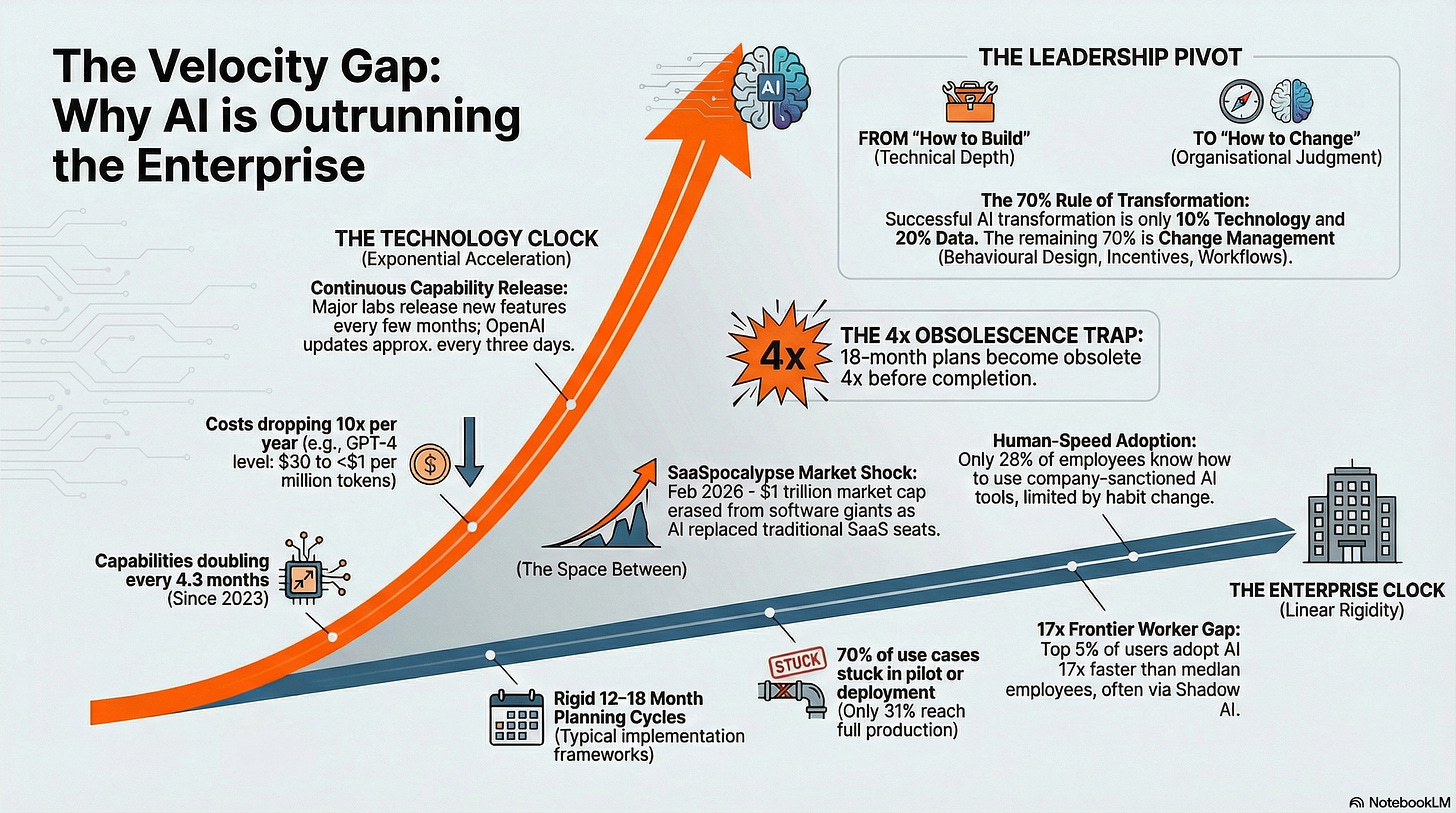

In March 2025, the AI evaluation organisation METR published research measuring the length of software engineering tasks that frontier AI models could complete autonomously. They found that this capability had been doubling approximately every seven months since 2019, on a remarkably consistent exponential curve. In January 2026, METR updated its model and found the trend had accelerated: the post-2023 doubling time had shortened to approximately 4.3 months, roughly 20% faster than its earlier estimate for that post-2023 period and well under the seven-month long-run figure.

To make this concrete: when METR assessed Claude 3.7 Sonnet in early 2025, the model could reliably complete tasks that took human professionals a few minutes. By late 2025, Claude Opus 4.5 could complete tasks that took humans nearly three hours. The capability frontier did not inch forward; it leapt.

This is not confined to one lab’s models. 2025 saw GPT-5 launch in August with a context window expansion from 128,000 to 272,000 tokens, followed by GPT-5.1 in November. Google released Gemini 3.0. Anthropic shipped successive Claude 4 variants. xAI launched Grok 4 in July and Grok 4.1 in November. OpenAI released Codex-Max. As KDnuggets noted in their year-end review, new models are no longer rolling out annually or even biannually; they arrive every few months, and each release changes what is technically and economically feasible. A February 2026 analysis from Resultsense observed that OpenAI alone releases a new feature or capability roughly every three days.

The cost curve is equally dramatic. LLM Stats data from early 2026 shows that the cost of achieving GPT-4-level performance has fallen by roughly tenfold per year. Capabilities that cost $30 per million tokens in early 2023 now cost under $1. Any business case older than twelve months that includes cost assumptions about AI compute is not slightly wrong; it is wrong by an order of magnitude.
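As a rough check on that arithmetic, the sketch below projects a per-million-token price under a constant tenfold annual decline. The $30 starting price and the tenfold rate come from the figures above; the function name and the assumption of a smooth, constant decline are illustrative simplifications, not something taken from the LLM Stats data.

```python
def projected_cost(start_cost: float, annual_decline_factor: float, years: float) -> float:
    """Price after `years`, assuming it falls by a constant factor every year."""
    return start_cost / (annual_decline_factor ** years)


# $30 per million tokens in early 2023, falling roughly 10x per year (figures from the text).
for years in (1, 2, 3):
    cost = projected_cost(30.0, 10.0, years)
    print(f"after {years} year(s): ${cost:.2f} per million tokens")
```

Under these assumptions the price drops below $1 within two years, and a cost assumption that is twelve months stale is off by the full annual factor of ten.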

Menlo Ventures’ third annual State of Generative AI in the Enterprise report captured where this acceleration lands hardest: coding has become AI’s breakout use case. Enterprise spending on AI coding tools exploded from $550 million to $4 billion in 2025. Accel’s 2025 Globalscape report tracked the adoption curve in stark terms: the proportion of developers using AI coding assistants went from 36% in 2023 to 63% in 2024 to 90% in 2025. In top-quartile organisations, teams report 15% or greater velocity gains across the software development lifecycle. The act of building, the thing that defined the engineering profession, is being accelerated by a factor that compounds with every model release. Note that these productivity gains often do not translate into cost reduction: enterprises bank the improvement and simply ship more.

AlixPartners quantified the consequence precisely: while AI-accelerated coding tools deliver 20 to 30% productivity gains, the constraint has already shifted from engineering capacity to strategic planning.

The organisation cannot decide what to build as fast as AI can build it. The bottleneck is no longer the code; it is the clarity.

2. The Enterprise Clock: Organisations Still Change at Human Speed

Set against this, the enterprise planning and adoption cycle has, in most cases, not accelerated at all. Enterprise AI roadmap guides published in late 2025 and early 2026 continue to recommend 12-to-18-month timelines for moving a single use case from proof of concept to production deployment. A widely cited implementation framework puts first production deployment at 20 to 24 weeks, and that is the optimistic case: it assumes dedicated resources and executive support.

The ISG State of Enterprise AI Adoption Report 2025, drawing on 1,200 use cases, found that only 31% had reached full production. That figure was celebrated as a doubling from 2024, but it means that after more than two years of intense enterprise AI investment, seven out of ten use cases remain stuck somewhere between pilot and deployment. AI copilots, the highest-volume use case for IT departments, had only one-third in production.

Deloitte’s State of AI in the Enterprise 2026, surveying 3,235 leaders, found that while 66% of organisations reported productivity and efficiency gains, only 20% had achieved actual revenue growth from AI; 74% described revenue growth as an aspiration for the future. The gap between investment and realised value remains vast.

WalkMe’s State of Digital Adoption 2025 report quantified a specific bottleneck: only 28% of employees know how to use their company’s AI applications, despite enterprises running an average of 200 AI tools.

The constraint is not the technology; it is the organisation’s capacity to absorb it.

A December 2025 Bloomberg survey of 604 senior executives at companies with more than 5,000 employees found that 97% already have an AI implementation strategy in place. Every large enterprise has a plan. Almost none of them can execute it at the speed the technology demands, because execution requires people to change how they work, and people change at human speed, not at model-release speed. Gartner’s 2026 CIO and Technology Executive Survey confirmed the structural tension: 94% of CIOs expect major changes to their plans and outcomes within the next 24 months, yet only 48% of digital initiatives meet or exceed business targets.

3. The Gap: Why Code Knowledge No Longer Closes It

Here is where the two clocks collide. If your planning cycle takes 12 months and the technology’s capability frontier shifts every four months, the plan becomes obsolete about three times before it completes. This is not a failure of planning quality; it is arithmetic. You cannot define a “future state” in a domain where the future state changes faster than the definition process.
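The mismatch can be made concrete with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and it assumes the frontier moves at a steady interval and that the METR doubling times quoted in section 1 hold constant across the whole planning cycle.

```python
def frontier_shifts(plan_months: float, shift_interval_months: float) -> float:
    """How many times the capability frontier moves during one planning cycle."""
    return plan_months / shift_interval_months


def capability_multiple(plan_months: float, doubling_months: float) -> float:
    """Growth in autonomous task length over the cycle, assuming steady doubling."""
    return 2 ** (plan_months / doubling_months)


# A 12-month plan against a frontier that shifts every four months.
print(frontier_shifts(12, 4))

# The same 12 months under the two doubling times quoted in section 1.
print(round(capability_multiple(12, 7), 1))
print(round(capability_multiple(12, 4.3), 1))
```

On these assumptions, a twelve-month plan spans three frontier shifts, and the task length a model can handle grows roughly 3.3-fold at a seven-month doubling time or roughly 6.9-fold at 4.3 months before the plan completes.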

And this is where the identity of engineering leadership collides with the new reality. For three decades, the engineering leader’s response to a technology shift has been to learn the technology: understand the architecture, master the tooling, build the proof of concept, and lead the team through the technical transition. The assumption was that technical knowledge was the scarce resource, and the leader who had it could guide the organisation forward.

But when the technology changes every quarter, technical mastery of any specific capability is a depreciating asset. The leader who spent six months becoming expert in a particular model’s capabilities, prompt engineering patterns, or agent architecture finds that expertise partially obsolete with the next release. The knowledge that built their authority has a shorter shelf life than the planning cycle that depends on it.

The evidence from 2025 confirms this was already happening. Technology Magazine reported in December 2025 that the year’s push to build comprehensive AI agents “hasn’t worked,” predicting a strategic reset in 2026 toward domain-specific, workflow-embedded AI rather than the all-encompassing platforms that multi-year strategies had specified. This is not a win. It is an admission of organisational leadership failure.

A January 2026 post-mortem from six enterprise AI leaders, published by AI Data Insider, identified the core failure pattern: organisations invested heavily in AI capability (models, infrastructure, tooling) while underinvesting in behaviour design (incentives, workflows, manager reinforcement). One contributor quantified the imbalance precisely: AI transformation is only 10% technology, 20% data, and 70% change management, yet very few enterprises have industrialised delivery at scale. The technology component that consumed most of the planning, and most of the engineering leadership’s attention, was the part that changed fastest and mattered least.

4. The Inversion: What the Frontier Workers Reveal

OpenAI’s December 2025 State of Enterprise AI report, drawing on data from over one million business customers, revealed the consequences of this mismatch. Weekly messages in ChatGPT Enterprise increased roughly eightfold over the year. Usage of structured workflows increased nineteenfold. But the distribution was radically uneven: “frontier workers,” the top 5% by usage, sent seventeen times more coding messages than the median employee.

Consider what that 17x gap represents. It is not a gap in technical skill. The tools are accessible to everyone with an enterprise licence. It is a gap in willingness to change how work is done: a gap in the habits, assumptions, and daily practices that determine whether someone integrates a new capability into their workflow or leaves it untouched. The frontier workers are not better engineers; they are faster adapters.

Microsoft’s Work Trend Index had already identified this pattern in 2024: 75% of knowledge workers were using AI at work, but 78% were bringing their own AI tools rather than using company-sanctioned ones. By 2025, this “shadow AI” phenomenon had become, in the words of one enterprise governance analysis, undeniable. Employees moved faster than strategies could approve. The people creating value were not waiting for the roadmap; they were changing their own practice, continuously, without permission.

The Menlo Ventures data tells the same story from the spending side. Departmental AI spending hit $7.3 billion in 2025, up 4.1x year-over-year, with coding tools alone accounting for $4 billion. Enterprise generative AI spending surged from $1.7 billion in 2023 to $37 billion in 2025. An EY survey found that 88% of mid-to-large organisations now spend more than 5% of their IT budget on AI, with many targeting 25%.

5. The Build-vs-Buy Reversal: When Everyone Can Build, Everything Changes

Buried inside the Menlo Ventures data was a finding that most commentators initially read as good news for engineering teams: 76% of AI use cases are now purchased rather than built internally, a dramatic reversal from the near-even split of 2024. Enterprises tried to build their own AI in 2024; most of it failed. By 2025, they were buying.

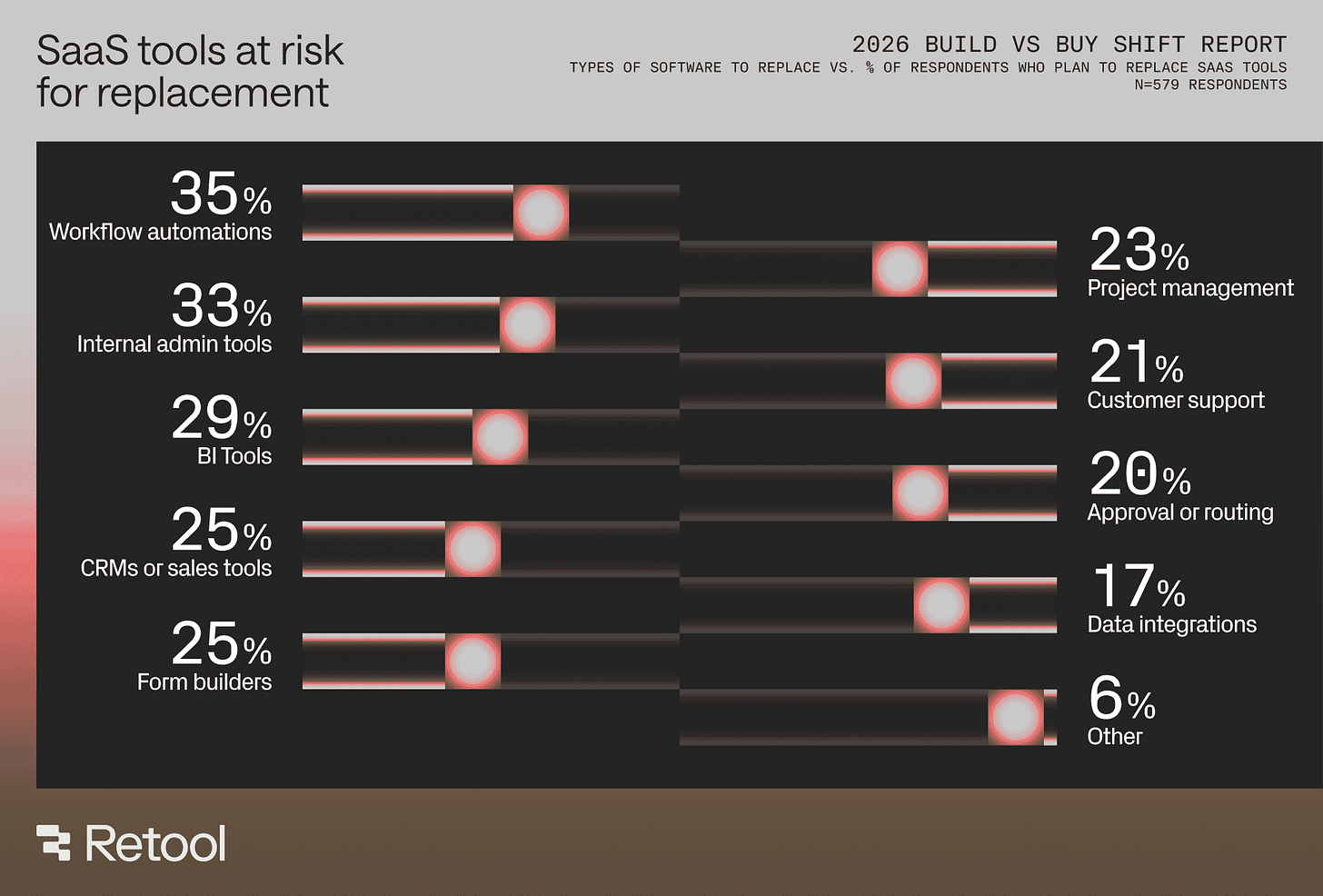

But by early 2026, the pendulum had already swung again, and this time in a direction that transforms the engineering leader’s role entirely. Retool’s 2026 Build vs. Buy report, surveying 817 builders across roles from engineering to marketing to finance, found that 35% of organisations have already replaced functionality from at least one SaaS tool with a custom build, and 78% expect to build more of their own tools in 2026. More striking still: 60% reported building something outside of IT oversight in the past year, and 51% of builders have already shipped production software using AI.

This is not the old “build vs. buy” calculus. The old version required months of engineering effort, dedicated teams, and substantial budgets; it was a decision that naturally centralised in engineering leadership. The new version happens in days, often by people who are not software engineers, using AI-assisted development tools that collapse the distance between intent and working system. Retool’s CEO put it directly to Newsweek: SaaS products force you to work their way; now that vibe coding has gone mainstream, businesses that can custom-build their value drivers will have a competitive edge.

The financial markets noticed. In early February 2026, what traders at Jefferies christened the “SaaSpocalypse” erased approximately $1 trillion in enterprise software market capitalisation. Salesforce fell nearly 29% year-to-date. Adobe lost 25%. ServiceNow, despite posting strong earnings, shed $115 billion in market value. The immediate catalyst was Anthropic’s launch of Claude Cowork, but Bain & Company’s analysis identified the structural cause: AI poses a fundamental threat to seat-based models, and vendors are already reporting slower growth in seat count as customer companies become more efficient.

The same week, IBM’s share price fell 13% in a single trading session, its steepest one-day decline in more than two decades, after Anthropic announced that Claude could materially streamline COBOL code modernisation. Nearly $30 billion in market capitalisation was erased in hours. The announcement struck at something deeper than one company’s revenue: COBOL systems still process trillions of dollars in daily financial transactions globally, and the scarcity of engineers who understood them had created a durable economic moat for decades. When an AI model credibly claimed it could ingest, interpret, and refactor those codebases in weeks rather than years, the market did not wait for validation.

Legacy modernisation is where these two forces converge. AI coding tools are making it feasible for enterprises to refactor systems that were previously untouchable: not just COBOL, but ageing Java monoliths, complex .NET estates, and decades of accumulated technical debt. Gartner projects that by 2028, 90% of enterprise software engineers will use AI code assistants across the software development lifecycle, including autonomous rewriting of legacy code. Constellation Research predicts that in 2026, enterprises will realise they need their own forward-deployed engineers to work through data, process, architecture, and AI automation: engineers who know the business and industry better than borrowed consultants ever could.

For the engineering leader, this convergence is disorienting. On one side, business users are building their own tools without asking permission, replacing SaaS subscriptions that the technology function selected and governed. On the other, AI is making it possible to modernise the legacy systems that have long been the engineering team’s exclusive domain.

The two things that anchored the engineering leader’s authority, control over what gets built and exclusive knowledge of what exists, are both being redistributed.

The leaders who navigate this successfully will not be the ones who try to reassert control. They will be the ones who recognise that when building is no longer the bottleneck, the scarce resource becomes something else entirely: the organisational judgment to know what is worth building, the governance to ensure it is built safely, and the capacity to help people absorb the change when yesterday’s system is replaced by tomorrow’s.

6. The New Skill: Enabling Change, Not Directing Builds

None of this means engineering leadership is becoming less important. It means the job is changing, and the change is profound.

The ISG report’s own recommendation was telling: enterprises should experiment rapidly, codify lessons through adoption, and harden them into scalable, compliant processes rather than pursuing multi-year data transformation programmes promising to “get our data right” before tackling AI. That is not a technology recommendation. It is an organisational design recommendation.

It requires leaders who understand how to create the conditions for continuous adaptation, not leaders who know the right answer and can specify it in advance.

The Sweep post-mortem on enterprise AI in 2025 identified the same shift from a different angle: the teams that succeeded were those who understood their systems well enough to let AI operate safely inside them. The constraint was not model capability; it was organisational legibility. Could you explain your own system clearly enough for an AI agent to work within it? That question is not about code; it is about how well the organisation understands its own practices, rules, and tacit assumptions.

The organisations pulling ahead are not the ones with the best technical architectures. They are the ones that have accepted that the roadmap is a living hypothesis, not a contract with the future. They treat every quarter’s plan as a probe into an environment that will have changed by the time results arrive. They invest in the organisation’s capacity to absorb continuous change rather than in the specification of a destination that will not exist when they arrive. This is, in a nutshell, the entire reason behind the Organisational Prompts series you are currently reading.

The engineering leader who thrives in this environment is not the one who knows the most about the current model’s capabilities. It is the one who can help a team of professionals navigate the disorientation of having their core skill automated, find new sources of value in judgment, specification, and domain understanding, and do this not once as a transformation event but continuously as a way of working. The skill that matters is not building; it is enabling others to keep changing how they build, faster than they have ever changed before.

Some enterprise AI strategies are failing not because they are badly written. They are failing because they target the wrong CRAFT entirely. Code was the differentiator for a generation. Change is the differentiator now.

What Engineering Leaders Should Do Next

If you lead an engineering or technology function, the most important question you can ask yourself today is not “what should we build with AI?” It is “how fast can my organisation absorb a change to how we build?”

Start by being honest about the answer. Look at your last three technology adoption initiatives, not the AI-specific ones, but any significant change to tooling, practice, or workflow. How long did each take from decision to embedded daily practice? Not to pilot, not to announcement, but to the point where the new way of working was the default and the old way had stopped. That duration is your organisation’s metabolic rate for change. If it is measured in years, you have a structural problem that no AI strategy can solve, because the technology will have changed multiple times before your people finish absorbing the last shift.
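One way to put a number on that metabolic rate is sketched below. Everything in it is hypothetical: the initiative names and dates are made-up placeholders, the median is one reasonable summary among several, and the four-month frontier interval is the illustrative figure used in section 3.

```python
from datetime import date
from statistics import median

DAYS_PER_MONTH = 30.44  # average month length; an approximation


def months_between(decided: date, embedded: date) -> float:
    """Months from the decision to the point the new practice became the default."""
    return (embedded - decided).days / DAYS_PER_MONTH


def metabolic_rate(initiatives) -> float:
    """Median months from decision to embedded daily practice."""
    return median(months_between(d, e) for _, d, e in initiatives)


# Made-up example data: (initiative, decision date, date it became the default).
past_initiatives = [
    ("CI/CD platform migration", date(2021, 2, 1), date(2022, 8, 1)),
    ("Cloud cost tagging policy", date(2022, 5, 1), date(2023, 3, 1)),
    ("Code review tooling change", date(2023, 1, 15), date(2024, 4, 15)),
]

rate = metabolic_rate(past_initiatives)
shifts_missed = rate / 4  # frontier assumed to move every four months
print(f"median time to embed a change: {rate:.1f} months")
print(f"frontier shifts during one absorption cycle: {shifts_missed:.1f}")
```

The point of the exercise is the ratio in the last line, not the precision of the dates: if it is well above one, the technology moves several times while a single change is still being absorbed.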

Then look at where the actual adoption energy is in your organisation. The OpenAI data shows a 17x gap between frontier and median workers. You almost certainly have a similar distribution. Find your frontier workers. They are not waiting for the strategy; they are already changing how they work. Your job is not to direct them; it is to learn from them, make their adaptations visible, and create the conditions for others to follow at their own pace without waiting for a plan that will be obsolete before it is approved.

Look at the Retool data: 60% of builders in their survey built something outside IT oversight last year. That is almost certainly happening in your organisation too. The instinct will be to reassert governance. Temper that, at least initially. First understand what they built and why. The fact that business teams are building their own tools is not a failure of governance; it is a signal that the organisation’s need for adaptation is outrunning the engineering function’s capacity to respond. If you close that gap by slowing down the business, you have solved the wrong problem.

Finally, redefine what your leadership team is for. If your engineering leadership meetings are consumed by architectural decisions, tooling evaluations, and capability assessments, you are optimising the part of the problem that AI is solving for you. The scarce resource is no longer technical judgment about how to build. It is organisational judgment about how to help people continuously change what they do, what they value, and how they see their own expertise, in a world where the technology beneath them shifts every few months.

The leaders who will matter most in the next five years are not the ones who understand the technology best. They are the ones who understand how to help an organisation keep changing when the change never stops.

Sources

ISG, State of Enterprise AI Adoption Report 2025 (September 2025)

OpenAI, The State of Enterprise AI (December 2025)

Deloitte, State of AI in the Enterprise 2026 (2025 survey of 3,235 leaders)

Menlo Ventures, 2025: The State of Generative AI in the Enterprise (December 2025)

METR, Measuring AI Ability to Complete Long Tasks (March 2025; updated January 2026 as Time Horizon 1.1)

Epoch AI, METR Time Horizons (benchmark tracker for METR task completion data)

WalkMe, State of Digital Adoption (SODA) 2025 (2025)

Accel, 2025 Globalscape: Race for Compute (November 2025)

Retool, The Build vs. Buy Shift: How Vibe Coding and Shadow IT Have Reshaped Enterprise Software (February 2026; survey of 817 builders)

Newsweek, Enterprises Are Replacing SaaS Faster Than You Think (February 2026)

CNBC, AI Fears Pummel Software Stocks (February 2026)

Bain & Company, Why SaaS Stocks Have Dropped (February 2026)

The Register, Rise of AI Means Companies Could Pass on SaaS (February 2026)

IBTimes India, When AI Learns Legacy: How Code That Rewrites Itself Is Changing IT Economics (February 2026)

Devox Software, The 2026 Legacy Modernization Report (December 2025; references Gartner and McKinsey data)

Constellation Research, Enterprise Technology 2026: 15 AI, SaaS, Data, Business Trends to Watch (January 2026)

Gartner, The CIO Agenda 2026 (November 2025; survey of 3,100 CIOs)

Resultsense, OpenAI Enterprise AI 2025: State of Adoption (February 2026)

Technology Magazine, Why 2026 Will Mark a Reset for Enterprise AI Strategy (December 2025)

EM360 Tech, Five AI Shifts Shaping Enterprise Strategy in 2026 (January 2026)

AI Data Insider, Six AI Industry Leaders on What Went Wrong in 2025 (January 2026)

ERP Today, Enterprise Software Faces AI-Driven Disruption (January 2026)

MILL5, Disruption Isn’t Coming: What Enterprise Leaders Said About AI in 2025 (February 2026; references Bloomberg survey of 604 executives)

LLM Stats, AI Trends 2026 (February 2026)

KDnuggets, The 10 AI Developments That Defined 2025 (January 2026)

Understanding AI, 17 Predictions for AI in 2026 (December 2025)

Sweep, Why Enterprise AI Stalled in 2025: A Post-Mortem (December 2025)

Disclaimer

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.