Christensen: How 'Good' Decisions Can Destroy Transformation

Why Clayton Christensen’s Theory Explains How Organisations Rationally Destroy Their Own Capacity for Transformation, and What This Means for AI Adoption

Clayton Christensen asks you to consider the possibility that your organisation is failing because its leaders are competent.

Not despite their competence. Because of it. The better they are at listening to customers, investing in higher-margin opportunities, and improving existing products, the more reliably they will miss the thing that eventually replaces them. This is not a metaphor. Christensen documented it happening, with mechanical precision, across disk drives, steel, retail, and education. The pattern is always the same: the incumbent does everything right and loses anyway.

Most management theory assumes that failure comes from bad decisions. Christensen’s contribution is the demonstration that failure can come from good ones. That is a far more uncomfortable idea, and it is the one that matters for AI transformation. Because if failure only came from bad decisions, you could fix it with better analysis, better leadership, or better governance. But if failure comes from the structure of rational decision-making itself, from the processes and values that define what the organisation considers worth doing, then the fix is not better management. It is different management, operating in a different structure, with different criteria for success. And that, as we shall see, is the one thing the existing organisation is least equipped to create.

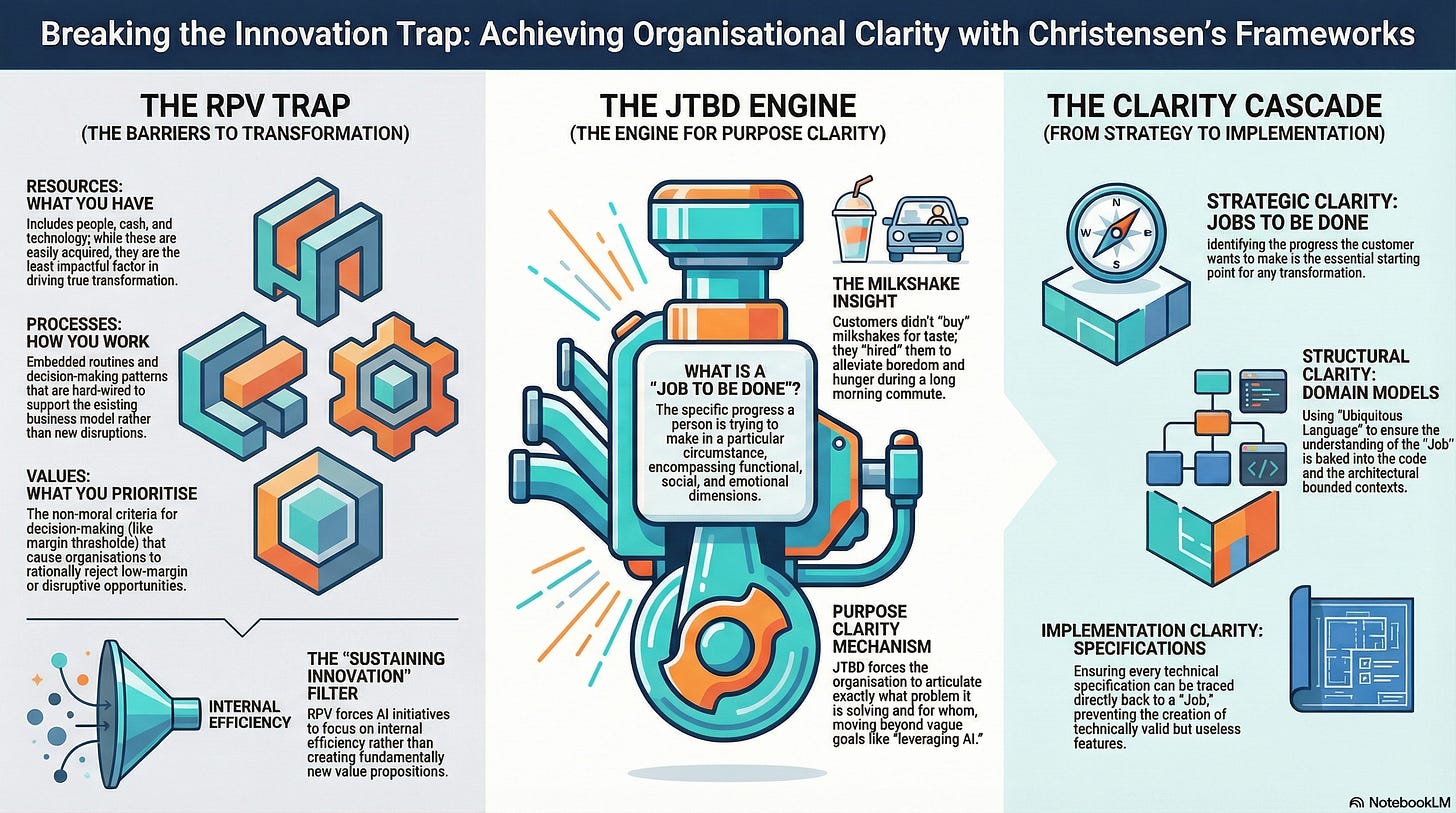

A note on scope. Christensen (1952-2020) published extensively across innovation, strategy, education, healthcare, and personal philosophy. The Innovator’s Dilemma (1997) remains the foundational work. The Innovator’s Solution (2003), co-authored with Michael Raynor, extended the theory into prescriptive territory. Competing Against Luck (2016), co-authored with Taddy Hall, Karen Dillon, and David Duncan, developed the Jobs to Be Done framework. This article focuses on three interlocking ideas: the disruption mechanism, the RPV (Resources, Processes, Values) framework for organisational capability, and Jobs to Be Done as a tool for purpose clarity. It does not attempt a comprehensive survey.

I was lucky enough to be taught by Clay in a Harvard Business School course on Innovation in the early 2000s. It remains the best single teaching experience I have ever had, and I hope this article communicates enough of his insight to justify the time he spent teaching me.

1. The Disruption Mechanism: Why Good Management Causes Failure

Christensen’s central insight is counterintuitive and, once understood, profoundly uncomfortable. Disruptive innovation is not about better technology defeating worse technology. It is about a specific process by which new entrants, typically with fewer resources and initially inferior products, displace established firms that are doing everything right by conventional management standards.

The mechanism operates through a sequence that Christensen documented across industries from disk drives to steel to retail. A new entrant introduces a product that is simpler, cheaper, and often worse on the performance dimensions that mainstream customers value. Because the product is worse on those dimensions, the incumbent rationally ignores it. The new entrant’s product appeals to customers at the low end of the market, or to customers who were previously non-consumers. The incumbent, listening to its best customers and pursuing higher margins, moves upmarket. Meanwhile, the new entrant improves its product along a sustaining trajectory until it meets the needs of the mainstream market. By the time the incumbent recognises the threat, its cost structure, processes, and organisational identity make an adequate response nearly impossible.

The critical word is rationally. The incumbent is not asleep. It is making the decisions that every MBA programme, every board, every management framework would endorse: listen to your customers, invest in higher-margin opportunities, improve your existing products. Christensen’s contribution is the demonstration that this rationality, applied consistently, produces its own destruction.

Weber diagnosed the same mechanism at a deeper level. His means-ends rationality, which asks “given this goal, what is the most efficient way to achieve it?”, is precisely the logic that drives the incumbent upmarket. The question “are we serving our most profitable customers?” is a means-ends question. The question “should we be serving different customers entirely?” is a value-rationality question, and the organisation has no structural mechanism for processing it.

Christensen provides the market-level evidence for what Weber described as a civilisational tendency: the progressive displacement of the question “is this the right goal?” by the question “are we achieving this goal efficiently?”

Argyris would recognise the psychological dimension. The defensive routines he documented (the suppression of information that threatens existing strategies, and the skilled incompetence that prevents leaders from acknowledging what they do not know) are the human-level expression of the innovator’s dilemma. The data showing that a cheap, inferior product might eventually threaten the core business is precisely the kind of information that defensive routines are designed to suppress. Not through conspiracy, but through the ordinary operations of Model I behaviour: maintain control, suppress negative feelings, be rational, win. When “being rational” means pursuing the higher-margin opportunity, the disruptive threat becomes undiscussable.

2. The RPV Framework: Why Organisations Cannot Do What Their Leaders Decide to Do

Christensen’s second major contribution, less well known than disruption theory but arguably more useful for practitioners, is the RPV framework. An organisation’s capabilities and disabilities are defined by three factors: its Resources, its Processes, and its Values.

Resources are what the organisation has: people, technology, cash, brand, relationships, data. Resources are the most visible and most transferable element. You can hire new people, buy new technology, allocate new budget. Most organisational responses to AI begin here: hire data scientists, license AI platforms, establish an AI centre of excellence. Resources are necessary but not sufficient.

Processes are how the organisation converts resources into outcomes: the patterns of interaction, communication, coordination, and decision-making that have evolved to support the core business. Processes include formal procedures (approval workflows, budgeting cycles, release processes) and informal patterns (how decisions actually get made, who talks to whom, what information flows where). Processes are much harder to change than resources because they are embedded in organisational muscle memory. They are, in Pierre Bourdieu’s terms, the institutional habitus: the dispositions, routines, and taken-for-granted practices that reproduce the organisation’s way of working regardless of what the strategy says.

Values are what the organisation prioritises: the criteria by which employees make decisions about resource allocation. Christensen uses “values” in a specific, non-moral sense. An organisation’s values determine what it finds worth doing: which opportunities to pursue, which customers to serve, which margin thresholds to accept. Over time, values optimise around the organisation’s cost structure and business model. A company accustomed to 40% gross margins will systematically deprioritise opportunities that offer 15% margins, regardless of what the strategy deck says about “new markets” or “transformation.”

The RPV framework explains why adding resources (the typical first response) rarely produces transformation. The new AI team arrives with resources: talented people, modern tools. But it operates within existing processes: the same approval cycles, the same budgeting logic, the same release management. And it is evaluated by existing values: the same margin expectations, the same customer priorities, the same quarterly targets. The result is that the new resources are absorbed into the existing system, producing sustaining improvements to the current business rather than the transformative change the organisation intended.

Anthony Giddens described this as structuration: the way that structures are both produced by and productive of action. The processes and values of the organisation are not inert constraints. They are actively reproduced through the daily decisions of every employee, and through that reproduction they shape what kinds of decisions are thinkable. When the AI team’s budget request goes through the standard capital allocation process, the process itself determines which projects survive. Projects that fit the existing business model score well. Projects that challenge it score poorly. Not because anyone decided to block transformation, but because the process was designed to optimise the existing business, and it does so with impressive reliability.
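The mechanism Giddens describes can be made concrete with a deliberately simple sketch. It assumes a capital allocation process that screens proposals against a single gross-margin hurdle, borrowing the 40% and 15% figures from the discussion of values. The proposals, their margins, and the single-hurdle rule are hypothetical simplifications, not a model of any real budgeting system. Nothing in the code decides to block transformation, yet the one proposal aimed at non-consumers never gets through.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """A hypothetical funding request submitted to the standard process."""
    name: str
    gross_margin: float        # expected gross margin as a fraction, e.g. 0.40
    fits_core_business: bool   # recorded, but never consulted by the rule below


# Hypothetical hurdle encoding the organisation's "values":
# the margin profile the existing business model is accustomed to.
MARGIN_HURDLE = 0.40


def approve(proposals: list[Proposal], hurdle: float = MARGIN_HURDLE) -> list[str]:
    """Return the names of proposals whose expected margin clears the hurdle.

    The rule looks neutral. It never asks whether a project is sustaining
    or disruptive, only whether it matches the margin the core business
    already earns.
    """
    return [p.name for p in proposals if p.gross_margin >= hurdle]


proposals = [
    Proposal("AI triage for existing premium clients", 0.42, True),
    Proposal("Automate internal reporting", 0.45, True),
    Proposal("Low-cost AI advice for non-consumers", 0.15, False),
]

print(approve(proposals))
# ['AI triage for existing premium clients', 'Automate internal reporting']
```

In this sketch, the only change that lets the third proposal through is a lower hurdle, such as a separate unit with its own success metrics might apply. With that change, `approve(proposals, hurdle=0.10)` returns all three names.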

Talcott Parsons would add that the values dimension operates as a pattern-maintenance function. The organisation’s values, in Christensen’s sense, are the operational expression of what Parsons called the latent pattern-maintenance subsystem: the mechanism by which the organisation preserves its core identity and assumptions against perturbation. The AI initiative is a perturbation. The values system will absorb it, redirect it, and domesticate it until it serves the existing pattern rather than disrupting it.

3. Jobs to Be Done: The Clarity Mechanism That Most Organisations Lack

Christensen’s third major contribution addresses the question that the series has been building toward: how does an organisation get clear on what it should actually be doing?

Jobs to Be Done (JTBD) theory, developed with Bob Moesta and popularised in Competing Against Luck, reframes the fundamental question of purpose. Instead of asking “what products should we build?” or “what markets should we serve?”, it asks: “what job is the customer hiring our product to do?” The “job” is the progress a person is trying to make in a particular circumstance. It has functional dimensions (what the product must do), social dimensions (how the customer wants to be perceived), and emotional dimensions (how the product makes the customer feel).

Christensen’s famous milkshake example illustrates the power of the reframe. A fast-food company wanted to improve milkshake sales. Traditional market research (demographics, taste preferences, competitive analysis) produced incremental improvements that did not move sales. A JTBD analysis revealed that nearly half of milkshakes were sold before 8:30am to commuters who had a long, boring drive ahead and needed something that would occupy them for twenty minutes and keep hunger at bay until lunch. They were not buying a milkshake. They were buying a breakfast companion for a tedious commute. The competitors were not other milkshakes. They were bananas, bagels, doughnuts, and boredom. This reframe produced entirely different design criteria: thickness (to last the drive), chunkiness (to provide interest), portability (to work with one hand on the steering wheel).

The JTBD framework is a purpose clarity mechanism. It forces the organisation to articulate, with precision, what problem it is solving, for whom, under what circumstances. This is exactly the discipline that Peter Drucker demanded when he insisted that the purpose of a business is to create a customer, and that every organisational decision must be traceable to value created for someone outside the organisation. JTBD operationalises Drucker’s principle by providing a specific methodology for discovering what that value actually is.

The connection to specification-driven development is direct. The JTBD framework answers, at the strategic level, the same question that a specification answers at the implementation level: what exactly are we trying to achieve, for whom, under what constraints? A specification that cannot be traced to a job the customer needs done is a specification without purpose. It may be syntactically valid, structurally consistent, and fully tested, and it will still produce something nobody needs.
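One way to picture that traceability requirement is as a check a team could run over its specifications. The sketch below is hypothetical. The `Job` and `Spec` types, their fields, and the sample content are illustrative assumptions, not part of the JTBD literature or any tool. The job record mirrors the functional, social, and emotional dimensions described earlier and is loosely filled in from the milkshake example (the social dimension is invented for illustration). The check flags any specification that names no known job.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Job:
    """A job to be done: the progress someone is trying to make in a circumstance."""
    job_id: str
    circumstance: str
    functional: str  # what the product must do
    social: str      # how the customer wants to be perceived
    emotional: str   # how the customer wants to feel


@dataclass
class Spec:
    """A hypothetical implementation-level specification."""
    spec_id: str
    behaviour: str
    job_ids: list[str] = field(default_factory=list)  # jobs this spec claims to serve


def untraceable(specs: list[Spec], jobs: list[Job]) -> list[str]:
    """Return the ids of specs that do not reference any known job."""
    known = {j.job_id for j in jobs}
    return [s.spec_id for s in specs if not any(j in known for j in s.job_ids)]


# Illustrative job, loosely based on the milkshake example; the social
# dimension is an assumption, not taken from Christensen.
commute = Job(
    job_id="J1",
    circumstance="Long, boring morning drive, one hand on the steering wheel",
    functional="Keep hunger at bay until lunch",
    social="Eat something that is not messy in the car",
    emotional="Make the commute less tedious",
)

specs = [
    Spec("S1", "Shake stays thick enough to last about twenty minutes", ["J1"]),
    Spec("S2", "Add a loyalty badge to the app home screen"),  # names no job
]

print(untraceable(specs, [commute]))  # ['S2']
```

S2 may be well written and fully testable. In the terms used above, it is still a specification without purpose until someone can say which job it serves.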

4. Disruption as a Purpose Problem: Why AI Adoption Gets Stuck

Christensen’s three frameworks, taken together, explain a pattern visible in almost every large enterprise AI programme.

The disruption mechanism predicts that the organisation will invest in AI primarily to improve its existing products and processes (sustaining innovation) rather than to create fundamentally new value propositions. This is not a mistake. It is the rational response of an organisation whose processes and values are optimised for the current business.

The RPV framework predicts that even when leadership mandates transformative AI adoption, the organisation’s processes (budgeting, governance, approval, measurement) and values (margin thresholds, customer priorities, risk tolerance) will redirect that mandate into sustaining activity. The AI centre of excellence will produce efficiency improvements. It will not produce disruption. This is not because the people in the centre are unimaginative. It is because the organisational system within which they operate was designed to optimise the core business, and it does so with the reliability that Taylor’s scientific management always aspired to.

The JTBD framework reveals the deeper problem: most AI programmes do not know what job the AI is being hired to do. “Leverage AI for competitive advantage” is not a job. “Help the underwriting team process applications 40% faster” is closer, but it is still a sustaining improvement to an existing process. “Enable a customer who cannot currently afford specialist financial advice to get personalised guidance at a fraction of the cost” is a job. It is also, in Christensen’s terms, potentially disruptive, and therefore precisely the kind of opportunity the organisation’s values system will deprioritise.

Heifetz would say: the organisation is treating an adaptive challenge as a technical one. AI adoption is not a technical challenge of implementing tools. It is an adaptive challenge of discovering what the organisation should become, which requires changes in values, habits, and identity that cannot be specified in advance. The JTBD framework provides a method for that discovery, but the method itself requires the organisation to ask questions that its current structure is designed to prevent.

Richard Normann provides a strategic framing. His concept of the Prime Mover, the leader who reorganises the entire value constellation rather than competing within the existing one, maps directly onto Christensen’s distinction between sustaining and disruptive innovation. The leader who uses AI to make existing processes faster is competing within the constellation. The leader who uses AI to reconfigure the relationships between human expertise, machine capability, and customer self-service is attempting what Normann called ecogenesis: the creation of a new value constellation. Normann’s insight is that the second path requires conceptual elegance, not just organisational energy. You cannot disrupt your way to a new value constellation by doing the same things faster. You must reframe what is being done, for whom, and why.

5. The Separation Solution and Its Organisational Cost

Christensen’s prescription for the innovator’s dilemma is structural: create a separate organisational unit with its own processes and values, free from the gravitational pull of the core business. The separate unit must have its own resource allocation, its own cost structure, its own success metrics, and, critically, its own proximity to the customers whose jobs it is trying to understand. Only the CEO can ensure this separation survives, because only the CEO has the authority to override the core organisation’s natural impulse to absorb, redirect, or defund the disruptive effort.

This prescription has been widely adopted and widely misapplied. The “innovation lab” or “digital accelerator” is a common organisational response, but many such units fail because they achieve separation without purpose. They are structurally isolated from the core business but have not done the JTBD work to identify what job they are actually solving.

Weick adds a dimension that Christensen underplays. The separate unit must not only have different processes and values. It must have different sensemaking frameworks. The people in the unit must be able to perceive opportunities that are invisible to the core organisation. This requires not just structural separation but cognitive separation: different mental models, different frames of reference, different criteria for what counts as interesting. Weick’s concept of enacted sensemaking suggests that what the unit does will determine what it sees. If it is doing demonstrations for the executive committee, it will see opportunities that impress executives. If it is sitting with non-consumers trying to understand their struggles, it will see opportunities that serve unmet needs.

Stacey would challenge the entire notion of a planned separation. In his framework, genuine innovation emerges from patterns of interaction that nobody designs. The separate unit is itself a designed response to an emergent phenomenon, and designing the response risks domesticating the very novelty it aims to cultivate. This is not a fatal objection; Christensen would argue that the separate unit creates the conditions for emergence that the core organisation suppresses. But it is a caution: the unit’s purpose cannot be designed from above. It must be discovered through the iterative, messy, failure-rich process of doing actual work with actual customers on actual problems.

6. What Christensen Gets Wrong, and What the Critics Reveal

Christensen’s work has attracted serious criticism, most notably from historian Jill Lepore, whose 2014 New Yorker essay questioned the evidentiary basis of his case studies and argued that disruption theory lacks predictive power. Christensen himself acknowledged that disruption theory is frequently misapplied: not every competitive threat is disruptive, not every startup will defeat the incumbent, and incumbents sometimes respond successfully.

The most substantive criticism, for this series, is not about whether the theory predicts correctly. It is about what the theory assumes about organisational agency. Christensen’s disruption mechanism is structural and deterministic: the incumbent’s processes and values make an adequate response nearly impossible. This leaves little room for the kind of organisational learning that Argyris and Senge describe, in which organisations can, under the right conditions, surface their own assumptions and change their own behaviour. Christensen’s prescription (create a separate unit) is a structural workaround for a learning failure. It does not address the learning failure itself.

Dweck’s research, if extended from individuals to organisations, suggests that the “fixed” quality Christensen attributes to organisational values may be more malleable than his theory implies. A fixed mindset treats capability as static: the organisation’s values are what they are, and the only response is structural separation. A growth mindset treats capability as developable: perhaps the organisation can learn to hold multiple value systems simultaneously, evaluating opportunities against both sustaining and disruptive criteria. This is not easy. But dismissing it as impossible may be premature.

7. What Christensen Contributes to the Series

For AI transformation specifically, Christensen contributes three things that the thinkers already reviewed do not provide.

First, a market-level explanation for why organisations invest in AI and still fail to transform. The disruption mechanism explains this not as a leadership failure or a cultural problem but as a structural consequence of rational management within an optimised system. This complements the psychological explanations (Argyris, Dweck), the sociological explanations (Bourdieu, Giddens), and the systems explanations (Beer, Stacey) already in the series.

Second, the RPV framework provides a diagnostic tool that connects market dynamics to organisational capability. When a CTO asks “why can we not adopt AI more effectively?”, the RPV framework directs attention away from resources (where most organisations look first) and toward processes and values (where the actual constraints operate).

Third, Jobs to Be Done provides a methodology for the purpose clarity that the series has identified as the precondition for effective action. It is not sufficient on its own; it must be connected to domain modelling, to specification-driven development, and to the organisational learning conditions documented in the first phase. But it provides the strategic starting point: before you can specify what to build, you must understand what job the customer needs done.

The synthesis is this: Christensen explains why organisations rationally fail to disrupt themselves. The series’ earlier thinkers explain the psychological, social, and structural mechanisms through which that failure is enacted. And the JTBD framework, connected to the specification practices described in the Deciding phase, provides a path from vague aspiration to precise purpose. The organisation that can answer “what job is the customer hiring us to do?”, trace that answer through domain models into bounded contexts, and express those contexts as specifications precise enough for AI to act on, has solved the clarity problem. The organisation that cannot is producing strategy decks.

8. LLMs as Disruptors: When the New Entrant Improves Faster Than the Theory Predicted

Christensen’s disruption mechanism assumes a particular tempo. The new entrant starts inferior, improves along a sustaining trajectory, and eventually meets mainstream needs. In the cases he studied (disk drives, steel minimills, discount retail), that trajectory took years, sometimes decades. Incumbents had time. They chose not to respond, but they had time.

Large language models have compressed that timeline to the point where Christensen’s own framework needs updating.

In March 2025, the AI evaluation organisation METR published research showing that the length of software engineering tasks AI models can complete autonomously has been doubling approximately every seven months since 2019. METR measures this length in human working time, as the length of task a model completes successfully about half the time. By January 2026, METR’s updated analysis showed the post-2023 trend had actually accelerated: the doubling time had shortened to roughly 89 days. In late 2025, Anthropic’s Claude Opus 4.5 was able to complete, about half the time, tasks that would take a human professional approximately five hours, a capability that exceeded even the exponential trend’s predictions.

This is not the slow, steady upmarket march that Christensen documented. This is a capability frontier that shifts faster than any organisational planning cycle can track. And it is producing disruption patterns that are both recognisable from Christensen’s framework and fundamentally different in their dynamics.

Customer service: the Klarna experiment. Klarna, the Swedish fintech company, ran what amounts to a live test of AI disruption against its own operations. In 2023, the company partnered with OpenAI. In early 2024 it deployed an AI assistant that, in its first month, handled two-thirds of customer service chats: approximately 2.3 million conversations in over 35 languages. CEO Sebastian Siemiatkowski claimed the AI was doing the work of 700 customer service agents. Klarna stopped hiring and let attrition reduce headcount from 5,500 to roughly 3,400, and Siemiatkowski publicly declared that AI could already do all human jobs.

By mid-2025, Klarna reversed course. Customer satisfaction had dropped. The AI handled routine queries well but failed on anything requiring empathy, nuance, or complex problem-solving. Siemiatkowski admitted the company had “focused too much on efficiency and cost” and that the result was “lower quality.” Klarna began rehiring human agents in a freelance model while repositioning AI as a support tool rather than a replacement.

Christensen would recognise the pattern instantly. Klarna treated a disruptive technology as if it were a sustaining one: as if you could swap it into the existing value chain and get the same outcomes at lower cost. But the “job” the customer was hiring customer service to do was not “answer my question quickly.” It was “make me feel heard, resolve my specific situation, and give me confidence that someone is accountable.” The AI performed the functional dimension of the job but failed the social and emotional dimensions. JTBD analysis would have predicted this. Klarna’s values (margin improvement, headcount reduction, operational efficiency) were precisely the sustaining-innovation values that Christensen warned would misdirect the application of a disruptive technology.

But here is what makes LLM disruption different from disk drives. When Klarna reversed course, the AI had not stopped improving. The models available in early 2026 are substantially more capable than those Klarna deployed in 2024. The question is not whether AI customer service failed. It is whether the failure was permanent or temporary: whether the gap between what the AI could do and what the job required is closing at the rate METR’s trend implies. If it is, then Klarna’s reversal is not a vindication of human customer service. It is an interlude.

Content creation: the Duolingo acceleration. Duolingo’s AI-first pivot illustrates the production-side disruption that Christensen’s theory handles well. In April 2025, CEO Luis von Ahn announced that the company would “gradually stop using contractors to do work that AI can handle.” Within days, Duolingo launched 148 new AI-created language courses; content that would previously have taken a decade to produce manually was completed in under a year.

This is classic Christensen: the AI produces output that is cheaper, faster, and initially somewhat lower quality (“we’d rather move with urgency and take occasional small hits on quality,” von Ahn wrote). The contractors, like Christensen’s incumbent, were serving the existing market well. But the AI’s cost structure makes a fundamentally different value proposition possible: not marginally better courses, but dramatically more courses, covering languages and markets that could never justify the cost of human content creation. The non-consumers (learners of minority languages, niche language combinations, low-revenue markets) become accessible. That is textbook low-end and new-market disruption operating simultaneously.

Software development: disruption within disruption. The AI coding tools market reveals something Christensen’s framework did not anticipate: disruption happening so fast that the disruptors are being disrupted before the incumbents have finished responding.

GitHub Copilot, backed by Microsoft, launched in 2021 and by 2025 had reached 20 million users and deployment in 90% of Fortune 100 companies. It is, in Christensen’s terms, the incumbent’s response to AI-assisted development: integrated into the existing IDE ecosystem and designed to sustain existing workflows by making them faster. Copilot is a sustaining innovation. It helps developers do what they already do, more efficiently.

Cursor, built by four MIT graduates, took a different approach. Rather than bolting AI onto an existing editor, they forked VS Code and rebuilt it as an AI-native environment where the model understands entire repositories and makes coordinated changes across multiple files. Cursor reached a $29.3 billion valuation in under two years and hit $1 billion in annualised revenue. Meanwhile, Claude Code introduced a third paradigm: terminal-based agentic coding where you hand a task to the AI and it comes back done.

The market fragmented into a near three-way split between Copilot, Cursor, and Claude Code within 18 months. This is not the multi-year disruption cycle of steel minimills. This is architectural disruption at startup speed, where even the disruptors cannot establish a stable position before the next paradigm arrives.

And here is the uncomfortable finding: a 2025 METR randomised controlled trial with 16 experienced open-source developers found that they took 19% longer to complete tasks when they were allowed to use AI coding tools, while believing those tools had made them 20% faster. The gap between perceived and actual productivity was nearly 40 percentage points. The industry built on the premise that AI makes programmers dramatically more productive has yet to demonstrate that claim under controlled conditions for experienced developers working on their own codebases. Argyris would note that this is precisely the kind of evidence that defensive routines are designed to suppress: the data that challenges the strategic premise on which billions of investment depend.

Legal services: the slow-motion disruption. Legal work follows a different tempo. By early 2026, 64% of legal organisations reported actively integrating LLMs into their workflows, primarily for document review, research, and compliance. The technology handles the low-end work (summarising cases, drafting standard clauses, identifying relevant precedent), while human lawyers retain the high-value work of strategy, advocacy, and judgment.

This looks like the early stages of a textbook Christensen disruption. The AI enters at the bottom of the market, doing work that is too routine or low-margin for experienced lawyers to justify their time on. The legal profession rationally focuses on higher-margin advisory work. Meanwhile, the AI improves. Companies like Darrow and Harvey are building legal-specific AI systems that perform tasks once requiring qualified professionals. The question Christensen would pose: at the current rate of improvement, when does the AI’s capability meet the mainstream client’s needs for all but the most complex matters?

The legal profession’s response so far has been classic incumbent behaviour: absorb the technology as a sustaining tool (AI-assisted lawyers do more billable work), invest in higher-margin services, and move upmarket. Christensen’s framework predicts exactly what happens next.

What LLMs reveal about disruption theory itself. These examples expose a limitation in Christensen’s original framework that matters for anyone leading transformation.

His model assumes that the pace of disruption is slow enough for organisations to choose between responding and not responding. The incumbent has time to study the threat, evaluate the technology, create a separate unit, discover the right jobs to be done, and build a new business model.

When capability doubles every seven months, or every 89 days, that assumption collapses. The planning cycle for creating a separate organisational unit (identifying the opportunity, securing sponsorship, recruiting a team, establishing different processes and values) takes longer than a full doubling of the disruptive technology’s capability. By the time the separate unit is operational, the technology it was designed to exploit has moved on.
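The arithmetic behind this claim is ordinary exponential growth. Over t days, a quantity with doubling time d grows by a factor of 2^(t/d). The sketch below applies the two doubling times quoted in this article, converting seven months at roughly 30.4 days per month, to an assumed 12-month planning cycle. The 12-month figure is an illustrative assumption, since the article gives no number. The result describes METR’s task-length metric rather than “capability” in general, and it only holds if the trend continues.

```python
# Hypothetical planning horizon: the article gives no figure, so 12 months
# is an illustrative assumption for standing up a separate unit.
PLANNING_CYCLE_DAYS = 365

# Doubling times quoted in the text: ~7 months (at ~30.4 days per month)
# and ~89 days for the post-2023 trend.
DOUBLING_TIMES_DAYS = {"~7 months": 7 * 30.4, "~89 days": 89}


def growth_factor(elapsed_days: float, doubling_days: float) -> float:
    """Multiplicative change after elapsed_days of steady exponential doubling."""
    return 2 ** (elapsed_days / doubling_days)


for label, d in DOUBLING_TIMES_DAYS.items():
    doublings = PLANNING_CYCLE_DAYS / d
    factor = growth_factor(PLANNING_CYCLE_DAYS, d)
    print(f"{label}: {doublings:.1f} doublings, ~{factor:.1f}x over the cycle")
```

Under these assumptions the script reports about 1.7 doublings (roughly 3.3x) at the seven-month rate and about 4.1 doublings (roughly 17x) at the 89-day rate. On either figure, the technology is a different object by the time a year-long planning exercise finishes.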

This does not invalidate Christensen. It radicalises him. If the innovator’s dilemma was already difficult when disruption took years, it becomes structurally impossible when disruption takes months. The RPV framework becomes not just a diagnostic but an alarm: every month that existing processes and values constrain the organisation’s response, the gap between what the AI can do and what the organisation permits it to do widens. Stacey’s insight becomes more urgent: in conditions of genuine novelty, the only viable strategy is continuous experimentation at the pace of change, not planned responses to yesterday’s capability.

The organisation that treats AI transformation as a programme with a fixed scope and a multi-year roadmap is making the innovator’s error at double speed. The organisation that builds the capacity for continuous, small-scale experimentation (what Weick called “small wins”) has a chance of keeping pace. Not because it can predict where the technology is going, but because it can respond to where the technology actually is, at the tempo the technology demands.

(An Organisational Prompt is something you can do now...)

The Cannibalisation Question

Find the person who controls your AI initiative’s budget. Ask them: “If this initiative could create a new revenue stream worth £2 million but required cannibalising an existing stream worth £8 million, would you approve it?”

The speed of the answer tells you more than the answer itself. If there is hesitation, qualification, or a redirect to “let’s discuss that offline,” your organisation’s values permit sustaining innovation only. That is not a moral failing; it is a structural fact. But it means your AI transformation will produce efficiency gains, not disruption, regardless of what the strategy deck promises.

Further Reading

Clayton Christensen: The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail - The foundational work. Read it for the disruption mechanism and the RPV framework. The disk drive case studies remain the most rigorous exposition; the principles apply directly to AI adoption in enterprises, with the caveat about tempo discussed in section 8.

Clayton Christensen, Michael Raynor: The Innovator’s Solution: Creating and Sustaining Successful Growth - The prescriptive companion to The Innovator’s Dilemma. Read it for the structural responses to disruption, including the separation strategy and the RPV diagnostic applied to new growth businesses.

Clayton Christensen, Taddy Hall, Karen Dillon, David Duncan: Competing Against Luck: The Story of Innovation and Customer Choice - The most complete statement of Jobs to Be Done theory. Read it for the methodology of purpose clarity; the milkshake story is chapter one, but the depth of the framework emerges across the full text.

Clayton Christensen, Michael Horn, Curtis Johnson: Disrupting Class: How Disruptive Innovation Will Change the Way the World Learns - Disruption theory applied to education. Read it for the pattern of applying the theory beyond commercial markets, and for the insight that modular architectures enable disruption in knowledge-intensive domains.

Clayton Christensen, James Allworth, Karen Dillon: How Will You Measure Your Life? - Christensen applies his business frameworks to personal decisions. Read it for the argument that the same structural forces that cause corporate failure (optimising for short-term metrics, neglecting investments that do not show immediate returns) operate in individual careers.

Jill Lepore: The Disruption Machine - The most prominent critique of disruption theory. Read it for the evidentiary challenges, the limitations of the case study method as applied to prediction, and the broader cultural implications of making “disruption” a managerial imperative.

METR: Measuring AI Ability to Complete Long Tasks - The time-horizon metric showing that the length of software tasks AI agents can complete autonomously has been doubling approximately every seven months since 2019. Essential context for understanding why LLM disruption operates on a fundamentally compressed timeline. Updated in Time Horizon 1.1 (2026), which shortened the post-2023 doubling time to approximately 89 days.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.