How the Idea of Falsification Shapes Our Thinking About Discovery

Karl Popper Argues That You Cannot Know What Your Strategy Will Achieve, Only What Would Prove It Wrong. Is That Right?

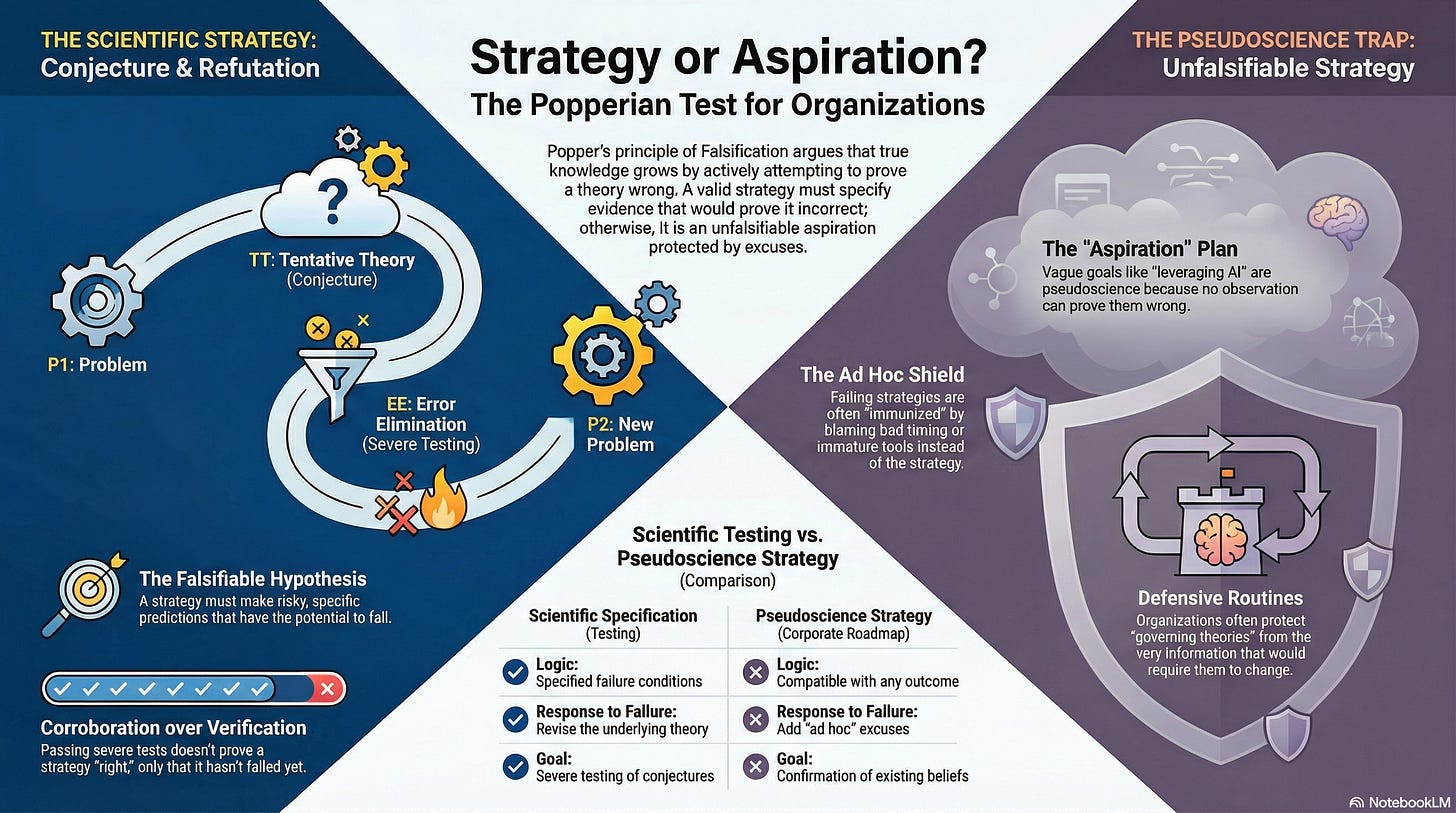

Your organisation has a strategy. It probably has a vision statement, a set of objectives, a roadmap, and a quarterly review cycle. If it is an AI transformation programme, it has something like: “Leverage AI to drive innovation and efficiency across the enterprise.” The budget was allocated. The work began.

Now ask a question: what would prove it wrong?

Not “what would slow it down” or “what risk might materialise.” What evidence, observed in the real world, would cause the leadership team to conclude that the strategy itself, the theory of value creation sitting beneath the roadmap, is incorrect? What specific outcomes, if they occurred, would falsify the proposition that, for instance, “AI-assisted specification writing will reduce rework and therefore increase productivity by X%”, or that deploying large language models in the underwriting process will improve decision quality?

If you cannot answer that question, then according to Karl Popper, you do not have a good theory.

Strategy IS a theory. It is a theory about how value will be created. And you need a mechanism for learning whether it is working.

Karl Popper (1902-1994) was an Austrian-British philosopher who spent six decades arguing that humanity’s most powerful method for producing reliable knowledge, science, works not by proving things true but by proving things false. Born in Vienna, peripherally connected to the Vienna Circle of logical positivists but rejecting their core doctrines, he fled the rise of Nazism and spent the war years in New Zealand writing The Open Society and Its Enemies (1945). He moved to the London School of Economics in 1946, where he became Professor of Logic and Scientific Method, and was knighted in 1965. His influence extends far beyond academic philosophy: his student George Soros named his philanthropic foundation the Open Society Institute in Popper’s honour; the biologist Peter Medawar called him “incomparably the greatest philosopher of science that has ever been”; and in 1993, the United States Supreme Court cited his concept of falsifiability as a key factor in determining whether expert testimony qualifies as scientific knowledge. Few philosophers of science have had their ideas written into case law. That’s not a bad CV.

Popper’s falsificationism has been subjected to rigorous critique by Kuhn, Lakatos, Feyerabend, Quine, and others, who argue, with considerable force, that it describes how science should work rather than how it actually does, and that even the “should” is more complicated than the elegant formulation suggests. (They will be the subject of a future article that takes this in a different direction.)

The Duhem-Quine problem (when a prediction fails, you cannot know whether the theory or the auxiliary assumptions are at fault) is real and consequential, and it has many links to strategic post-mortems. These criticisms matter and are addressed later. What follows treats Popper as the thinker who gave the modern world its dominant account of what makes knowledge reliable, asks why that account has become so deeply embedded in public consciousness that most educated people now think “scientific” and “falsifiable” are synonyms, and then asks what happens when organisations adopt the vocabulary of testing without the discipline of actually specifying what would count as failure.

1. The Problem That Started Everything: How Do You Tell Science from Pseudoscience?

Popper’s motivating problem was not abstract. As a young man in Vienna around 1919, he encountered three theories that claimed the authority of science: Einstein’s general relativity, Freud’s psychoanalysis, and Adler’s individual psychology. He also encountered Marx’s theory of history, which claimed to predict the future development of society on scientific grounds. All four were intellectually sophisticated. All four had devoted followers. All four produced explanations of observed phenomena that their proponents found compelling.

But Popper noticed something that troubled him. Einstein’s theory was risky. It predicted that light would bend around massive objects; if the 1919 Eddington expedition had observed no bending, the theory would have been refuted. Einstein himself specified what would prove him wrong. He took the risk of being incorrect, and the universe could have punished him for it.

Freud and Adler were different. Whatever a person did, the theory could explain it. Popper’s own illustration uses two opposite actions. A man pushes a child into the water intending to drown it: Freud explains it as repression; Adler explains it as feelings of inferiority, the need to prove that he dared to commit the crime. A man sacrifices his life trying to save the child: Freud explains it as sublimation; Adler explains it, again, as feelings of inferiority, the need to prove that he dared to attempt the rescue. Every observation confirmed the theory. No conceivable observation could refute it. The theory was, in Popper’s terminology, unfalsifiable: not because it was false, but because it was compatible with any possible state of affairs. It excluded nothing, and therefore, by Popper’s argument, it explained nothing.

Marxism sat somewhere between the two. It had originally made specific, testable predictions about the course of history: the progressive immiseration of the working class, revolution in the most advanced industrial economies. When these predictions failed (revolution came to agrarian Russia, not industrial England; living standards in capitalist economies rose rather than fell), Marx’s followers did not revise the theory. They added protective modifications: false consciousness explained why workers did not revolt; imperialism explained why capitalism had temporarily stabilised. Each modification made the theory compatible with the new evidence. Each modification made it less falsifiable. Marxism had begun as science and degenerated into what Popper would call pseudoscience: not because it was necessarily wrong, but because its proponents had made it impossible to be shown wrong. [Note: I have different views from this on the role of grand explanatory theories like Marxism which I will get into in a later article.]

This gave Popper his demarcation criterion: the line between science and non-science is not the line between truth and falsehood, nor between the observable and the speculative. It is the line between theories that take the risk of being refuted by observation and theories that do not. A theory is scientific if, and only if, it specifies what would count as evidence against it. The power of a theory lies not in what it can explain, but in what it forbids.

2. The Logic: Why You Can Never Prove You Are Right, But You Can Sometimes Prove You Are Wrong

Popper’s epistemological foundation rests on a logical asymmetry rooted in the problem of induction, which David Hume had identified nearly two centuries earlier, but which Popper was the first to take fully seriously as a method.

The asymmetry is this: no number of confirming observations can prove a universal statement true, but a single genuine counter-observation can prove it false. You may observe a thousand white swans. You may observe ten thousand. Each observation is consistent with the proposition “all swans are white.” But none of them proves it, because the next swan you observe might be black. The moment you find one black swan, the proposition is conclusively refuted. Confirmation is logically weak. Refutation is logically decisive.
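The asymmetry can be made concrete in a few lines of Python. This is only an illustrative sketch: the function name `find_counterexample` and the swan data are invented for the example, not drawn from Popper. The point it shows is that a search over observations can return a refutation, but the best it can ever say about a universal claim is "not refuted so far".

```python
from typing import Callable, Iterable, Optional, TypeVar

T = TypeVar("T")


def find_counterexample(
    claim: Callable[[T], bool], observations: Iterable[T]
) -> Optional[T]:
    """Return the first observation that contradicts a universal claim.

    A result of None does NOT mean the claim is proved. It only means
    that none of the observations examined so far has refuted it.
    """
    for observation in observations:
        if not claim(observation):
            return observation  # one genuine counter-instance is decisive
    return None  # corroborated so far, never verified


def all_swans_are_white(swan_colour: str) -> bool:
    # The universal statement under test (hypothetical example data).
    return swan_colour == "white"


ten_thousand_white_swans = ["white"] * 10_000

# Ten thousand confirmations: still only "not yet refuted".
print(find_counterexample(all_swans_are_white, ten_thousand_white_swans))

# One black swan after all those confirmations: refuted.
print(find_counterexample(all_swans_are_white, ten_thousand_white_swans + ["black"]))
```

Note that the function has no return value meaning "proved true". That absence is the asymmetry: the only informative outcome is a counterexample.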

Most people, including most scientists, work the other way round. They look for evidence that supports their hypothesis. They gather confirming instances. They build a case. Popper argued that this is both logically invalid and practically dangerous, because confirmation bias, the tendency to notice evidence that supports your existing belief and to ignore or reinterpret evidence that contradicts it, makes it trivially easy to find confirmation for almost anything. The person who believes in astrology will find daily confirmations in the newspaper. The executive who believes the AI strategy is working will find confirming data in every steering committee report. The question is not whether confirming evidence exists. It always exists. The question is whether the hypothesis has survived a serious attempt to destroy it.

Popper proposed a radically different account of how knowledge grows. It proceeds not by induction (accumulating evidence until certainty emerges) but by conjecture and refutation: bold guesses subjected to severe tests.

The cycle begins with a problem. Someone proposes a tentative theory to solve it. The theory is subjected to attempts at error elimination: critical discussion, empirical testing, the active search for counter-evidence. The process generates new problems, which provoke new conjectures, which are tested in turn. Knowledge grows from old problems to new problems through cycles of conjecture and refutation. The cycle never terminates. There is no final, certain knowledge. There is only provisional, conjectural knowledge that has survived criticism so far.

A theory that survives severe testing is not verified. It is corroborated: it has withstood attempts at refutation, which is the strongest endorsement Popper will allow. Corroboration is backward-looking. It says that this theory has not yet been shown to be wrong. It makes no promise about the future. The theory that has worked for twenty years may fail tomorrow. The strategy that produced results last quarter may be built on assumptions that the next quarter will expose.

This is why Popper insisted that science does not rest on solid bedrock. It rests, in his vivid metaphor, on piles driven into a swamp: you stop driving them deeper not because you have reached firm ground, but because you are satisfied, for now, that they are firm enough to carry the structure.

3. The Seepage: How Falsification Became the Public Definition of Science

Popper’s ideas are not confined to philosophy departments. They have seeped into public consciousness more deeply than those of any other philosopher of science, to the point where most educated people now equate “scientific” with “testable” and “testable” with “falsifiable,” without knowing they are channelling Popper.

The seepage happened through several channels simultaneously.

The first was Peter Medawar. The Nobel laureate biologist was one of the most gifted science communicators of the twentieth century, and he was an outspoken Popperian. In his BBC Reith Lectures of 1959, his popular books, and his public appearances, Medawar consistently credited Popper for the success of science. Richard Dawkins, in turn, credited Medawar as the model of how a scientist should think and communicate. Through this chain, Popperian falsificationism became the working philosophy of science for a generation of publicly engaged scientists, from Dawkins to Carl Sagan. When a popular science communicator says “science works by trying to prove itself wrong,” they are stating Popper’s position, usually without attribution.

The second channel was education. The account of the scientific method taught in schools across the English-speaking world (formulate a hypothesis, design an experiment to test it, see if the results falsify it) is a simplified Popperian account. It is so ubiquitous that most people who studied science at school believe this is simply what science is, not that it is one philosopher’s contested account of what science should be. The vocabulary of hypothesis testing, falsification, and the null hypothesis has become the default framework through which laypeople understand scientific practice.

The third, and perhaps most consequential, channel was the law. In 1993, the United States Supreme Court decided Daubert v. Merrell Dow Pharmaceuticals, a case about whether expert scientific testimony was admissible as evidence. The Court, citing Popper directly, ruled that falsifiability was a key factor in determining whether expert testimony qualifies as scientific knowledge. The ruling established what is now called the Daubert standard, which requires federal judges to evaluate the reliability of scientific evidence partly by asking whether the theory or technique in question “can be (and has been) tested.” Chief Justice Rehnquist noted in his partial dissent that he was unsure what falsifiability actually meant and suspected other federal judges would be equally uncertain. His concern was prescient. The Daubert standard has been applied in thousands of cases across the United States; it has shaped how pharmaceutical evidence is admitted, how forensic techniques are evaluated, and how environmental science is weighed in litigation. Popper’s philosophical concept became a legal instrument with practical consequences for people’s lives and livelihoods.

The cumulative effect is this: Popper won the public argument about what science is, even as professional philosophers of science were dismantling his position. Most working scientists, if asked to describe the scientific method, will give an account that is recognisably Popperian. Most philosophers of science, if asked whether Popper’s account is adequate, will say it is not. The gap between the popular understanding and the professional critique is one of the most significant disconnects in contemporary intellectual life.

4. Why It Matters for Organisations: The Unfalsifiable Strategy

The seepage of Popperian language into public consciousness has an unexpected organisational consequence: leaders who use the vocabulary of testing without the discipline of specifying what would count as failure.

Consider the typical AI transformation strategy. “We will leverage AI to drive innovation and efficiency across the enterprise.” What observation would refute this? What would the leadership team accept as evidence that the strategy is wrong, not merely that the implementation is slow, the tools are immature, or the teams are not yet ready?

Nothing. No conceivable outcome refutes the strategy, because it excludes nothing. If AI-assisted development reduces rework, the strategy is confirmed. If it does not reduce rework, the team was not ready, the tool was not the right one, or the process was not mature. Each of these is what Popper would call an ad hoc hypothesis: a protective modification that preserves the original theory from refutation by explaining away the disconfirming evidence. It is the move that Chris Argyris identified as the core of defensive routines: the organisation protects its governing theory from the information that would require it to change.

This is not a trivial parallel. The entire structure of double-loop learning, Argyris’s central contribution, is Popperian in form. Single-loop learning adjusts actions within the existing theory: the thermostat that turns the heating on when the temperature drops below a set point. Double-loop learning questions the theory itself: perhaps the set point is wrong; perhaps the building needs insulation rather than heating. In Popper’s terms, single-loop learning operates within the current conjecture; double-loop learning asks whether the conjecture has been refuted.

Most organisations, as Argyris spent forty years demonstrating, are structurally incapable of double-loop learning. They do not specify what would falsify their strategy. They do not design severe tests. They do not welcome disconfirming evidence. They do the opposite: they treat the strategy as verified by the act of approving it, and then protect it with ad hoc explanations whenever reality fails to cooperate. The budget was insufficient. The market shifted. The technology was not mature. The governance was not in place. Each explanation may be locally true. The pattern of producing them is the problem.

5. The Emotional Cost of Falsification: Why Organisations Resist It

Popper’s epistemology is logically compelling and psychologically brutal. Welcoming the refutation of your beliefs requires precisely the kind of emotional resilience that Seligman, Dweck, and Kegan describe, and that most organisational cultures actively discourage.

In Dweck’s terms, Popper demands a growth mindset applied to strategy. A fixed mindset treats the current strategy as a reflection of the leader’s competence: if the strategy is wrong, the leader is inadequate. A growth mindset treats the strategy as a conjecture: if it is wrong, the leader has learnt something. The difference is not just attitudinal. It determines what information the organisation is capable of processing.

Ronald Heifetz’s distinction between technical and adaptive challenges maps precisely onto Popper. Technical challenges are problems that can be solved within the current theory: optimise the prompt, retrain the model, adjust the workflow. Adaptive challenges require revising the theory itself: perhaps the AI strategy is wrong not because the implementation is faulty but because the underlying assumptions about where value lies are incorrect. Popper’s method is the method for adaptive work: make the assumptions explicit, specify what would refute them, test them, and revise.

But Heifetz also explains why this is so difficult. Adaptive work generates anxiety, because it asks people to question beliefs that are connected to their professional identity. The architect whose career was built on a particular architectural philosophy cannot easily welcome evidence that the philosophy is obsolete. The transformation director whose bonus depends on the programme’s success cannot easily specify what would count as failure. The organisation that has publicly committed to an AI strategy cannot easily admit that the strategy’s core hypothesis was wrong. Falsification of professional identity is not the same as falsification of a scientific theory; it involves loss, grief, and what Anthony Giddens (to be discussed shortly) calls ontological insecurity, the threat to the taken-for-granted assumptions that make daily professional life possible.

Ralph Stacey would add that the anxiety is not merely individual. It is relational. Organisations are patterns of interaction, and the governing strategy is one of the patterns that holds those interactions in place. To falsify the strategy is to destabilise the pattern, and people will defend the pattern not because they believe the strategy is correct, but because the pattern provides the predictability that makes organisational life bearable. The ad hoc hypothesis is not just a logical error. It is a defence against the anxiety of not knowing what comes next.

Ron Westrum’s typology of organisational cultures provides the clearest frame. A pathological culture suppresses the messenger: the person who brings disconfirming evidence is punished. A bureaucratic culture ignores the messenger: the disconfirming evidence is processed through channels that strip it of urgency and specificity. A generative culture trains the messenger: the disconfirming evidence is actively sought, welcomed, and used to revise the organisation’s conjectures. Popper’s method requires a generative culture. Most organisations operate at the bureaucratic level: not actively hostile to disconfirming evidence, but structurally incapable of processing it fast enough to revise the theory before the next quarterly review.

6. Popper and Specification: Why a Good Specification Is a Conjecture

For technologists, Popper’s deepest contribution is not about strategy at all. It is about the nature of a specification.

A specification, properly written, is a Popperian conjecture. It says: “We believe the system should behave in this way, under these conditions, producing these outcomes.” A test suite is a set of attempts at refutation: each test case specifies conditions under which the specification would be proved wrong. The system is corroborated when it passes the tests: not verified, because there may be conditions the tests did not cover, but corroborated, meaning it has survived the attempts at falsification that the team could devise.

The specification is the conjecture. The acceptance criteria are the potential falsifiers. The implementation is an attempt to produce behaviour consistent with the conjecture. The tests are the severe tests. When a test fails, the specification (or the implementation, or both) has been falsified, and the cycle of revision begins.

The implication for AI-augmented development is direct. When a developer writes a specification and asks an AI model to generate the implementation, the specification functions as the conjecture and the test suite functions as the falsification mechanism. If the test suite is weak (few tests, no edge cases, no adversarial conditions), the implementation is weakly corroborated: it might be correct, but nobody has tried hard enough to prove it wrong. If the test suite is severe (comprehensive coverage, edge cases, adversarial prompts, boundary conditions), the implementation that survives it is strongly corroborated.
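A minimal Python sketch makes the difference between weak and severe corroboration visible. Everything in it is hypothetical and chosen for illustration: the discount specification, the function `naive_apply_discount` (standing in for a generated implementation), and the two test suites. Each falsifier is a check that returns True when it has caught the implementation violating the specification.

```python
from typing import Callable, List, Tuple

# Assumed specification (the conjecture): apply_discount(price, percent)
# returns the price reduced by percent, and rejects any percent outside
# 0..100 by raising ValueError.
Implementation = Callable[[float, float], float]
Falsifier = Tuple[str, Callable[[Implementation], bool]]


def naive_apply_discount(price: float, percent: float) -> float:
    # Hypothetical stand-in for a generated implementation.
    return price * (1 - percent / 100)


def accepts_invalid_input(impl: Implementation, price: float, percent: float) -> bool:
    """True if impl fails to raise ValueError for an out-of-range percent."""
    try:
        impl(price, percent)
    except ValueError:
        return False
    return True


# Weak suite: happy-path cases only.
WEAK_SUITE: List[Falsifier] = [
    ("50% off 200 is 100", lambda f: f(200.0, 50.0) != 100.0),
    ("0% leaves the price unchanged", lambda f: f(80.0, 0.0) != 80.0),
]

# Severe suite: the same cases plus boundary and adversarial inputs.
SEVERE_SUITE: List[Falsifier] = WEAK_SUITE + [
    ("100% off yields zero", lambda f: f(40.0, 100.0) != 0.0),
    ("percent above 100 is rejected", lambda f: accepts_invalid_input(f, 100.0, 150.0)),
    ("negative percent is rejected", lambda f: accepts_invalid_input(f, 100.0, -5.0)),
]


def attempt_refutation(impl: Implementation, suite: List[Falsifier]) -> List[str]:
    """Return the names of the falsifiers that succeeded against impl."""
    return [name for name, violated in suite if violated(impl)]


print(attempt_refutation(naive_apply_discount, WEAK_SUITE))    # no refutations found
print(attempt_refutation(naive_apply_discount, SEVERE_SUITE))  # two refutations found
```

The same implementation survives the weak suite and is refuted by the severe one. Surviving the weak suite says little about the code and a great deal about how hard anyone tried to break it.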

This reframes the entire debate about AI-generated code quality. The question is not “Can AI write correct code?” (a verification question, logically unanswerable). The question is “Has anyone tried to prove that the AI-generated code is wrong or ineffective?” (a falsification question, practically answerable). The quality of AI-generated code is a function of the severity of the tests it has survived, not the confidence with which it was produced.

7. The Open Society and the Open Organisation

Popper’s political philosophy, developed in The Open Society and Its Enemies, is the direct political consequence of his epistemology. If knowledge is always provisional, if no theory can be verified, and if the best we can do is identify and eliminate errors, then any political system that claims to possess certain knowledge of the correct social arrangement is intellectually fraudulent and practically dangerous.

Popper attacked Plato, Hegel, and Marx as intellectual ancestors of totalitarianism: thinkers who believed they had identified the laws of history or the blueprint of the ideal society, and whose followers therefore felt justified in imposing that blueprint on others by force. Against this, Popper argued for what he called piecemeal social engineering: small, reversible, testable interventions in social arrangements, each designed to solve a specific problem, each subject to evaluation and revision. Not utopian transformation. Not comprehensive blueprints. Not five-year plans. Instead, the social equivalent of conjecture and refutation: try something, observe the result, revise. The parallel to organisational transformation is startling, and the links to Stacey, Snowden and others are obvious: they make the same argument for small, testable experiments (probes).

The open organisation, like the open society, is one that treats its strategies as conjectures, its structures as provisional, and its failures as information rather than evidence of blame. Sidney Dekker’s Just Culture, in which failure is treated as data rather than grounds for punishment, is the organisational form that Popperian epistemology requires. A failure is a falsification. It refutes the theory that the current process is adequate. It should be welcomed as information that enables revision. The blame culture is the anti-Popperian culture: it punishes the messenger, thereby ensuring that the organisation never receives the disconfirming evidence it needs to revise its conjectures.

8. The Limits: What Popper Does Not Explain

Popper provides the logic of how organisations should produce and test knowledge. He does not provide the sociology of why they fail to do so, the psychology of why individuals resist it, or the politics of why power structures prevent it.

Kuhn’s critique is the most important. Kuhn argued, in The Structure of Scientific Revolutions (1962), that Popper described only the revolutionary episodes of science and ignored the productive, puzzle-solving work of normal science, the long periods during which scientists operate within an accepted paradigm, refining it rather than questioning it. For organisations, this means that permanent revolution is as dysfunctional as permanent normal science. You cannot falsify everything simultaneously. You need stretches of stable operation in which the current theory is applied, extended, and refined. The challenge is knowing when you are in a period of productive puzzle-solving and when the accumulating anomalies indicate that the paradigm itself has failed.

Lakatos refined Popper’s position by arguing that what matters is not whether individual theories are falsified but whether research programmes are progressive or degenerating. A progressive programme generates novel predictions and new capabilities. A degenerating programme protects its core with ad hoc modifications that explain away failure without generating anything new. This is a more useful diagnostic for organisations than Popper’s simple falsification: the question is not whether any single initiative has failed, but whether the programme as a whole is producing novel capability or merely defending itself.

Feyerabend attacked the entire project. He argued that every methodological rule, including Popper’s, has been productively violated at some point in the history of science. The renegade team on the fourth floor that adopted AI tools without approval, violating the governance framework, may be producing better knowledge than the official programme. If they are, then Popper’s insistence on method is too restrictive. Sometimes progress comes from people who break the rules.

We will turn to Lakatos, Kuhn and Feyerabend in a future article.

9. The Synthesis: Popper for Transformation Leaders

Popper’s gift to the transformation leader is not a methodology. It is a discipline: the discipline of specifying, in advance, what failure looks like.

Every strategy should be expressible as a falsifiable hypothesis. Not “We will leverage AI to drive innovation and efficiency” but “We conjecture that AI-assisted specification writing, where business analysts use LLMs to translate requirements into structured acceptance criteria, will reduce the rate of change requests during development by 40% within the first two delivery cycles.” This is falsifiable. If the rate of change requests has not fallen by that margin after two delivery cycles, the conjecture is refuted. Not the team. Not the tool. The conjecture, which means the theory of value creation that sits beneath the strategy.
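One way to hold a team to such a conjecture is to write the failure condition down before any data arrives. The Python sketch below is a hedged illustration, not a prescribed method: the `Conjecture` class, the metric (change requests per delivery cycle), the baseline figure, and the reading of "within two cycles" as "the rate observed in the second cycle" are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Conjecture:
    statement: str
    baseline_rate: float       # assumed metric: change requests per cycle before the change
    required_reduction: float  # 0.40 means the rate must fall by 40%
    cycles_allowed: int        # delivery cycles before a verdict is due

    def threshold(self) -> float:
        """Highest observed rate that still counts as meeting the prediction."""
        return self.baseline_rate * (1 - self.required_reduction)

    def verdict(self, observed_rates: Sequence[float]) -> str:
        """Judge the conjecture against the rates observed in each cycle."""
        if len(observed_rates) < self.cycles_allowed:
            return "not yet tested"
        # Assumption: the prediction is judged on the final allowed cycle.
        final_rate = observed_rates[self.cycles_allowed - 1]
        if final_rate <= self.threshold():
            return "corroborated so far"
        return "refuted"


conjecture = Conjecture(
    statement="AI-assisted specification writing cuts change requests by 40%",
    baseline_rate=50.0,  # hypothetical baseline
    required_reduction=0.40,
    cycles_allowed=2,
)

print(conjecture.verdict([44.0]))        # only one cycle observed
print(conjecture.verdict([44.0, 41.0]))  # an 18% fall, short of the prediction
print(conjecture.verdict([38.0, 27.0]))  # a 46% fall, prediction survives
```

The verdicts available are deliberately limited to "not yet tested", "refuted", and "corroborated so far". There is no branch for "the team was not ready", which is the ad hoc rescue the conjecture is designed to exclude.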

Every retrospective should be a falsification exercise. What assumptions were tested? Which were refuted? What new conjectures do we now hold? The discipline is to ask “What did we learn is wrong?” before asking “What did we achieve?”

Organisational Prompt

Take the strategy for any significant change initiative, and subject it to the following Popperian test.

First, rewrite the strategy as a falsifiable hypothesis. Strip out the aspirational language. Replace “leverage,” “drive,” “enable,” and “transform” with specific predictions: what will change, by how much, measured how, by when. If you cannot rewrite it as a falsifiable hypothesis, the strategy is not a theory of value creation at all. It is a pseudo-strategy.

If this exercise produces discomfort, that discomfort is the point. Popper’s method is logically elegant and emotionally demanding. The organisation that can do it has a chance of learning. The organisation that cannot will continue to produce ad hoc explanations for why the plan is not working, until the plan is quietly replaced by a new plan that will be equally unfalsifiable, and the cycle will repeat.

Further Reading

Karl Popper, Conjectures and Refutations: The Growth of Scientific Knowledge - The clearest single statement of Popper’s method. Start here.

Karl Popper, The Logic of Scientific Discovery - The foundational text. Dense but indispensable.

Karl Popper, The Open Society and Its Enemies - The political consequence of Popper’s epistemology. Essential reading for anyone who wants to understand why testable, revisable institutions matter.

Bryan Magee, Popper - Still the best short introduction to Popper’s philosophy. A slim volume that will change how you think about knowledge.

Imre Lakatos and Alan Musgrave (eds.), Criticism and the Growth of Knowledge - The proceedings of the 1965 London colloquium where Popper, Kuhn, Lakatos, and Feyerabend debated. The origin of the arguments that shape the rest of the philosophy of science.

Disclaimer

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.