Ackoff: How to Stop Solving the Wrong Problem

Russell Ackoff Argues That You Cannot Decide What to Do Until You Stop Solving the Wrong Problem

Somewhere in your organisation right now, a team is using AI to do the wrong thing faster.

They do not know it is the wrong thing. It was the right thing last year. It might even have been the right thing last quarter. But the process they are accelerating was designed for a world that no longer exists, and the AI is making it more efficient with an enthusiasm that would impress Frederick Taylor. Nobody has asked whether the process itself should exist, because everybody is too busy measuring how much quicker it runs.

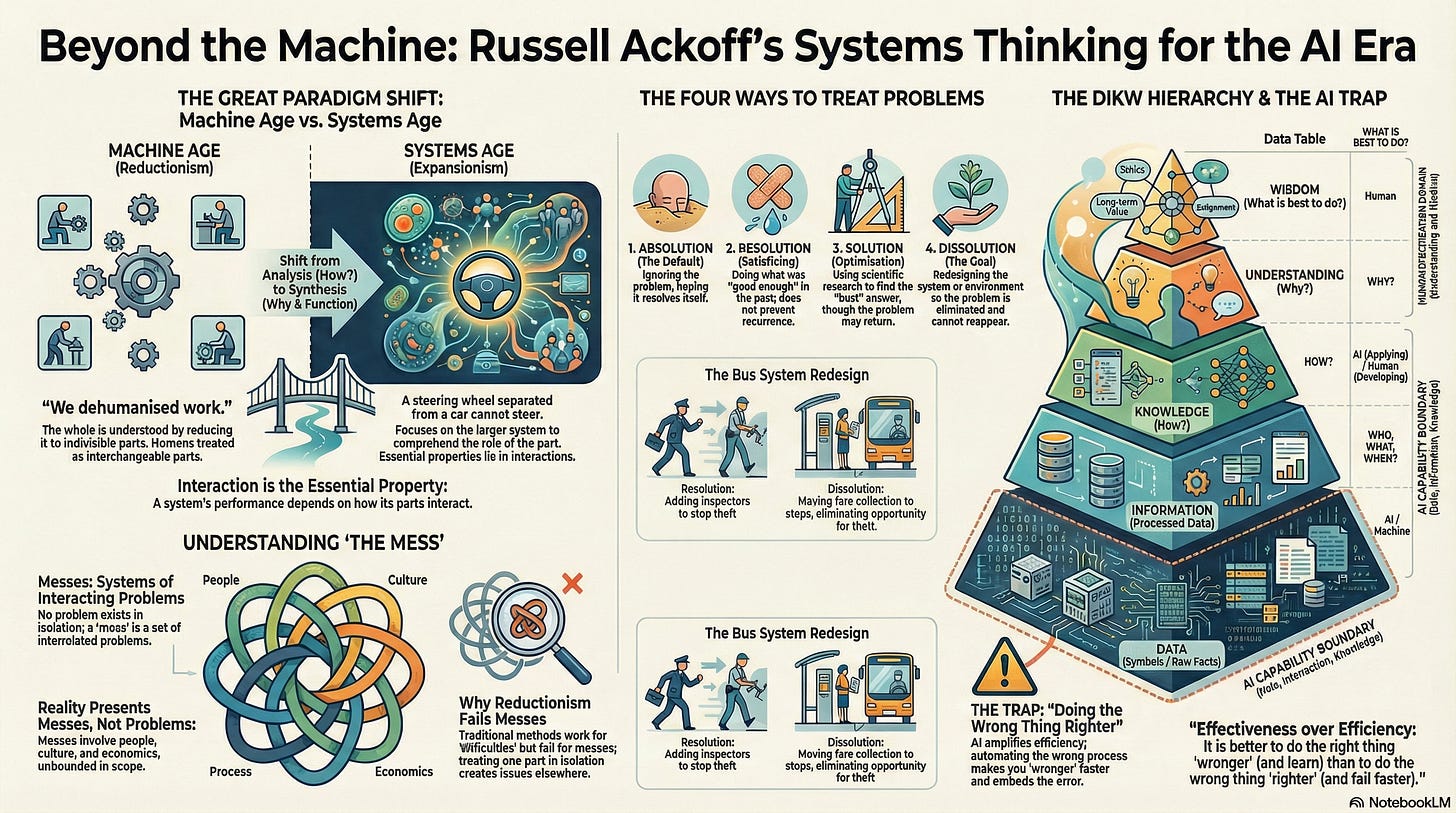

Russell Ackoff, a systems thinker whose five-decade career, much of it at the Wharton School, was spent dismantling the assumptions behind conventional management, had a phrase for this: doing the wrong thing righter. It is, he argued, the defining pathology of modern organisations. And AI has made it lethal, because doing the wrong thing righter at machine speed is how you automate your own obsolescence before anyone notices.

Ackoff’s work spans operations research, organisational theory, and management philosophy. His central contribution to the clarity problem is a set of distinctions so simple they are easy to dismiss and so important they explain why most transformation programmes produce motion without progress. If your AI strategy feels busy but directionless, Ackoff explains why, and he offers a method for escaping the trap that is, characteristically, the opposite of what most consultants would recommend.

1. Messes Are Not Problems

Ackoff coined the term “mess” to describe something that every transformation leader recognises but that no planning methodology adequately addresses: a system of interacting problems where the interactions matter more than the individual components.

A problem can be isolated, analysed, and solved. A mess cannot, because taking it apart destroys the thing you need to understand. The technology question (which models, which infrastructure) interacts with the skills question (who can use it), which interacts with the governance question (who decides), which interacts with the identity question (who am I now that AI does what I used to do), which interacts with the culture question (what gets rewarded), which interacts with the structural question (how teams are organised). Pull any one of these out and “solve” it independently, and you create new problems in the others.

This is not a metaphor. It is a structural claim. Most transformation programmes are a mess in Ackoff’s precise sense. Most organisations treat them as a collection of independent problems: run a skills programme, buy a platform, set up a governance committee, write a strategy document. Each is “solved” independently. The mess persists because nobody has addressed the interactions.
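One way to see the structural claim is to treat the mess as a graph. The sketch below is a minimal illustration in Python, not anything Ackoff wrote: the six questions come from the paragraph above, while the simple chain of links between them and the names `INTERACTIONS`, `neighbours`, and `ripple` are assumptions made for the example.

```python
from collections import deque

# The six questions named in the text above.
QUESTIONS = ["technology", "skills", "governance", "identity", "culture", "structure"]

# Assumed links: each question interacts with the next one in the order
# the text lists them. A real mess would have many more links than this.
INTERACTIONS = {
    ("technology", "skills"),
    ("skills", "governance"),
    ("governance", "identity"),
    ("identity", "culture"),
    ("culture", "structure"),
}


def neighbours(question):
    """Return the questions that interact directly with the given one."""
    linked = set()
    for a, b in INTERACTIONS:
        if a == question:
            linked.add(b)
        elif b == question:
            linked.add(a)
    return linked


def ripple(start):
    """Return every other question reachable from `start` through interactions."""
    seen = {start}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in neighbours(current):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)
    return sorted(seen)


if __name__ == "__main__":
    # Show how far a change to each single question can travel.
    for question in QUESTIONS:
        print(question, "->", ripple(question))
```

Even in this deliberately sparse chain, a change to any one question can reach all five of the others. Treating each as an independent problem ignores exactly the links that make it a mess.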

Eric Evans would recognise this at the domain level; his bounded contexts and context maps are tools for making the mess visible within software systems. Heifetz would recognise it at the leadership level; his adaptive challenges are messes that cannot be solved by existing expertise applied to isolated components. Ralph Stacey would add that the interactions are not just complicated but complex; they produce emergent patterns that no amount of upfront analysis can predict.

Ackoff’s contribution is the bluntest version of the diagnosis: reality presents messes, not problems, and the first step toward clarity is admitting that you are in one.

2. Four Ways to Treat a Problem (and Why Three of Them Fail)

Ackoff distinguished four responses to problems, and the distinction is the sharpest diagnostic I know for what is actually happening inside a transformation programme.

Absolution is ignoring the problem and hoping it resolves itself. This is more common than anyone admits. The AI pilot that nobody cancelled but nobody funded past quarter two? Absolution.

Resolution is reaching into the past for something that worked before. It uses experience and common sense to produce a result that is “good enough.” Most AI adoption programmes start here: what did we do for the last technology wave? Stand up a centre of excellence, run some training, publish a playbook. Resolution produces familiarity, not transformation.

Solution is using research and analysis to find the optimal answer. Hire the consultants, commission the benchmarking study, build the business case. Solution is more rigorous than resolution, but both share a fatal assumption: that the problem has been correctly identified. If it has not, the optimal solution to the wrong problem is still wrong.

Dissolution is redesigning the system so that the problem cannot recur. You do not fix the error within existing assumptions; you change the assumptions. Ackoff considered this the highest form of problem treatment, and the rarest. Argyris’s double-loop learning is dissolution applied to cognition; you do not correct the error within the governing variables, you change the governing variables themselves. Normann’s reframing is dissolution applied to mental models; you do not solve the problem within the existing map, you redraw the map so the problem disappears.

Consider a concrete example. Your development teams are producing AI-generated code that requires extensive review because the specifications are vague. Resolution: add more reviewers. Solution: deploy AI-assisted code review tools. Dissolution: redesign the development process so that specifications are precise enough that generated code does not need extensive review. The first two responses accept the existing system and try to manage its consequences. The third changes the system so the consequences do not arise.

The specification-driven development approach this series has been building toward is, in Ackoff’s terms, a dissolution strategy. It does not solve the problem of bad AI output. It helps to eliminate the conditions that produce it.

3. Doing the Wrong Thing Righter

This is Ackoff’s most famous line, and it deserves to be quoted in full:

“All of our social problems arise out of doing the wrong thing righter. The more efficient you are at doing the wrong thing, the wronger you become. It is much better to do the right thing wronger than the wrong thing righter. If you do the right thing wrong and correct it, you get better.”

The distinction is between effectiveness (doing the right thing) and efficiency (doing the thing right). Most organisations focus on efficiency. AI supercharges the trap.

If your customer service process is built around the wrong assumptions about what customers actually need, an AI chatbot will deliver the wrong service experience more efficiently. If your software development process produces specifications that miss the domain, AI code generation will produce the wrong software faster. If your decision-making process optimises against the wrong metrics, AI analytics will optimise the wrong outcomes with greater precision. In every case, the AI is performing brilliantly. The problem is upstream.

Beer’s POSIWID principle (“the purpose of a system is what it does”) is the diagnostic that reveals whether you are in this trap. If the actual output of your AI programme differs from its stated purpose, you are doing the wrong thing righter. Drucker would add the prior question: have you asked “what is our business?” recently, or are you assuming the answer has not changed? Christensen demonstrated with mechanical precision what happens to organisations that never ask: they rationally optimise their way into irrelevance.

4. Idealised Design: The Question Nobody Asks

Ackoff’s most practical tool for getting to clarity is idealised design, and it begins with a thought experiment that most leadership teams find simultaneously liberating and terrifying.

Assume that your organisation was destroyed last night. The environment, the customers, the market, the technology, the talent pool: all still exist. But the organisation itself is gone. Now design the organisation you would create to replace it, subject to only two constraints: it must be technologically feasible (no science fiction) and operationally viable (it could actually work). One additional requirement: the design must incorporate the ability to learn and adapt rapidly.

The concept emerged at a 1951 Bell Laboratories conference when a vice president opened the session by saying: “Gentlemen, the telephone system of the United States was destroyed last night.” That hypothetical destruction freed participants to design what they actually wanted rather than incrementally improving what existed. The distinction matters enormously. Incremental improvement starts from the current state and asks “what can we change?” Idealised design starts from a blank page and asks “what would we build?” The first is constrained by everything the organisation already is. The second is constrained only by physics and viability.

For AI transformation, the question becomes: if this organisation were rebuilt from scratch today, with full access to current AI capabilities, what would it look like? This is not the question most AI strategies ask. Most ask “where can we add AI to our existing processes?” That is preactive planning at best: predicting where AI will help and preparing for it. Ackoff would say it is the wrong question, because it preserves the existing structure and merely decorates it with new technology.

Mintzberg would rightly challenge idealised design as too deliberate; real strategy, he showed, emerges from accumulated action rather than implemented plans. The reconciliation is that idealised design is not a blueprint. It is a direction. The design is continuously revised through experience. What Ackoff provides is the destination that gives emergent action its coherence; without it, emergence is just drift.

5. From Data to Wisdom: Where AI Stops and Humans Must Start

Ackoff’s 1989 paper “From Data to Wisdom” formalised a hierarchy that has become so widely cited it has lost its bite. But for AI transformation, his original five-level version (not the four-level version most people know) is the clearest framework available for understanding where the human specification work sits.

Data is symbols. Information answers who, what, where, when, how many. Knowledge is know-how; it answers “how.” Understanding answers “why.” Wisdom is evaluated understanding; it answers “what is best to do.” Ackoff believed wisdom would probably never be generated by machines, and while that judgment was made decades before large language models, his structural distinction holds. AI processes data into information at superhuman speed. It applies knowledge patterns with increasing sophistication. But the understanding of why a particular specification matters, and the wisdom to judge what is the right thing to build, remain human capacities that no model release has displaced.

This reframes the specification gap. The human must supply the understanding and wisdom (the “why” and the “what is best”) in a form precise enough to constrain the machine’s knowledge-level processing. That is what a specification is: the bridge between human wisdom and machine capability. Without it, the machine operates at the knowledge level, generating technically competent output that may have nothing to do with what the organisation actually needs.

The organisations that get clarity on what to do with AI will be the ones that stop solving problems and start dissolving them. They will stop asking “where can we add AI?” and start asking “what would we build if we could start again?” They will stop optimising the wrong thing and start, at last, doing the right thing badly enough to learn.

An Organisational Prompt

(An Organisational Prompt is something you can do now, in your organisation, to put the ideas in this article to work.)

Ask the Destruction Question

In your next leadership meeting, try this: “If our department were dissolved overnight and we had to rebuild it from scratch with today’s technology, what would we build?” Give people ten minutes to write their answers privately before anyone speaks. Compare the answers with what you are actually doing. The gap is your mess.

Further Reading

Russell Ackoff

Idealized Design: How to Dissolve Tomorrow’s Crisis... Today - The practical guide to idealised design, with worked examples.

Creating the Corporate Future: Plan or Be Planned For - Interactive planning applied to corporate strategy. Where the four orientations to planning are developed.

The Art of Problem Solving: Accompanied by Ackoff’s Fables - The most accessible introduction to Ackoff’s thinking, illustrated with case studies.

Ackoff’s Best: His Classic Writings on Management - A curated collection of his most important papers.

A Brief Guide to Interactive Planning and Idealized Design - Concise overview of the methodology. Freely available PDF.

From Mechanistic to Social Systemic Thinking - The analysis vs synthesis argument in full. Freely accessible.

A Lifetime of Systems Thinking - Career retrospective. Freely accessible.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.