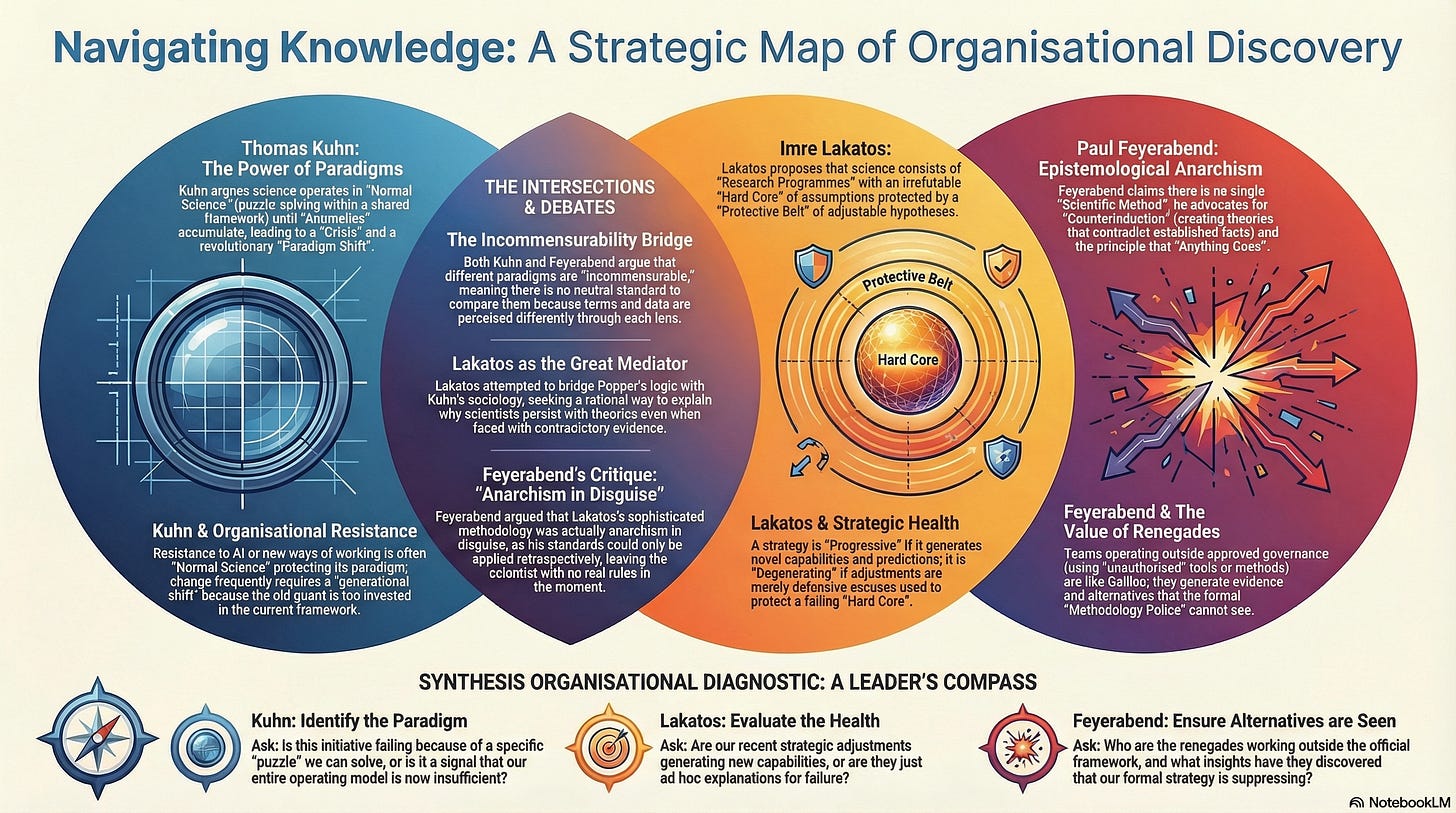

Organisational Lessons from Scientific Discovery

Why Kuhn, Lakatos, and Feyerabend Explain How Organisations Actually Adopt What They Have Learnt.

Your organisation has a team, maybe on the fourth floor, that has been quietly using Claude to rewrite their entire app, without approval, without the approved vendor. They are six months ahead of the official programme. The team is not sure whether to be proud or frightened.

This is not a compliance problem. It is a discovery problem. The official programme and the renegade team are both trying to learn what AI can do for the organisation. They are using fundamentally different methods. And the question of which one is producing better knowledge, and why, is not a question that governance frameworks are equipped to answer. It is a question that three philosophers of science spent the second half of the twentieth century arguing about, and their argument has more to say about your AI transformation than any maturity model on the market.

Thomas Kuhn, Imre Lakatos, and Paul Feyerabend are best understood as a conversation, not as three independent positions. Kuhn published The Structure of Scientific Revolutions in 1962, arguing that science advances not by steady accumulation but through revolutionary ruptures between incommensurable paradigms. Lakatos responded in 1970 with a framework for evaluating whether a research programme is genuinely progressive or merely defending itself. Feyerabend published Against Method in 1975, arguing that every methodological rule, however plausible, has been productively violated at some point in the history of science.

The three of them, together with Karl Popper (discussed previously), who chaired the pivotal 1965 London colloquium at which much of this was debated, produced the most penetrating account available of how communities of practitioners actually discover new things, adopt new practices, and resist the evidence that their current approach has stopped working.

Compressing three deeply original thinkers into a single article necessarily simplifies positions that each spent decades refining. Kuhn would insist that his concept of incommensurability is more nuanced than “paradigm shift” has become in popular usage; he later preferred the term “exemplar” and tried repeatedly to clarify what he actually meant. Lakatos would protest that his methodology of scientific research programmes deserves independent treatment; it is, after all, a sophisticated attempt to rescue rationality from Kuhn’s apparent irrationalism. Feyerabend would probably object to being included in any series that claimed to offer methodological guidance at all; he spent his career attacking precisely that enterprise. What follows treats the three as a conversation about how discovery actually works, and asks what that conversation means for organisations trying to decide what to do next.

1. Kuhn: Why Your Organisation Resists the Evidence That Its Paradigm Has Failed

Thomas Samuel Kuhn (1922-1996) was a physicist turned historian of science whose single book changed the vocabulary of intellectual life. Born in Cincinnati, he completed his PhD in physics at Harvard in 1949, then experienced a revelatory moment while teaching Aristotle’s physics to non-scientists: he realised that Aristotle was not simply wrong, but was operating within a different framework of understanding. This insight, that scientific communities work within shared frameworks that determine what counts as a good question, a good method, and a good answer, became the foundation of The Structure of Scientific Revolutions.

Kuhn’s model is deceptively simple. Science does not progress by the steady accumulation of facts. It proceeds through a cycle: long periods of normal science, punctuated by revolutionary ruptures that replace one paradigm with another.

A paradigm, properly understood, is not just a theory. It is a constellation of shared commitments: theoretical assumptions, accepted methods, standard instruments, exemplary problem-solutions, and criteria for what counts as a legitimate question. Within a paradigm, scientists engage in puzzle-solving: extending the framework, filling in details, resolving small discrepancies. Normal science is conservative, cumulative, and enormously productive. It is productive precisely because it is focused: scientists are not debating fundamentals; they are solving specific problems with shared tools.

Anomalies are puzzles that resist solution. Initially, they are absorbed: explained away, delegated to specialists, or filed as “known issues” for future attention. This is not stupidity. It is rational behaviour within a paradigm, because most anomalies are eventually resolved within the existing framework. The scientist who abandons a productive paradigm at the first counter-instance is not bold; they are flighty. The paradigm has earned its loyalty through decades of accumulated success.

Crisis occurs when anomalies accumulate to a point where confidence in the paradigm erodes. The established practitioners may continue to defend the framework, but younger scientists or outsiders begin taking the anomalies seriously. Revolution occurs when a new paradigm emerges that resolves the persistent problems. The shift is not purely rational: it involves social, psychological, and generational factors. Different paradigms may be incommensurable: there is no neutral, paradigm-independent standard by which to compare them. Scientists in different paradigms may literally interpret the same data differently, because the paradigm determines what the data means.

After the revolution, science rewrites its own history. Textbooks present the transition as inevitable progress, smoothing the rupture into a narrative of cumulative development. The resistance, the political battles, the careers that were destroyed and the careers that were made are all erased. The next revolution will face the same dynamics, but the institutional memory of how the last one actually happened will have been lost.

The organisational parallels are immediate.

Normal science is what Argyris calls single-loop learning: operating within existing assumptions, solving puzzles defined by the current framework. Most organisational activity is normal science, and it should be. The team that processes invoices, manages the deployment pipeline, or handles customer support is doing productive puzzle-solving within an established paradigm. This is not a failure. It is how organisations get things done.

The problem begins when anomalies appear. In AI transformation, the anomalies are everywhere: use cases that do not fit the existing operating model, value propositions that do not materialise under the current governance structure, skills gaps that training does not close, teams that produce better results by ignoring the approved methodology than by following it. The organisational response to these anomalies is exactly what Kuhn described for science. They are absorbed. “The tool wasn’t the right one.” “The team wasn’t ready.” “We moved too fast.” “We need more governance.” Each explanation protects the paradigm from the implication that the paradigm itself might be the problem.

Dweck illuminates the individual response. In a growth mindset, the struggle of learning new tools and methods is experienced as the normal sensation of learning. In a fixed mindset, the same struggle is evidence of inadequacy. The engineers most resistant to AI-augmented development are often those whose identity and career capital are most deeply invested in the current paradigm. This is not irrationality. It is the entirely predictable response of a person whose sense of professional competence is being challenged by a paradigm shift they did not ask for and cannot yet see the other side of.

And Weick explains what happens when the paradigm actually breaks down. His analysis of the Mann Gulch disaster is Kuhn’s crisis played out in twelve minutes: when the smokejumpers’ framework collapsed, when a routine fire became something they could not interpret, they could not make sense of their foreman’s escape fire because the identity and role structures that made interpretation possible had dissolved. They dropped into individual flight because the social structure that held them together as a team had collapsed. This is what a cosmology episode looks like, and it is what the early stages of a genuine paradigm shift feel like from the inside: not exciting, but terrifying.

Kuhn’s deepest insight for this series is about the generational dimension. The people who built the current paradigm have the deepest investment in it. They are the least likely to see anomalies as signals of paradigmatic failure rather than local problems. Max Planck is often credited with the observation that science progresses one funeral at a time; Kuhn would not have been so blunt, but his analysis supports it.

2. Lakatos: How to Tell Whether Your AI Programme Is Progressing or Dying

Imre Lakatos (1922-1974) had one of the most extraordinary lives of any philosopher in the twentieth century. Born Imre Lipschitz in Debrecen, Hungary, he survived the Holocaust by adopting false names; his mother and grandmother perished in Auschwitz. After the war, he became a high-ranking official in the Hungarian Ministry of Education, then was imprisoned during the Stalinist purges from 1950 to 1953. He fled Hungary after the 1956 Soviet crackdown, completed a PhD at Cambridge, and joined the London School of Economics, where he became Popper’s most important student and eventually his most sophisticated critic. He died suddenly of a heart attack in 1974, at fifty-one. The planned companion volume to Feyerabend’s Against Method, which Lakatos was to write as “For Method,” was never completed.

Lakatos saw two unacceptable positions in the philosophy of science, and he saw them as having direct political implications. Popper’s naive falsificationism demanded that scientists discard a theory at the first counter-instance, which bore no resemblance to how science actually worked and would have destroyed most of its greatest achievements. Kuhn’s account, in which paradigm shifts are determined by social psychology rather than logic, was worse: if even in science there is no rational basis for choosing between theories, Lakatos argued, then in politics “truth lies in power.” He called Kuhn’s position “mob psychology” and saw it as intellectually dangerous. Having survived both Nazism and Stalinism, Lakatos was not inclined to be relaxed about the relationship between rationality and power.

His solution was the methodology of scientific research programmes. The unit of scientific evaluation, Lakatos argued, is neither the individual theory (Popper) nor the monolithic paradigm (Kuhn), but the research programme: a sequence of theories that share a common hard core.

The hard core is the set of fundamental assumptions that define the programme. Scientists treat these as irrefutable by methodological decision; they do not abandon them in the face of counter-evidence. Newton’s three laws of motion formed the hard core of Newtonian physics. The hard core of Agile is something like: iterative delivery, self-organising teams, customer collaboration, and responding to change over following a plan. The hard core of some enterprises’ AI strategy, if you excavate it, is something like: “AI will automate existing processes and reduce headcount costs.”

The protective belt is everything else: auxiliary hypotheses, implementation details, specific practices, tooling choices, team structures, governance frameworks. When a prediction fails, the scientist adjusts the protective belt, not the hard core. This is rational behaviour, not intellectual cowardice. Most failures are in the protective belt: the implementation was wrong, the timing was off, the team lacked the right skills, the measurement was inadequate. Adjusting the protective belt while preserving the hard core is how research programmes develop.

The question is whether the adjustments are progressive or degenerating.

A progressive research programme is one where successive adjustments generate novel predictions that are at least occasionally confirmed. Each iteration opens new territory. The programme is learning: not just defending itself, but discovering things it did not know before. A degenerating programme is one where successive adjustments serve only to protect the hard core from refutation, without predicting anything new. The modifications are purely defensive. The programme explains what has already happened but generates no novel capability.

This distinction is the most practically useful concept in this entire article, and it maps directly onto how organisations should evaluate their AI programmes. Is this programme progressive or degenerating?

The answer depends on a single question: are the adjustments generating novel capability, or are they merely explaining each successive failure?

If the specification template produced genuine clarity about what the system should do, if the data quality initiative revealed structural problems in how the organisation manages its information, if the knowledge capture exercise created shared understanding of business rules that had previously been tacit, then the programme is progressive. The failures were productive. Each adjustment opened new territory. The organisation now knows things it did not know before, and those things are useful beyond the original AI testing initiative.

If the specification template was filled in mechanically without improving actual clarity, if the data quality initiative produced a report that nobody acted on, if the knowledge capture exercise generated documents that live in a SharePoint graveyard, then the programme is degenerating. Each adjustment explained the last failure without generating anything new. The hard core (“AI automates testing”) was protected from refutation, but the organisation learnt nothing.

The warning sign of a degenerating programme is unmistakable once you know what to look for: the primary output of the programme is strategic narrative rather than deployed capability. Decks, frameworks, maturity models, roadmaps, governance structures, risk assessments, and vendor evaluations proliferate. The steering committee meetings are full of activity reports. But the actual novel capability, the thing the organisation can do now that it could not do before, is absent.

Lakatos also explains why “fail fast” is too simple a prescription. Sometimes a programme is in what Lakatos called a “bad patch”: a period where no empirical progress is visible, but where the theoretical development, the deepening of understanding, the refinement of the model, is setting the stage for a breakthrough. Abandoning a programme at the first difficulty is Popperian naivety; the programme may be doing important work that has not yet yielded visible results. The art, and it is an art rather than a science, is distinguishing a bad patch from terminal degeneration. Lakatos provided no algorithm for this. He acknowledged that the judgement is retrospective and fallible: you can only know with confidence whether a programme was progressive or degenerating after the fact. In real time, the leader must make a judgement call.

This connects directly to Heifetz. A technical challenge, in Heifetz’s terms, is a problem in the protective belt: the implementation needs adjusting, but the fundamental direction is sound. An adaptive challenge requires changing the hard core: the assumptions that define the programme are no longer adequate. The most common leadership failure that Heifetz diagnoses, treating adaptive challenges as technical problems, is in Lakatosian terms the error of endlessly adjusting the protective belt when the hard core itself needs to change. The leader who responds to repeated AI pilot failures by commissioning yet another governance review is making protective belt adjustments to a programme whose hard core may be wrong.

3. Feyerabend: Why the Renegades Are Your Best Source of Evidence

Paul Karl Feyerabend (1924-1994) is the most misunderstood philosopher in this article, and possibly the most important. Born in Vienna, he served in the German army during the Second World War and was wounded on the Eastern Front in 1945 by three bullets, one of which struck his spine. He used a walking stick and lived in pain for the rest of his life. He studied physics and philosophy in Vienna and initially planned to study with Wittgenstein at Cambridge, but Wittgenstein died before Feyerabend arrived. He studied instead with Popper at the London School of Economics and began as a committed Popperian falsificationist, before spending the next two decades dismantling the position from within.

His career was spent mostly at UC Berkeley, where he was a legendary lecturer: provocative, funny, and deeply serious beneath the provocation. Against Method (1975) made him the most controversial philosopher of science of his generation. He was labelled “the worst enemy of science,” a characterisation he both resented and cultivated. His close friendship with Lakatos, documented in their extraordinary correspondence (published posthumously as For and Against Method), reveals a thinker far more nuanced than the popular image of the “anything goes” anarchist. He later said of Against Method: “I often wished I had never written that fucking book.” He felt his irony and playfulness were systematically misread as relativism.

Feyerabend’s central argument is not that science has no method, or that all methods are equally good, or that evidence does not matter. His argument is that there is no single scientific method whose rules are universally valid. Every methodological rule, however plausible, has been productively violated at some point in the history of science. The conclusion is not that rules are useless, but that the imposition of fixed methodological rules constrains discovery rather than enabling it. The German title of the book, Wider den Methodenzwang, translates as “Against the Forced Constraint of Method.” The target is not method itself but methodological tyranny.

His primary case study is Galileo’s defence of heliocentrism. Galileo did not follow the accepted methodological rules of his time. He used propaganda and rhetoric alongside evidence. He introduced the telescope as an instrument of observation when the telescope’s reliability was itself unproven. He advanced a theory that contradicted the well-established evidence of the senses: the earth does not appear to move. By the methodological standards of his day, Galileo was doing bad science. By the standards of history, he was doing the most important science of his century.

Feyerabend draws two principles from this and similar cases.

First, counterinduction: deliberately developing hypotheses that contradict well-confirmed theories and well-established results. The consistency condition, which demands that new ideas agree with accepted theories, is unreasonable because it preserves the older theory, not the better theory. It protects incumbency, not truth. Hypotheses that contradict established thinking generate evidence that no amount of work within the existing framework can produce. You cannot discover what is wrong with the paradigm by working within it.

Second, proliferation: maintaining multiple competing approaches rather than converging on a single methodology. Competing theories illuminate each other’s weaknesses in ways that no single theory, however well-tested, can reveal about itself. A monopoly of method is as dangerous as a monopoly of power.

This is not relativism. Feyerabend is not saying all methods are equally good. He is saying that you cannot know in advance which method will produce the breakthrough, and that the premature elimination of alternatives is the most reliable way to prevent discovery. The proliferation of approaches creates the conditions for informed comparison. The governance framework that insists on a single approved methodology eliminates those conditions.

The organisational implications cut deep, and they cut in two directions simultaneously.

The first direction extends Tom Peters’ argument against bureaucracy. The AI governance framework, the Centre of Excellence, the approved vendor list, the mandated methodology: these are not neutral quality controls. They are the organisational equivalent of Feyerabend’s methodological constraint. They determine what approaches are permitted, what evidence is recognised, and which voices are heard. They protect the incumbent paradigm and suppress alternatives. The team on the fourth floor that is using Claude without approval is doing what Galileo did with his telescope: generating evidence that the formal process cannot produce, using tools whose value the establishment has not yet recognised, and getting results that embarrass the official programme.

Stacey would agree entirely. Real organisational learning happens in the informal, the unplanned, the conversations that governance does not capture. The teams quietly using AI outside the approved framework are not insubordinate. They are the emergent strategy trying to tell you where the value is.

The second direction provides the essential corrective. Feyerabend’s proliferation must eventually produce evidence. “Anything goes” at the level of exploration does not mean “anything goes” at the level of evaluation. The renegade team’s results must be assessed. The alternative approaches must be compared. The organisation cannot remain permanently in a state of methodological anarchy; at some point, it must converge. The question is when, and on what basis, and Feyerabend provides less guidance on this than the practitioner needs.

This is where this series’ emphasis on getting to clarity and specification-driven development provides the discipline that Feyerabend’s anarchism requires but does not supply. Popper’s insistence on falsifiable conjectures, Drucker’s insistence on defining the task, Evans’ insistence on ubiquitous language within bounded contexts: these are the mechanisms by which exploratory freedom is converted into evaluable results. The organisation that gives teams freedom to experiment must also insist that experiments produce testable specifications, that results are measured against those specifications, and that the comparison between approaches is honest. Freedom without evaluation is waste. Evaluation without freedom is stagnation. The synthesis requires both.

4. The Integrated Diagnostic: How the Three Questions Work Together

Each thinker provides a different lens for the same organisational challenge, and the three lenses compose into a single diagnostic.

Kuhn asks: what is your paradigm, and is it in crisis? The paradigm is the set of assumptions, methods, and standards that govern how your organisation currently operates. Most of the time, normal operations within the paradigm are productive and should not be disrupted. The question is whether the anomalies accumulating around your AI transformation, the failures, the surprises, the results that do not match the predictions, are local problems within the paradigm or signals that the paradigm itself has stopped working. If every AI pilot that succeeds does so by violating the approved methodology, the anomaly is not in the pilot. It is in the methodology.

Lakatos asks: is your programme progressive or degenerating? Identify the hard core of your AI strategy: the assumptions you treat as irrefutable. Then examine the adjustments you have made in the last twelve months. For each adjustment, ask the diagnostic question: did this generate a novel capability, or did it merely explain the previous failure? If the adjustments are opening new territory, the programme is progressive, and persistence is rational. If the adjustments are purely defensive, the programme is degenerating, and the rational response is not to adjust the protective belt again but to question whether the hard core is wrong.

Feyerabend asks: are you allowing yourself to see the alternatives? In every organisation, someone is solving the problem differently. They may be using unapproved tools, ignoring the governance framework, or pursuing an approach that the strategy deck does not mention. The question is whether the organisation treats these people as threats or as sources of information. If the response to unsanctioned success is governance enforcement, the organisation is practising methodological constraint: protecting the official programme from the evidence that would reveal its limitations. If the response is curiosity and investigation, the organisation is practising the pluralism that discovery requires.

The three questions are not independent. They are three perspectives on a single phenomenon: the relationship between an established way of working and the evidence that it needs to change. Kuhn explains why the established way is so resistant to evidence. Lakatos provides criteria for evaluating whether the resistance is rational persistence or degenerate defence. Feyerabend insists that the evidence itself is being shaped by which methods the organisation permits.

Together, they reveal the deepest pattern in transformation failure. The organisation launches a programme with a hard core that reflects the current paradigm. Anomalies appear. The protective belt is adjusted. The adjustments explain each failure without generating novel capability. The programme degenerates. Meanwhile, the renegades operating outside the programme are generating genuine evidence, which the governance framework prevents the programme from seeing. The programme responds to its own degeneration by producing more governance, more methodology, more strategic narrative: exactly the methodological constraint that Feyerabend identifies as the primary obstacle to discovery. The organisation fails not because it lacked a method, but because it refused to examine what its method was preventing it from learning.

Kahneman would add the cognitive dimension: the leader’s System 1 generates a coherent narrative that makes the degeneration invisible. The programme feels productive because it is producing things: reports, frameworks, governance structures. Confidence feels like evidence. The losses entailed by admitting that the hard core is wrong, the sunk costs, the careers invested, the reputations attached, loom larger than the gains from changing direction. This is loss aversion in its purest institutional form, and it is why Heifetz insists that the most important thing a leader can do is name the loss: to say, clearly and compassionately, that the current approach is not working, that changing direction will cost something real, and that the cost of not changing is higher.

5. The Generational Problem and the Question of Adoption

There is one more dimension of this argument that organisations routinely ignore, and it may be the most consequential.

Kuhn observed that paradigm shifts often require a generational transition. The practitioners whose careers were built within the old paradigm frequently do not convert to the new one. They retire, they are sidelined, or they continue to practise in the old mode within diminishing enclaves. The new paradigm is adopted primarily by younger practitioners who were trained within it, or by outsiders who have no investment in the old one.

This observation is profoundly uncomfortable for anyone leading organisational transformation, because it implies that some of the people you are asking to change will not change. Not because they are stupid, lazy, or resistant. Because the paradigm is not just something they believe; it is the structure of their professional identity, their sense of competence, their understanding of what makes them valuable. We have discussed this at length elsewhere in the series, through Giddens, Bourdieu, and others.

Seligman’s learned helplessness adds the historical dimension. In organisations with a long history of failed change programmes, the veteran practitioners have learnt through repeated experience that resistance outlasts the initiative. They have survived Agile. They have survived DevOps. They have survived cloud transformation. Each time, the language changed but the structures persisted. Their reasonable expectation is that AI transformation will follow the same pattern: absorb the tools, preserve the structures, capture the terminology, and wait for the next initiative. The learned helplessness is rational. It is based on accurate observation of institutional history. And it is the single most powerful predictor of transformation failure.

The Feyerabendian response to this problem is not to force the conversion, but to ensure that the alternative approaches are visible and productive. The team on the fourth floor does not need to convince the established practitioners that AI-augmented development is superior. They need to produce results that make the superiority visible.

Bandura’s research on self-efficacy confirms this: the most powerful source of belief in one’s own capabilities is mastery experience, actually doing the thing and succeeding. A live demonstration where a sceptical engineer watches AI generate working code from a well-written specification is a mastery experience. It bypasses intellectual debate entirely. It creates the possibility of conversion not through argument but through evidence.

But the evidence must be allowed to exist. And this returns us to Feyerabend’s central point: the governance framework that prevents the team on the fourth floor from producing their results is not protecting quality. It is preventing the evidence that would force the paradigm to change.

(An Organisational Prompt is something you can do now...)

Organisational Prompt

Kuhn, Lakatos, and Feyerabend each provide a different diagnostic lens for the same challenge: how do you know whether your transformation is actually working, or whether you are protecting a degenerating programme with increasingly elaborate defensive adjustments? Lakatos’s test for progressive versus degenerating programmes is a helpful place to start.

List the last three adjustments made to the initiative: changes in scope, tooling, team, governance, vendor, or approach. For each one, ask: did this adjustment generate a new capability we did not have before, or did it merely explain the previous failure? Then ask of the set as a whole: is the programme degenerating?
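
For teams that prefer to keep this kind of record in code, the prompt can be sketched as a few lines of Python. This is an illustration only, not a scoring method that Lakatos proposed: the `Adjustment` type, the `lakatos_reading` function, and the rule that a single novel capability is enough for a "possibly progressive" reading are all assumptions made for the example. The sample adjustments borrow the specification template, data quality initiative, and knowledge capture exercise mentioned earlier, with made-up answers.

```python
from dataclasses import dataclass


@dataclass
class Adjustment:
    """One change to the initiative (hypothetical record type)."""
    description: str
    # True if the adjustment left the organisation able to do something new;
    # False if it only explained the previous failure.
    novel_capability: bool


def lakatos_reading(adjustments: list[Adjustment]) -> str:
    """Give a first, fallible reading of a programme from its adjustments.

    Assumption for this sketch: at least one novel capability counts as
    "possibly progressive"; none at all counts as "possibly degenerating".
    """
    if not adjustments:
        return "no evidence"
    novel = sum(a.novel_capability for a in adjustments)
    if novel == 0:
        return "possibly degenerating"
    return "possibly progressive"


# Illustrative answers only; fill in your own last three adjustments.
recent = [
    Adjustment("Introduced a specification template", novel_capability=True),
    Adjustment("Ran a data quality initiative", novel_capability=False),
    Adjustment("Ran a knowledge capture exercise", novel_capability=False),
]

print(lakatos_reading(recent))
```

The hedged labels are deliberate. As noted above, Lakatos offered no algorithm for telling a bad patch from terminal degeneration, so the output is a starting point for a conversation, and the honest work lies in deciding each `novel_capability` answer.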

Further Reading

Thomas Kuhn: The Structure of Scientific Revolutions - The book that changed how we think about how science changes. Read the Postscript to the second edition as carefully as the text itself; it is where Kuhn clarifies what he actually meant by “paradigm,” and it is considerably more nuanced than the popular usage suggests.

Thomas Kuhn: The Essential Tension - The title essay argues that science requires a productive tension between tradition and innovation. Read it alongside Mintzberg on the relationship between craft and analysis, and alongside the series’ recurring argument that neither permanent revolution nor permanent normal science is adequate.

Imre Lakatos and Alan Musgrave (eds.): Criticism and the Growth of Knowledge - The proceedings of the 1965 London colloquium. Contains Kuhn’s statement, Lakatos’s response, Feyerabend’s critique, and Kuhn’s reply. This is the volume where the debate actually happened, and reading it is like watching four of the twentieth century’s most formidable minds argue about the foundations of rational inquiry. Essential for anyone who wants to understand the positions rather than the caricatures.

Imre Lakatos: The Methodology of Scientific Research Programmes: Philosophical Papers Volume 1 - Contains “Falsification and the Methodology of Scientific Research Programmes,” the essay that introduced the hard core, the protective belt, and the progressive/degenerating distinction. Dense but rewarding, and the most practically useful framework in the philosophy of science for anyone evaluating a strategic programme.

Paul Feyerabend: Against Method - Read the Galileo chapters closely and the rest for the argument’s texture. Read the German title, Wider den Methodenzwang (“Against the Forced Constraint of Method”), as a corrective to the popular misreading. Feyerabend is not against method. He is against the imposition of a single method on everyone, and his case is stronger than most of his critics have acknowledged.

Matteo Motterlini (ed.): For and Against Method: The Lakatos-Feyerabend Correspondence - The letters between Lakatos and Feyerabend reveal a friendship of extraordinary intellectual intensity, warmth, and mutual respect. Reading them dispels the myth of Feyerabend as an irresponsible provocateur and reveals Lakatos as a more conflicted thinker than his published work suggests.

Disclaimer

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.