Chris Argyris: The Trap of “Skilled Incompetence”

Chris Argyris revealed the mechanism by which successful professionals systematically prevent their own learning.

Your organisation says it is committed to AI transformation. It has published the strategy. It has funded the centre of excellence. It has hired the head of AI. It has sent senior leaders on courses and launched pilot programmes. And nothing fundamental is changing. The people closest to the work can see this. They discuss it in hallways, in private messages, over coffee. They do not discuss it in the meetings where it might make a difference. The gap between what is said in public and what is said in private is the gap where learning dies, and Chris Argyris spent forty years explaining why that gap exists and why it is so resistant to being closed.

Argyris studied something more elemental than strategy, structure, or process: how people actually reason when they feel threatened. What he found is devastating for anyone leading transformation. The more successful and senior the professional, the worse they are at learning. Not because they lack intelligence, but because their entire career has trained them to avoid the conditions that learning requires. Their competence at avoiding learning is itself a highly developed skill, refined through decades of practice and rewarded by every organisation they have worked in. Argyris called it skilled incompetence, and it is the most important barrier to organisational change that almost nobody can see, because the skill includes the ability to not see it.

1. Single-Loop and Double-Loop Learning

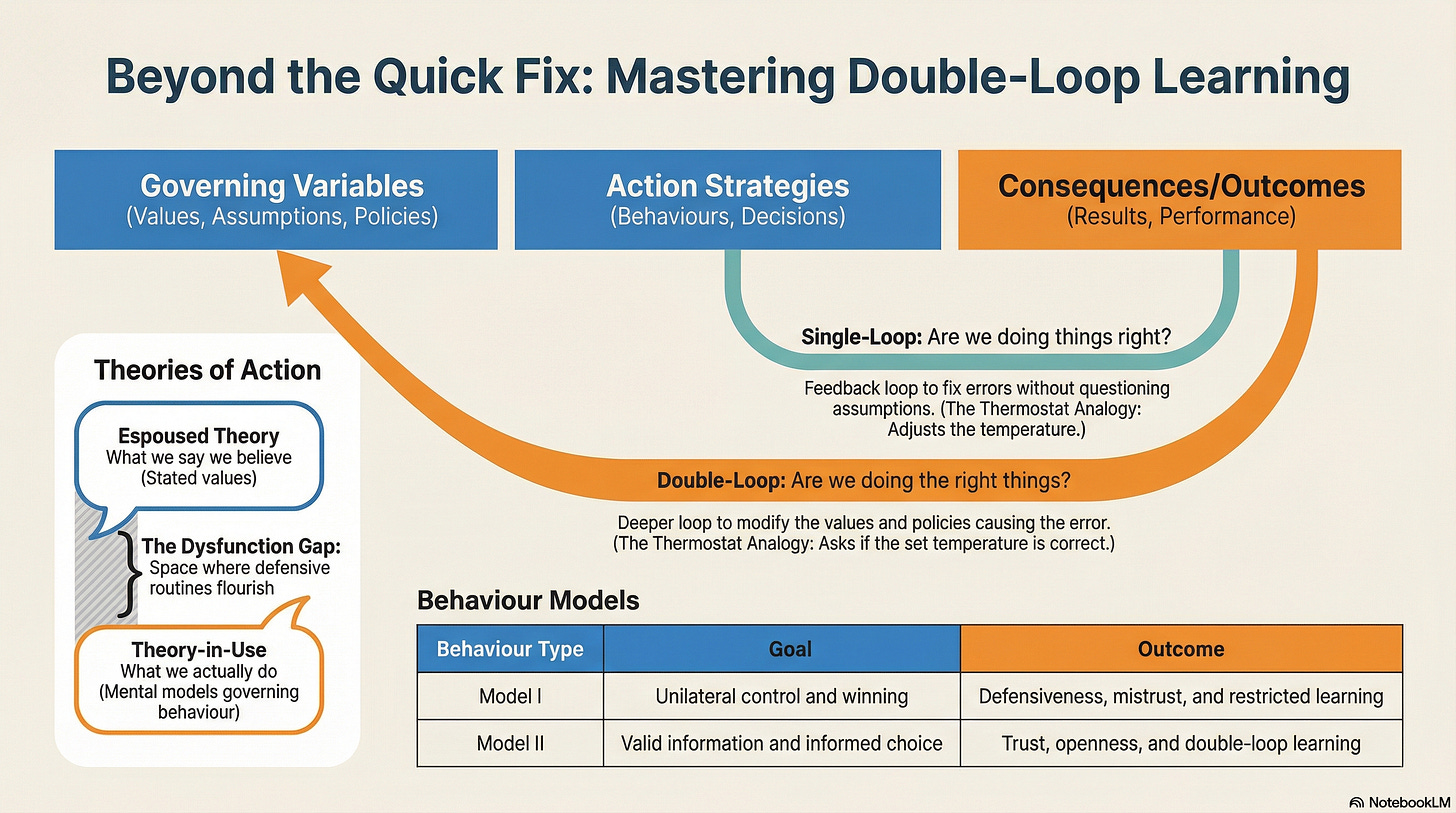

Argyris’s most enduring contribution is the distinction between single-loop and double-loop learning. Single-loop learning detects and corrects errors within existing assumptions, like a thermostat that adjusts the heating to maintain a set temperature. It never asks whether the temperature setting itself is right. Double-loop learning questions the assumptions: is this the right temperature? Should we even be heating this room? Is the room the right shape?
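For readers who think in code, the thermostat analogy can be sketched in a few lines of Python. This is an illustration only, not anything Argyris wrote: the `Thermostat` class, the `room_in_use` flag, and the 12.0 degree fallback are all hypothetical names and values chosen for the example. The single-loop method corrects the error against a fixed setpoint; the double-loop method first asks whether the setpoint still makes sense.

```python
from dataclasses import dataclass


@dataclass
class Thermostat:
    # The governing assumption: "this is the right temperature".
    setpoint: float

    def single_loop(self, room_temp: float) -> str:
        """Correct the error against the setpoint; never question the setpoint."""
        if room_temp < self.setpoint:
            return "heat on"
        return "heat off"

    def double_loop(self, room_temp: float, room_in_use: bool) -> str:
        """Question the governing assumption first, then correct the error."""
        if not room_in_use:
            # The assumption "this room needs heating" no longer holds,
            # so the goal itself is revised (hypothetical fallback value).
            self.setpoint = 12.0
        return self.single_loop(room_temp)


thermostat = Thermostat(setpoint=21.0)
print(thermostat.single_loop(18.0))                     # heat on
print(thermostat.double_loop(18.0, room_in_use=False))  # heat off
```

Both methods end with the same corrective step. The only difference is that the double-loop version is allowed to change the goal before acting on it, which is the part most organisations leave out.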

Most organisations are single-loop machines. They optimise relentlessly within a framework they never examine. When AI-generated code arrives and works, single-loop learning asks: “How do we govern this? How do we test it? How do we make sure it meets our standards?” These are important questions. They are also the wrong first questions, because they assume the existing framework remains valid. Double-loop learning would ask: if code can be generated from specifications, do we still need the distinction between requirements and development? Do we still need the team structures built around that distinction? Is our entire engineering operating model based on an assumption that code is the bottleneck, and is that assumption still true?

Bateson provides the epistemological architecture beneath this distinction. His hierarchy of learning levels maps closely onto it: Learning I is correction of errors within a fixed frame (single-loop). Learning II is questioning the frame itself (double-loop). Most organisations are structurally locked at Learning I, and every thinker in this series diagnoses a different mechanism by which that lock is maintained. Argyris identifies the most fundamental: the people within the organisation are skilled at preventing Learning II from occurring, and their skill operates below the threshold of their own awareness.

2. Espoused Theory and Theory-in-Use

Argyris drew a sharp distinction between two kinds of theory that govern behaviour. Espoused theory is what people say they believe: the values they articulate, the principles they put on slides, the culture they describe in town halls. Theory-in-use is what actually governs their behaviour: the mental models and decision rules that operate in practice, often without conscious awareness.

The gap between the two is where organisational dysfunction lives. Watch it play out in AI adoption. The espoused theory: “We encourage experimentation. We welcome innovation.” The theory-in-use: “We will adopt AI in ways that do not threaten any existing structure, hierarchy, or career path.” These two positions are fundamentally incompatible. And the inability to discuss this incompatibility is the primary obstacle to organisational learning.

This is not hypocrisy. The people who espouse openness to change genuinely believe they mean it. Argyris’s insight is that theories-in-use operate below conscious awareness. A senior technology leader who says “we embrace AI” and simultaneously requires every AI-generated artefact to pass through the same governance process designed for handwritten code is not lying. They are enacting a theory-in-use, “change must not disrupt the structures I control,” that they cannot see because seeing it would require questioning their own role. Kahneman provides the cognitive mechanism: System 1 constructs a coherent story (“we are transforming”) from the available evidence and suppresses awareness of contradictions. WYSIATI (“what you see is all there is”) means the leader literally cannot see the gap. The story is too coherent, and coherence feels like truth.

3. Defensive Routines and the Double Bind

Argyris discovered that organisations develop defensive routines: patterns of behaviour that prevent embarrassment or threat but block learning. These routines have a characteristic property: they are self-sealing. The routine itself cannot be discussed, and the fact that it cannot be discussed also cannot be discussed.

Consider the senior architect who has spent twenty years building distributed systems. AI-generated code arrives and works. It does not handle every edge case. It does not meet every governance requirement. The architect points all of this out, correctly and accurately. But the valid criticism functions as a defensive routine: it prevents the deeper question from being asked. If AI can generate 80% of the code this architect currently writes, what is their role now? That question is undiscussable. And the fact that it is undiscussable is also undiscussable. Anyone who raises it will be met not with argument but with deflection.

Bateson’s double bind theory illuminates why these routines are so resistant to change. A double bind occurs when someone receives contradictory messages at different logical levels and cannot comment on the contradiction. “We encourage honest feedback” delivered in a meeting where honest feedback has historically produced negative consequences is a double bind. The person cannot respond to the explicit message without ignoring the implicit one, and cannot comment on the contradiction without violating the implicit message. The result is paralysis disguised as compliance. Argyris describes the behaviour; Bateson explains the epistemological mechanism. The defensive routine is the person’s rational response to a communication structure that makes honesty structurally impossible.

Bourdieu adds the embodied dimension. Defensive routines are not merely conversational habits that can be changed through training. They are part of the habitus: the accumulated professional dispositions that generate practice below the level of conscious awareness. The architect who deflects the identity-threatening question is not making a strategic choice. Their habitus is producing the only response it knows how to produce. The dispositions were formed through twenty years of operating in organisations that rewarded expertise and punished uncertainty. You cannot instruct someone out of this. You can only create the conditions for new practice to form new dispositions.

4. Model I and Model II

Argyris mapped these patterns into two models of behaviour. Model I, the default for almost everyone, is governed by four values: maintain unilateral control, maximise winning and minimise losing, suppress negative feelings, and be rational. These sound reasonable. They are a recipe for organisational paralysis.

Under Model I, a leader confronted with evidence that their AI strategy is failing will reinterpret the evidence (“it is too early to judge”), blame external factors (“the vendor did not deliver”), suppress the emotional reality (“morale is fine, people just need time”), and reframe rationally (“we are on track against the revised milestones”). At no point do they question the governing values that produced the strategy. This is single-loop reasoning in action.

Model II operates under different governing values: use valid information as the basis for action, ensure free and informed choice, and generate internal commitment to decisions. Under Model II, the same leader would share the evidence of failure openly, invite genuine challenge to the strategy, acknowledge the emotional toll on the team, and create conditions where people could disagree without career risk.

Model II is rare because it requires vulnerability. It requires the leader to say “I may be wrong” in a culture that rewards certainty, to surface conflict in a culture that rewards harmony, and to admit that they do not know the answer in a culture that promotes people who appear to know everything. Stacey would add that Model II is not just an individual behaviour; it is an interaction pattern. When a leader operates in Model II, they change the interaction from which meaning emerges. They make a gesture that calls forth a different kind of response. But the gesture is risky precisely because the response cannot be predicted, and Model I exists to eliminate exactly that kind of risk.

5. Skilled Incompetence: Why the Best People Are the Worst at This

Argyris coined the term skilled incompetence to describe what happens when highly capable professionals apply their considerable skills to avoiding learning. They are not incompetent in the ordinary sense. They are extraordinarily competent at behaviours that prevent the organisation from learning. They smooth over conflict before it can produce insight. They send mixed messages to avoid commitment. They design meetings that produce the appearance of agreement without genuine alignment.

This matters because the people most central to AI transformation (senior engineers, architects, domain experts, technical leaders) are exactly the people who have spent the longest perfecting these skills. They have risen precisely because they learned how to navigate organisations without exposing themselves to situations where they might fail publicly. And now AI adoption demands exactly what they have spent their careers avoiding: admitting that their existing expertise, while still valuable, is no longer sufficient. That the frameworks they have mastered need revision. That the roles they have built may need to change.

Bourdieu explains why this is not a character flaw but a structural condition. The habitus of the senior professional was formed in a field that rewarded certain dispositions: command of technical detail, confidence in recommendations, the ability to close down ambiguity. These dispositions generated the cultural capital that produced career advancement. AI transformation shifts the field: the dispositions that were rewarded are no longer sufficient, and the capital they generated is being devalued. Skilled incompetence is not resistance to change. It is the habitus defending the capital it accumulated under the old rules of the game. It is entirely rational, and it is almost entirely invisible to the person it governs.

6. Creating the Conditions

Argyris was not merely a diagnostician. He spent decades working with organisations to develop the capacity for double-loop learning. The core requirement is deceptively simple: people must learn to make their reasoning explicit and genuinely testable. Not “I think X” but “I think X because of Y and Z, and here is the evidence that would change my mind.”

This is Model II in practice, and it cannot be taught through lectures or training programmes. It requires leaders to model the behaviour themselves. If the head of AI cannot say “I was wrong about our approach to specification governance, and here is what I learned,” then no amount of psychological safety workshops will produce double-loop learning in the organisation. The signal that matters is not what the organisation says about learning. It is what happens to the first person who publicly admits they were wrong.

Dekker’s just culture becomes essential here. If the response to honest admission of error is blame, however subtly expressed, then Model I is the only rational choice. Argyris and Dekker converge from different directions: you cannot mandate learning. You can only create the conditions in which learning becomes safe enough to attempt. Seligman adds a further dimension: when people have experienced repeated failures of organisational learning, when they have raised uncomfortable truths before and been punished for it, they develop learned helplessness and stop trying. Not because they lack insight, but because experience has taught them that insight is not rewarded. The defensive routines win not because they are strong, but because the people who might challenge them have been trained, through repeated experience, that challenge is futile.

Heifetz names the leader’s task with precision: hold the distress. Do not resolve it prematurely by providing the answer. Do not let it overwhelm by ignoring the emotional cost. Create the space in which the discomfort of examining one’s own reasoning can be tolerated long enough for genuine learning to occur. This is the adaptive challenge at the heart of every transformation: the people with the problem are the problem, and the leader is among those people.

(An Organisational Prompt is something you can do now.)

Organisational Prompt

Pick one statement your organisation makes about its transformation: “We encourage experimentation,” “We are committed to change,” “We value innovation.” Now find one concrete example where the organisation’s actual behaviour contradicts that statement. Write both down side by side.

Show them to a trusted colleague and ask: “Can we discuss this contradiction in a leadership meeting?” If the answer is no, or if you already know the answer is no without needing to ask, you have found a defensive routine. That routine is more important than your strategy, because it determines whether your strategy can learn. The gap between the espoused theory and the theory-in-use is the precise location of the obstacle. Everything else is downstream.

Further Reading

Chris Argyris, Teaching Smart People How to Learn (Harvard Business Review, 1991). The single most important article on why success-oriented professionals resist learning. Read this before anything else if you are short on time.

Chris Argyris, Overcoming Organizational Defenses (1990). The most accessible entry point to Argyris’s thinking. Short, direct, and full of examples that will make you uncomfortably recognise your own organisation.

Chris Argyris and Donald Schön, Organizational Learning: A Theory of Action Perspective (1978). The foundational text on single-loop and double-loop learning. Academic in style but essential in substance.

Chris Argyris and Donald Schön, Organizational Learning II: Theory, Method, and Practice (1996). The mature statement. The treatment of defensive routines and Model II intervention is the most developed account available.

Chris Argyris, Knowledge for Action (1993). Where Argyris shows how to move from diagnosis to intervention. The practical companion to the theoretical work.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.