Dekker: The Safety of Change

Sidney Dekker’s safety science reveals why blame is the most expensive habit in organisational transformation.

A senior technology leader described a pattern to me recently. A cloud migration had gone badly. The team had missed a critical dependency, and a production system went down for six hours. Within a day, the incident review had identified the engineer who had approved the change. Within a week, the engineer had been moved off the programme. Within a month, every other team on the programme had quietly stopped making changes that carried any risk at all. The transformation stalled, not because the technology was wrong, but because the organisation’s response to failure had taught everyone the same lesson: if something goes wrong, someone will pay.

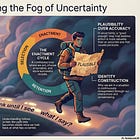

Sidney Dekker is a giant in safety science, best known for analysing plane crashes and medical errors. His work might seem distant from organisational transformation, but it is not. Dekker’s core insight is that the way an organisation responds to failure determines whether it can learn. Blame does not just punish the individual. It reshapes the information flow of the entire system, suppressing precisely the signals that transformation depends on. If Argyris diagnosed the defensive routines that prevent learning, Dekker explains what activates them. If Westrum described the cultures in which information flows freely, Dekker provides the mechanism that moves an organisation from one culture type to another. His work sits at the intersection of everything this series has been building: the question of whether an organisation can tell the truth about what is actually happening.

1. Work-as-Imagined vs Work-as-Done

In every organisation, there are two realities. There is work-as-imagined: the transformation roadmap, the new operating model, the process documentation designed in a room several floors removed from where the work happens. Then there is work-as-done: the messy, adaptive, improvisational reality of how people actually get things out the door.

Argyris named the same gap differently: espoused theory versus theory-in-use. The organisation says it values experimentation; its actual response to failed experiments tells a different story. Dekker makes the practical consequences vivid. When leaders confuse the map with the territory, when they assume that because a new process has been “rolled out” it is being followed, they respond to deviation by enforcing stricter compliance. More governance. More checkpoints. More reporting. But as Dekker demonstrates from decades of accident investigation, if people followed rules literally, without their usual adaptive workarounds, most systems would grind to a halt. The workarounds are not the problem. They are how the organisation actually functions. Suppressing them does not produce compliance. It produces concealment.

The gap between work-as-imagined and work-as-done is not a communication failure. It is an information failure. The organisation cannot learn because the information about how work actually happens cannot reach the people who need it. And it cannot reach them because the system punishes the messenger.

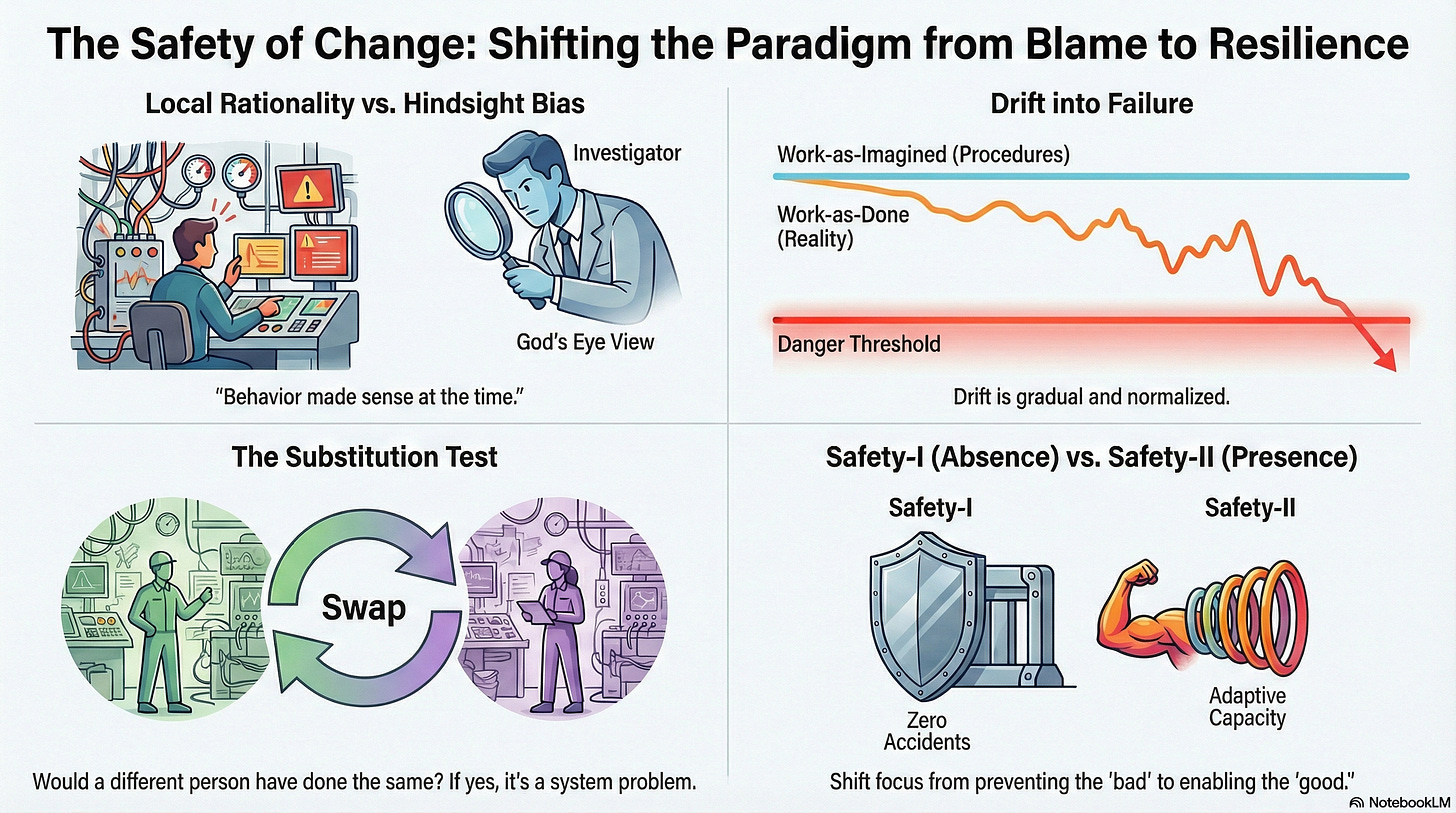

2. Local Rationality: Why “Resistance” Is Usually Adaptation

When a team fails to adopt a new tool or reverts to legacy behaviours, the standard response is to label them as “resistant to change.” Dekker calls this the Bad Apple Theory: the assumption that the system is fine and problems are caused by errant individuals.

The principle of local rationality inverts this entirely. People do not come to work to do a bad job. Their behaviour, even when it wrecks your timeline, made sense to them at the time, given their goals, their focus of attention, and the tools available. The team that bypassed the new AI-assisted workflow did so because the workflow did not account for something they consider critical. The developer who reverted to the old deployment process did so because the new one introduced a risk the process designers never saw.

Bourdieu would recognise this immediately. What looks like resistance is habitus in action: the accumulated professional dispositions, formed through years of practice, generating the only response the practitioner’s body knows how to produce. You cannot instruct someone out of their habitus. You can only create the conditions for new practice to form new dispositions. The leader who frames non-compliance as defiance has misdiagnosed an identity problem as a discipline problem, and the remedy they reach for (enforcement) will deepen exactly the concealment they should be trying to prevent.

3. The Substitution Test: Blame as Category Error

The most practically useful tool in the just culture literature Dekker works in is the substitution test, usually credited to Neil Johnston and popularised by James Reason. When something goes wrong, ask: if you replaced this person or team with another similarly trained group in the exact same situation, would they likely have done the same thing? If yes, you have a systemic issue, not a people problem.

This is not a soft concession. It is a diagnostic with teeth. Bateson would call blame a category error: it treats a systemic failure, which operates at the level of the organisation’s governing assumptions, as an individual error, which operates at the level of a single person’s behaviour. In Bateson’s terms, blame applies a Learning I correction (fix the person) to a Learning II problem (fix the frame). The substitution test forces the organisation to identify which level the problem actually sits at. Deming made the same point with characteristic bluntness: 94% of troubles belong to the system and are the responsibility of management. Only 6% are special causes attributable to individual workers.

Every time an organisation blames a person for a systemic failure, it learns nothing about the system and teaches everyone else to hide anything that might make them the next target. The substitution test is not about being kind. It is about being accurate.

4. Drift into Failure

Transformations rarely fail overnight. They suffer from what Dekker calls drift into failure: a gradual process where small, locally rational adaptations accumulate over time until the system crosses a boundary it did not know was there.

A team skips the daily stand-up because the meetings feel unproductive. Another team bypasses a security check because it slows deployment. A third modifies the specification template because the original was too rigid for their domain. Each adaptation is sensible in isolation. Together, they migrate the organisation far from its intended design, and nobody notices because the drift is incremental and each step felt like an improvement.

Giddens explains the mechanism: structure reproduces itself through daily practice. But it does not reproduce itself identically. Each small adaptation is a micro-change in the structure, and the accumulated micro-changes constitute a macro-drift that is invisible to anyone inside the system. The organisation’s formal description of itself (its processes, its governance, its roadmap) diverges steadily from its actual operation, and the divergence itself becomes undiscussable because acknowledging it would mean acknowledging that the plan is no longer being followed.

Drift is often accelerated by production pressure. If you cut budgets while demanding transformation, you are engineering drift. The teams under pressure will find workarounds, because their local rationality demands it, and the workarounds will accumulate until the gap between the plan and reality is too wide to bridge. The leader who is closest to the work will see the drift first. The leader who is furthest from it will see it last, if at all.

5. Safety-II: The Presence of Adaptive Capacity

Traditional management operates on what Dekker’s field calls Safety-I, a term coined by Erik Hollnagel: making sure as few things as possible go wrong. Success is defined as the absence of failure. Transformation governance consists of Red/Amber/Green status reports tracking defects, delays, and budget overruns.

Safety-II proposes a fundamental inversion. It defines success as the presence of adaptive capacity: the ability of the organisation to handle surprises, variations, and conditions that the plan did not anticipate. Do not define the success of your transformation by the lack of complaints. Define it by the presence of people who are actively navigating complexity and finding ways to make the new model work.

This connects directly to what Stacey describes as skilled participation: the capacity to respond to what emerges rather than executing what was planned. An organisation with adaptive capacity is one where Weick’s sensemaking operates freely, where people can interpret ambiguous signals, improvise responses, and learn from what happens. An organisation fixated on Safety-I, on the absence of deviation, systematically destroys the adaptive capacity it most needs. The team that improvised a workaround to make the new system function is not failing. It is doing precisely the sensemaking that transformation requires. The question is whether the organisation can see that, or whether its governance framework has defined improvisation as non-compliance.

6. Just Culture: Where Information Flows

How you respond when something goes wrong determines whether your organisation can learn. Dekker distinguishes between retributive justice (who is to blame? how should they be punished?) and restorative justice (who has been affected? what needs to be repaired? how do we prevent recurrence?). The distinction is not philosophical. It determines the quality of information flow across the entire organisation.

Westrum’s typology makes this precise. In a pathological culture, failure produces blame, and blame produces silence. In a bureaucratic culture, failure produces process, and process produces delay. In a generative culture, failure produces inquiry, and inquiry produces learning. Dekker’s just culture is the mechanism that moves an organisation from pathological to generative. It does not do so by being lenient. It does so by being accurate: distinguishing systemic failures from individual negligence, and responding to each appropriately.

The cost of blame is not measured in morale surveys. It is measured in the information the organisation never receives. After blame, teams interact differently: more cautiously, with more concealment, with less of the social learning that Stacey describes as the medium through which transformation actually occurs. The weak signals that the organisation most needs (the early warnings of drift, the quiet innovations, the honest assessments of what is and is not working) are precisely the signals that blame suppresses. A just culture does not just protect individuals. It protects the information ecology that makes learning possible.

(An Organisational Prompt is something you can do now.)

Organisational Prompt

After the next incident, failure, or missed milestone in your transformation programme, observe what happens in the forty-eight hours that follow. Not the formal incident review. The informal signals. Who gets called into a meeting, and what do they look like when they come out? What do people say in the hallway that they did not say in the room? What language does leadership use: “Who is responsible?” or “What did we learn?”

These signals are the leading indicators of your information culture. If people are concealing, hedging, and managing impressions in the aftermath of failure, your organisation is in Westrum’s pathological or bureaucratic mode, and no amount of governance will produce the learning your transformation requires. If people are openly discussing what happened, proposing experiments to address it, and showing curiosity rather than anxiety, you have the beginnings of a generative culture. The signals after failure tell you more about your organisation’s capacity to transform than any status report ever will.

Further Reading

Sidney Dekker, The Field Guide to Understanding ‘Human Error’ (3rd edition, 2014). The most accessible entry point. The chapter on the “New View” of human error is the single best corrective to the blame instinct in organisational life.

Sidney Dekker, Drift into Failure (2011). How complex systems fail gradually through the accumulation of small, locally rational decisions. Read it alongside any transformation retrospective and the parallels will be uncomfortable.

Sidney Dekker, Just Culture: Restoring Trust and Accountability in Your Organization (3rd edition, 2016). The practical framework for moving from retributive to restorative responses to failure. The substitution test alone is worth the price.

Ron Westrum, A Typology of Organisational Cultures (Quality and Safety in Health Care, 2004; the journal is now BMJ Quality & Safety). The generative/bureaucratic/pathological typology that Dekker’s just culture operationalises. Freely accessible.

W. Edwards Deming, Out of the Crisis (1986). The statistical argument for why most variation is systemic, not individual. The Red Bead Experiment is the most vivid demonstration of what blame gets wrong.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.