Rumelt & Martin: Goals Are Not a Strategy

Rumelt and Martin Might Say That Your Organisation Has Goals, Not a Strategy

Most transformation programmes have a strategy document. It contains an aspiration (“become an AI-first organisation”), a set of goals (adoption targets, cost savings, headcount adjustments), and a list of initiatives (pilots, platforms, training programmes).

That document is likely wrong, not in its ambition but in its genre. It has goals where it needs a diagnosis. It has initiatives where it needs coherent action. It has aspiration where it needs choices. Richard Rumelt would call it bad strategy. Roger Martin would say the organisation is playing to play, not playing to win. They might both be right, and the distinction matters because the difference between bad strategy and good strategy is not quality of thinking. It is willingness to decide.

1. The Kernel: Diagnosis, Guiding Policy, Coherent Action

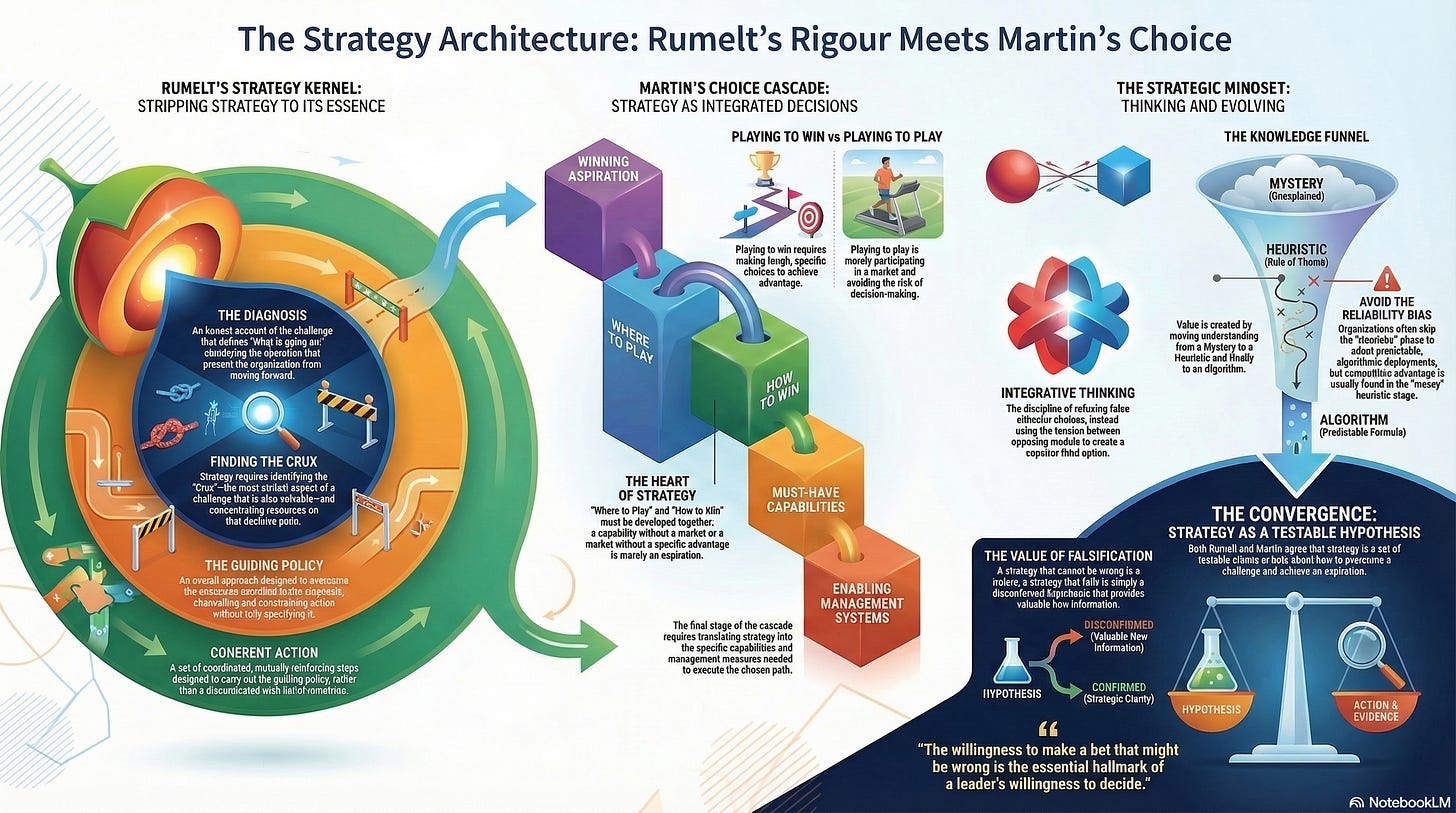

Rumelt’s contribution is to strip strategy to its essential structure.

A good strategy is a coherent response to a high-stakes challenge.

It consists of three inseparable elements: a diagnosis that defines what is going on, a guiding policy that establishes the overall approach, and coherent actions that carry out the policy. Remove any one and the strategy collapses.
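The "remove any one and the strategy collapses" test can be sketched as a simple completeness check. This is a minimal illustration, not Rumelt's own method: the `Kernel` class, its field names, and the example entries are hypothetical, and a presence check says nothing about whether the diagnosis is honest or the actions actually reinforce one another.

```python
from dataclasses import dataclass, field


@dataclass
class Kernel:
    """Hypothetical container for the three elements of the kernel."""
    diagnosis: str = ""
    guiding_policy: str = ""
    coherent_actions: list[str] = field(default_factory=list)

    def missing_elements(self) -> list[str]:
        """Return the names of the kernel elements that are absent."""
        missing = []
        if not self.diagnosis.strip():
            missing.append("diagnosis")
        if not self.guiding_policy.strip():
            missing.append("guiding_policy")
        if not self.coherent_actions:
            missing.append("coherent_actions")
        return missing

    def is_complete(self) -> bool:
        """True only when all three elements are present."""
        return not self.missing_elements()


# A document that lists initiatives but never says what is going on
# (illustrative entries only).
initiatives_only = Kernel(coherent_actions=["launch pilots", "buy a platform"])
print(initiatives_only.missing_elements())
print(initiatives_only.is_complete())
```

A document with initiatives but no diagnosis or guiding policy fails the check, which is the typical shape of a strategy that is really an initiative list.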

The diagnosis is the foundation, and it is the element most consistently absent. Rumelt is blunt: “a great deal of strategy work is trying to figure out what is going on.” The diagnosis is not a statement of goals or desires. It is an honest account of the challenge, including the uncomfortable parts. The medical analogy is deliberate: a doctor who prescribes treatment without a diagnosis is committing malpractice. An executive who, for instance, launches more AI initiatives without diagnosing what prevents the organisation from using AI effectively is doing the same.

The guiding policy channels and constrains action without fully specifying it. It creates advantage by concentrating effort on a pivotal aspect of the situation. The coherent actions are the punch: coordinated steps designed to carry out the policy. They must be mutually reinforcing, not a disconnected wish list. Rumelt observes that when executives complain about “execution problems,” it is usually because they confused setting goals with setting strategy. Bringing strategy down to action level flushes out the conflicts that aspirational language conceals.

For AI transformation, the kernel exposes the standard failure pattern. The fluff is “leveraging AI to drive innovation and competitive advantage.” The failure to face the challenge is the omission of what actually prevents adoption: specification capability, domain knowledge fragmentation, governance designed for a different era, cultural resistance rooted in legitimate fear. The goals masquerading as strategy are “deploy AI across 50% of business processes by 2026.” And the absence of coherent action is a portfolio of disconnected pilots that nobody has diagnosed as a system.

2. The Crux: Finding the Decisive Point

In his later work, Rumelt introduced the crux: the most critical aspect of a challenge that is also solvable. In rock climbing, the crux is the hardest section of the route; if you cannot get past it, you should not attempt that climb. In strategy, the crux forces the same discipline. Focus on the decisive point, not on everything at once.

The crux of most AI transformations is not the technology. It is the organisation’s inability to articulate what it wants with enough precision for AI to act on it. This is a specification problem, not a tooling problem. Focusing resources on AI platforms when the crux is specification capability is what Rumelt calls the chain-link error: you improve one link while the weakest link remains untouched, and the system’s performance remains bounded by what it cannot do, not by what it can.
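The chain-link error described above can be made concrete with a little arithmetic. This is a sketch under a strong assumption: that system performance equals the score of the weakest link. The link names and the 0 to 1 scores are invented for illustration and are not measurements of any real organisation.

```python
def system_performance(links: dict[str, float]) -> float:
    """Chain-link assumption: the system is only as strong as its weakest link."""
    return min(links.values())


def weakest_link(links: dict[str, float]) -> str:
    """Name of the link that currently bounds the system."""
    return min(links, key=links.get)


# Hypothetical capability scores on a 0-1 scale (illustrative numbers only).
links = {"ai_platform": 0.6, "specification": 0.2, "governance": 0.5}

baseline = system_performance(links)

# Investing in the platform leaves the weakest link untouched.
platform_upgrade = {**links, "ai_platform": 0.9}

# Investing in specification capability lifts the bound.
specification_upgrade = {**links, "specification": 0.5}

print(weakest_link(links), baseline)
print(system_performance(platform_upgrade))
print(system_performance(specification_upgrade))
```

Under this assumption the platform investment changes nothing, while the same effort applied to specification capability raises the whole system. Real organisations are messier than a `min` over three numbers, but the direction of the argument is the same.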

The connection to Herbert Simon is direct. Proximate objectives, goals close enough to be feasible, are a strategic application of Simon’s idea of bounded rationality. Rumelt uses them this way: instead of “become AI-first,” set an objective achievable this quarter, such as “Three teams will have written specifications that generate working AI outputs without manual rework.” Each proximate objective creates momentum and learning that informs the next. This is the antithesis of the big-bang transformation programme, and it is the only approach that respects what Simon showed about how decisions actually get made in real organisations.

3. The Choice Cascade: Strategy as Five Integrated Decisions

Martin’s contribution is complementary. Where Rumelt begins with the challenge and works toward action, Martin begins with aspiration and works toward the systems that make it real. His Strategy Choice Cascade, developed with A.G. Lafley at Procter & Gamble, frames strategy as five integrated choices: a winning aspiration, where to play, how to win, must-have capabilities, and enabling management systems.

The heart is where to play and how to win, and they must be developed together. A where-to-play without a how-to-win is an aspiration. A how-to-win without a where-to-play is a capability in search of a market. Most AI strategies define where to play (“we will use AI in customer service, underwriting, and operations”) without ever specifying how to win (“our competitive advantage will be superior specification quality from deep domain expertise”). The where-to-play sounds strategic. Without a matched how-to-win, it is a shopping list.

Martin’s sharpest distinction is between playing to win and playing to play.

“When companies set out to participate in a market instead of winning, they will inevitably fail to make the tough choices that would make winning even a remote possibility.”

Playing to play means deploying AI broadly and hoping something sticks. Playing to win means choosing specific domains where your organisation’s domain knowledge gives it a specification advantage that competitors cannot match, and concentrating resources there. The first feels responsible. The second feels risky. Only the second is strategy.

The fourth and fifth boxes, capabilities and management systems, are where most organisations lose the plot. Without them, the strategy cannot be executed because it has not been translated into what the organisation must be able to do. If the how-to-win is “superior specifications from domain experts,” then the must-have capability is specification skill, which means creating the conditions for learning: training, practice, feedback loops, and a culture that values specification quality over AI output volume. The enabling management systems are the measures that tell you whether it is working.

4. Integrative Thinking: Refusing the False Choice

Martin’s second major contribution is integrative thinking: the discipline of refusing to accept unpleasant trade-offs as given. Most people, when faced with opposing options, simply choose one at the expense of the other. Martin’s research found that the most effective leaders use the tension between opposing models as raw material for creating a superior third option. Follett is a good mirror here.

The AI transformation is full of apparent either/or choices. Maintain governance or empower experimentation. Invest in tooling or invest in people. Centralise AI strategy or let teams diverge. Martin would say each dichotomy is false. The integrative response to “governance or experimentation” is to design governance that enables experimentation: guardrails that constrain the playing field without constraining the play within it. This is Bungay’s directed opportunism (see the military leaders article) applied to AI, and Ackoff’s dissolving applied to the governance problem.

The connection to Beer is structural. Beer’s 3-4 homeostat holds the tension between inside-and-now (System 3, optimisation) and outside-and-then (System 4, intelligence). Martin’s integrative thinking is the cognitive discipline that Beer’s architecture makes structurally possible. The viable system does not choose between exploit and explore. It maintains both, held in tension by an identity (System 5) that refuses the either/or.

5. The Knowledge Funnel: Mystery, Heuristic, Algorithm

Martin’s knowledge funnel describes how value is created through the progressive refinement of understanding. A mystery (something we cannot explain) is narrowed to a heuristic (a rule of thumb that guides action) and then codified into an algorithm (a fixed formula that produces predictable outcomes).

Most organisations are in the mystery phase of AI adoption: they do not yet understand what AI can reliably do in their specific context. The temptation is to skip to algorithm: buy a platform, deploy standard use cases, measure adoption percentages. This skipping produces what Martin calls the reliability bias: organisations adopt AI in the most predictable, measurable ways (chatbots, summarisation, code completion) while ignoring the harder mysteries (domain-specific reasoning, specification-driven generation, human-AI collaboration models that do not yet exist).

The heuristic phase is where the real value lies. Teams experimenting with AI in their specific domain, developing rules of thumb about what works, building tacit knowledge about specification quality. This is Mintzberg’s potter at the wheel, translated into the AI context. Organisations that skip the heuristic phase and jump to algorithmic deployment will get commodity AI applications that provide no competitive advantage. The heuristic phase is uncomfortable because it cannot be measured on a dashboard. It looks like mess. It is the mess from which strategy emerges.

6. Strategy as Hypothesis

Rumelt and Martin converge on a single insight that reframes how leaders should think about deciding. A strategy is not a plan. It is a hypothesis. Compare this to Stacey and Popper.

Rumelt insists that a good strategy is a testable claim about how to overcome a challenge. Martin argues that the five-box cascade is a set of bets: “we believe that if we play here and win this way, we will achieve our aspiration.” Both insist that a strategy that cannot be wrong is not a strategy. It is a truism.

This reframes failure. A strategy that does not produce the expected result is not a disgrace. It is a hypothesis disconfirmed, which is information. The willingness to make a bet that might be wrong is the price of strategic clarity. The unwillingness to bet is, in both Rumelt’s and Martin’s terms, the hallmark of bad strategy: the organisation has avoided choosing, and has therefore avoided deciding.

Popper is the philosophical ancestor here. A strategy that cannot be falsified is the organisational equivalent of a theory that cannot be tested. It is safe, it is comfortable, and it tells you nothing.

(An Organisational Prompt is something you can do now.)

Diagnose before you prescribe.

Take your current AI strategy and remove the aspirations, the goals, and the initiative list. What remains is the diagnosis: the honest account of what is preventing your organisation from using AI effectively. If nothing remains, you do not have a strategy. You have a wish list. Write the diagnosis. One page. What is actually going on? What is the crux, the single hardest obstacle that is also solvable? If you cannot name it, you are not ready to decide. If you can name it but the document does not mention it, the strategy has been written to avoid the truth rather than to confront it. Rumelt’s first hallmark of bad strategy is the failure to face the challenge. Face it. Everything else follows from that.

Further Reading

Richard Rumelt: Good Strategy/Bad Strategy - The essential starting point. The kernel framework, the four hallmarks of bad strategy, and the insistence that strategy begins with diagnosis. One of the most useful management books written this century.

Richard Rumelt: The Crux - Extends the kernel with the crux concept and the Strategy Foundry process for group strategy creation.

Roger Martin and A.G. Lafley: Playing to Win - The Strategy Choice Cascade. Practical, case-rich, and immediately applicable. The distinction between playing to win and playing to play is worth the book alone.

Roger Martin: The Design of Business - The knowledge funnel, the reliability-validity tension, and abductive reasoning. Essential for understanding why organisations systematically under-explore.

Roger Martin: The Opposable Mind - Integrative thinking. Why the best leaders refuse the either/or and how they generate superior options from opposing models.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.