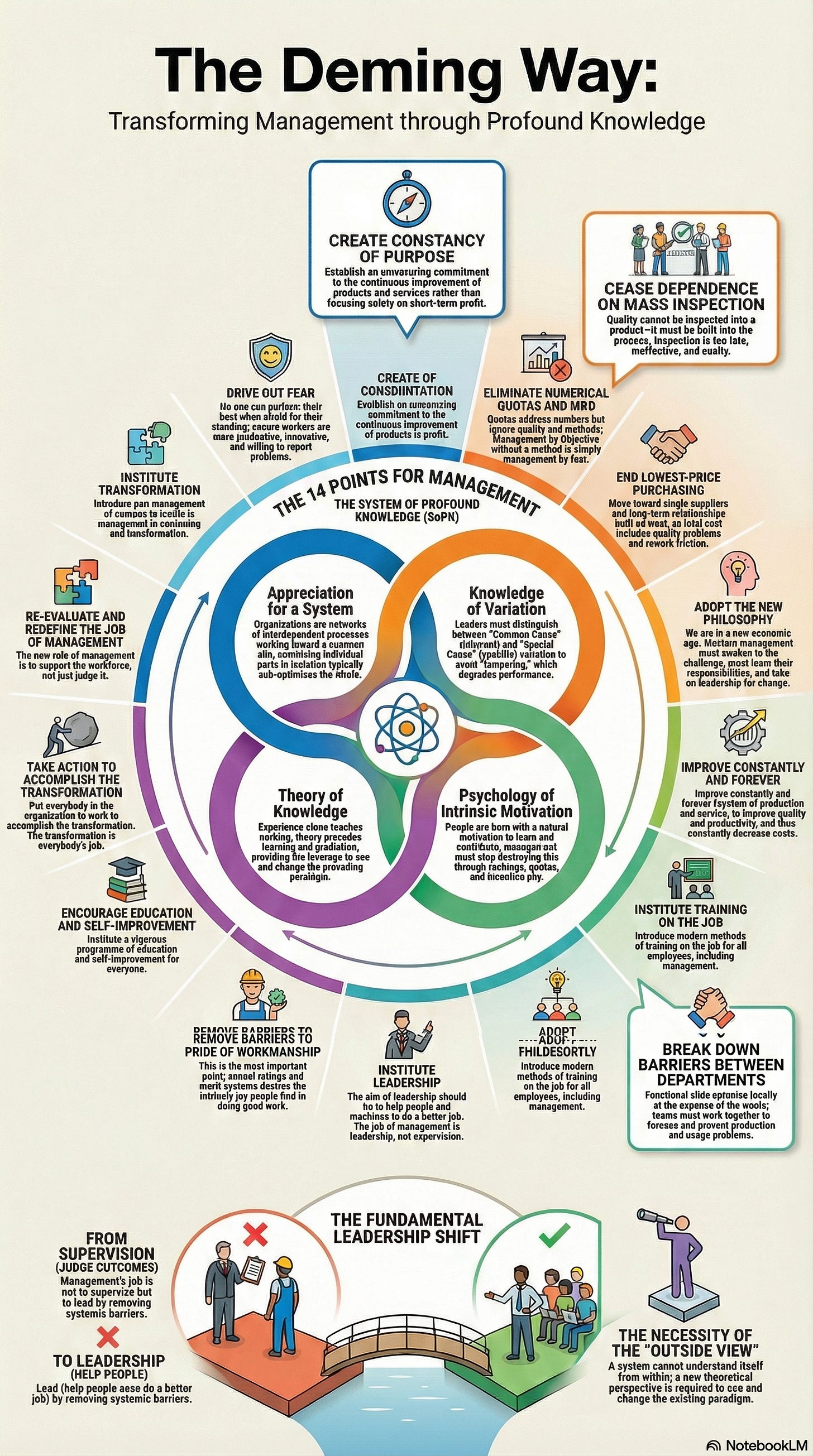

Deming: An Architect of Transformation

W. Edwards Deming’s management philosophy shows why most transformation failures belong to the system, not the worker.

A senior technology leader told me about a quarterly review that had gone badly. Two teams had missed their delivery targets. The executive response was swift: new reporting requirements, tighter governance, a restructured incentive scheme. Within six months, the teams were hitting their numbers. They were also hiding problems, gaming metrics, and quietly abandoning the exploratory work that had made them the organisation’s most innovative groups. The intervention had succeeded on its own terms and failed on every term that mattered. The system had been optimised for compliance. The capacity for learning had been destroyed.

W. Edwards Deming is often reduced to the “quality guru” who helped rebuild post-war Japan. This undersells him badly. Deming was a statistician, a systems thinker, and a philosopher of management whose central insight remains the most uncomfortable truth in organisational life: by his own estimate, 94% of troubles belong to the system, and the system is the responsibility of management. Only 6% are attributable to special causes at the level of the individual worker. If your transformation is failing, Deming would not ask what is wrong with your people. He would ask what is wrong with your system, and then he would ask why you are looking anywhere other than the mirror.

1. Appreciation for a System: The Interaction Problem

Deming defined a system as a network of interdependent components working together to accomplish an aim. His warning was blunt: if you optimise the individual parts separately, you will sub-optimise the whole. The sales team incentivised to close deals that engineering cannot build, the operations function rewarded for cutting the very spending the transformation requires, the AI centre of excellence measured on adoption metrics while the teams it serves are measured on delivery velocity: these are not coordination failures. They are design failures. The parts are performing exactly as the incentives instruct them to. The system is producing exactly what it was designed to produce.

Senge built his Fifth Discipline explicitly on Deming’s systems view, arguing that today’s problems come from yesterday’s solutions because leaders fail to see the feedback loops in the system. Stacey would push further: the system is not a machine that management can redesign from outside. It is a pattern of interaction that shifts only when the interactions themselves shift. But Deming provides something that Stacey’s framework does not: the statistical discipline to distinguish signal from noise before intervening. The leader who cannot tell the difference between a systemic pattern and a random fluctuation will intervene in ways that make the system worse. Deming called this tampering, and it is one of the most common and least recognised pathologies in organisational transformation.

2. Common Cause and Special Cause: The Category Error

Deming’s distinction between common cause variation (inherent in the system) and special cause variation (attributable to a specific, unusual event) is one of the most practically useful ideas in the series. Common cause variation is the natural output of the process as designed. Special cause variation is a signal that something outside the normal process has intervened.
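In practice the distinction is drawn with a control chart. The sketch below is a minimal individuals (XmR) chart in Python, one common form of Shewhart chart rather than anything specific to this article: natural process limits are computed from a baseline period using the conventional 2.66 × average-moving-range rule, and only points outside those limits are flagged as candidate special causes. The function names and the lead-time figures are hypothetical, chosen purely for illustration.

```python
from statistics import mean


def xmr_limits(baseline):
    """Natural process limits for an individuals (XmR) control chart.

    Returns (lower, centre, upper). The 2.66 constant is 3 / d2, with
    d2 = 1.128 for moving ranges of two consecutive points.
    """
    centre = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    spread = 2.66 * mean(moving_ranges)
    return centre - spread, centre, centre + spread


def classify(values, limits):
    """Label each point 'common' (inside the limits) or 'special' (outside)."""
    lower, _, upper = limits
    return ["special" if v < lower or v > upper else "common" for v in values]


# Hypothetical weekly lead times (days) for one team.
baseline = [10, 12, 11, 13, 9, 11, 12, 10, 11, 11]
limits = xmr_limits(baseline)
print(classify([12, 14, 9, 19], limits))  # ['common', 'common', 'common', 'special']
```

The limits come from a baseline period rather than from the points being judged, so a single extreme week cannot widen the limits that are meant to catch it. Note that the 14-day week, though the worst ordinary result, sits inside the limits: treating it as a special cause would be tampering in Deming's sense.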

The pathology Deming identified is tampering: reacting to common cause variation as though it were a special cause. A project is late; the leader reorganises the team. A quarter underperforms; the executive institutes new reporting requirements. A pilot fails; the sponsor replaces the lead. Each intervention assumes that the specific event was caused by a specific failing. But if the variation is common cause, if it is produced by the system itself, then the intervention addresses the wrong level. The reorganised team will produce the same variation because the system that produced it has not changed. Worse: the intervention introduces additional instability, increasing variation rather than reducing it.
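Deming's funnel experiment makes the cost of tampering concrete. The sketch below is a one-dimensional simplification in Python, an assumption of this example rather than Deming's original table-top setup: a funnel aimed at a target drops marbles that land with random common-cause scatter. Under what Deming called Rule 1 the funnel is left alone; under Rule 2 it is moved after every drop to compensate for the last error. The function name and parameters are hypothetical.

```python
import random
from statistics import pstdev


def funnel(rule, drops=10_000, seed=1):
    """Simulate the funnel in one dimension; the target is 0.

    rule=1: never move the funnel (leave the system alone).
    rule=2: after each drop, move the funnel from its last position
            by minus the last error (tampering).
    """
    rng = random.Random(seed)
    position = 0.0
    results = []
    for _ in range(drops):
        landing = position + rng.gauss(0, 1)  # common-cause scatter, sd = 1
        results.append(landing)
        if rule == 2:
            position -= landing  # "correct" for the last error
    return results


print(round(pstdev(funnel(1)), 2))  # close to 1.0
print(round(pstdev(funnel(2)), 2))  # close to 1.41: tampering inflates spread
```

Under Rule 2 the funnel always ends up at minus the previous error, so each landing is the difference of two independent errors. Its variance doubles and its standard deviation rises by roughly √2: the well-meant adjustment makes the output worse than doing nothing.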

Bateson would recognise this immediately as a category error: applying a Learning I correction (fix the specific instance) to a Learning II problem (the system’s governing logic produces the variation). Kahneman provides the cognitive mechanism: regression to the mean makes it likely that an extreme result will be followed by a more average one, and the leader who intervened will attribute the improvement to their intervention rather than to the statistical tendency. The result is a leader who becomes increasingly confident in tampering, because the numbers so often seem to vindicate it. The Red Bead Experiment, Deming’s most vivid teaching device, demonstrates this with uncomfortable clarity: workers instructed to produce zero defects from a process that inherently produces defects learn nothing about the process but learn a great deal about the futility of effort within a system that cannot deliver what management demands.
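Kahneman's mechanism is easy to reproduce. The sketch below (Python; the function name, threshold and seed are hypothetical) generates quarterly results that are pure common-cause noise from one stable system, lets a leader "intervene" after every unusually bad quarter, and gives the intervention no effect at all. It then counts how often the following quarter improved anyway.

```python
import random


def vindication_rate(quarters=10_000, threshold=-1.5, seed=7):
    """Share of do-nothing 'interventions' followed by a better quarter.

    Results are independent draws from the same stable system (mean 0, sd 1),
    so nothing the leader does can change them.
    """
    rng = random.Random(seed)
    results = [rng.gauss(0, 1) for _ in range(quarters)]
    interventions = improved = 0
    for current, following in zip(results, results[1:]):
        if current < threshold:  # a bad quarter triggers "action"
            interventions += 1   # ...which changes nothing
            if following > current:  # yet the next quarter looks better
                improved += 1
    return improved / interventions


print(round(vindication_rate(), 2))  # comfortably above 0.9
```

With the trigger set 1.5 standard deviations below the mean, the next quarter beats the bad one more than 93% of the time in expectation, purely because the bad quarter was extreme. A leader keeping score would conclude that the interventions work.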

3. Drive Out Fear: Where Information Dies

Perhaps the most important of Deming’s 14 Points for management is Point 8: drive out fear. His reasoning was precise: where there is fear, there are wrong figures. If people are afraid, they will hide defects, pad estimates, suppress problems, and tell leadership what leadership wants to hear. The information the organisation receives will be shaped by what is safe to say rather than by what is true. And a system operating on false information will produce false confidence, the most dangerous state an organisation can be in, because it feels like clarity.

This is the information pathology at the heart of the series. Argyris describes the same mechanism as defensive routines: people become skilled at preventing their own learning because honesty threatens their professional identity. Dekker operationalises Deming’s insight through the substitution test and just culture: if you replaced this person with another similarly trained individual in the same situation, would they have done the same thing? If yes, the problem is systemic, and blaming the individual teaches the system to conceal. Westrum’s typology makes the consequences measurable: in pathological cultures, messengers are shot; in bureaucratic cultures, messengers are neglected; in generative cultures, messengers are trained and information flows to where it is needed.

Bourdieu deepens the diagnosis. Fear is not merely an emotional state that can be addressed through reassurance. It is inscribed in the habitus. Professionals who have spent years in organisations where visibility meant vulnerability have developed embodied dispositions that generate concealment automatically, below the threshold of conscious choice. Telling people it is safe to speak up does not change the dispositions formed by years of evidence that it is not. Deming understood this intuitively: he loathed annual performance reviews and management by objectives precisely because they institutionalise fear, creating structural incentives for the very concealment they claim to prevent. The review system rewards the appearance of performance. The habitus learns to produce appearances.

4. Theory of Knowledge: Why Experience Alone Teaches Nothing

Deming’s most epistemologically demanding claim is that experience by itself teaches nothing. You cannot just “do stuff” and hope to learn. Learning requires theory: a hypothesis about what will happen and why, tested against what actually happens, revised in light of the discrepancy. Without theory, experience is just accumulated anecdote.

Weick’s sensemaking framework illuminates why this matters. Sensemaking is retrospective: people understand what they have done after they have done it. But retrospective sensemaking without a prior hypothesis is vulnerable to narrative fallacy: the mind constructs a coherent story to explain what happened, and the story feels like understanding even when it is post-hoc rationalisation. The PDCA cycle (Plan-Do-Check-Act, which Deming credited to Shewhart and later preferred to call Plan-Do-Study-Act) is a discipline against this. The “Plan” phase forces a prediction. The “Check” phase compares the prediction against reality. The discrepancy between the two is where learning occurs. Without the prediction, there is no discrepancy, and without the discrepancy, there is no learning, only the comforting illusion that experience has made you wiser.
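The logic of the Plan and Check phases can be sketched as a record that will not let a cycle begin without a prediction. This is a hypothetical Python structure, not anything Deming specified: the class name, the single numeric prediction and the tolerance-based Act step are all simplifying assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PDCACycle:
    """One turn of Plan-Do-Check-Act, with the prediction recorded up front."""
    hypothesis: str                   # Plan: what we believe, and why
    prediction: float                 # Plan: what we expect to observe
    observed: Optional[float] = None  # Do: filled in only after acting

    def do(self, observed: float) -> None:
        self.observed = observed

    def check(self) -> float:
        """Return the discrepancy between prediction and reality."""
        if self.observed is None:
            raise ValueError("nothing to check: the Do step has not happened")
        return self.observed - self.prediction

    def act(self, tolerance: float) -> str:
        """Keep the theory if the gap is small; otherwise revise it."""
        return "adopt" if abs(self.check()) <= tolerance else "revise the theory"


cycle = PDCACycle("Smaller batches will cut lead time to about 8 days", prediction=8.0)
cycle.do(observed=11.5)
print(cycle.check())             # 3.5
print(cycle.act(tolerance=1.0))  # revise the theory
```

Because `prediction` is a required field, the structure cannot represent a cycle that skipped the Plan phase, and the 3.5-day gap is the raw material for learning. Whether the Act step then questions the theory or merely its execution is a human judgment that no data structure can enforce.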

Bateson’s levels framework connects this to the broader series architecture. PDCA, properly applied, is a Learning II practice: it questions the theory, not just the execution. But most organisations practise PDCA as Learning I: they check whether the plan was followed, not whether the plan was right. The retrospective that asks “did we do what we said we would do?” is Learning I. The retrospective that asks “was what we said we would do the right thing to have done?” is Learning II. The difference between the two determines whether the organisation is improving its execution or improving its understanding.

5. Build Quality In: Practice Over Inspection

Deming argued that quality cannot be inspected into a product at the end; it must be built into the process from the beginning. Relying on downstream inspection, whether it takes the form of change approval boards, sign-off committees, or end-of-phase quality gates, is an admission that the process is incapable of producing quality by design. Inspection catches defects. It does not prevent them. And the organisational energy consumed by inspection is energy unavailable for the work that inspection was supposed to protect.

This is an argument about where learning occurs. Inspection locates learning at the end of the process, in the hands of people who did not do the work. Building quality in locates learning within the process, in the hands of the people doing the work. Bourdieu would frame this as the difference between codified knowledge (the inspector’s checklist) and practical sense (the practitioner’s embodied understanding of what good looks like). The checklist can catch deviations from a standard. It cannot produce the craft that makes the standard unnecessary. The organisation that builds quality in is developing the habitus of its practitioners. The organisation that inspects quality at the end is developing the habitus of its bureaucracy.

6. The Leader as System Designer

If your transformation is stalling, Deming would not ask whether your people are resisting. He would ask whether you have designed a system in which they can succeed. The 94/6 split is not a metaphor. It is an estimate Deming drew from decades of statistical work on real processes, and its point is that the overwhelming majority of organisational performance is determined by the system, and the system is management’s responsibility.

This is the hardest lesson in the series, because it requires the leader to examine their own contribution to the problem. Heifetz calls this the adaptive challenge: the situation in which the people with the problem are the problem, and the leader is among those people. The instinct to blame the workforce, to reach for compliance tools, to tighten governance, to reorganise, to inspect more thoroughly: these are all System 1 responses that protect the leader’s identity while leaving the system unchanged. Deming’s demand is that the leader move to System 2, in Kahneman’s terms, and ask the uncomfortable question: what about the system I have designed is producing the results I am seeing? The answer is almost always more revealing, and more uncomfortable, than any amount of performance data about the people operating within it.

(An Organisational Prompt is something you can do now.)

Organisational Prompt

Choose a process in your transformation that relies on downstream inspection: a change approval board, a quality gate, a review committee, a sign-off stage. Ask the people who operate it and the people who experience it the same question: “What does this process actually produce?” Do not ask whether it is useful; ask what it produces. Then compare the two answers.

If the operators describe it as a safeguard and the people who experience it describe it as an obstacle, you have found a point where the system is producing compliance at the expense of capability. The gap between the two descriptions is a precise measure of how far the process has drifted from its original purpose. Deming would tell you that the process is not broken. It is doing exactly what it was designed to do. The design is the problem.

Further Reading

W. Edwards Deming, Out of the Crisis (1986). The complete statement of the 14 Points. The chapters on variation and the Red Bead Experiment alone are worth the price.

W. Edwards Deming, The New Economics for Industry, Government, Education (3rd edition, 2018). The more mature work, where Deming sets out the System of Profound Knowledge, with deeper treatment of the theory of knowledge and the psychology of transformation. Less well known than Out of the Crisis but arguably more relevant to leaders.

The W. Edwards Deming Institute. The primary source for Deming’s work, including his 14 Points, the PDCA cycle, and the System of Profound Knowledge. Freely accessible.

John Willis, Deming’s Journey to Profound Knowledge (2023). The best modern account of Deming’s influence on software engineering and DevOps. Willis traces the direct line from Deming through Lean to the practices that now define high-performing technology organisations.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.