Dave Snowden, Cynefin, and the Table Napkin Test

Dave Snowden’s Cynefin framework reveals why the most consequential mistake in transformation is misclassifying the problem.

You cannot manage a complex system as if it were a complicated machine. That sentence sounds like a truism until you watch an enterprise spend eighteen months and several million pounds building a three-year AI transformation roadmap, complete with milestones, tollgates, and a governance framework so heavy it could anchor a ship. Six months in, the roadmap is fiction. The tollgates are rituals. And the people doing the work have quietly figured out their own way of using AI, none of which appears in the plan.

Dave Snowden, the creator of the Cynefin (kun-ev-in) framework, has spent decades explaining why this keeps happening. His central argument is not that planning is bad. It is that leaders consistently misclassify the nature of the challenge they face, and then apply the wrong response logic. They treat adaptive, emergent, deeply human problems as if they were engineering problems with discoverable solutions. And the confidence with which they do this is, itself, part of the problem.

What follows is a brief overview of the ideas most directly applicable to transformation leadership. Snowden’s body of work extends well beyond what one article can cover; readers wanting the full picture should start with the Cynefin Company’s resources.

1. The Domains: Why the Wrong Response Logic Guarantees Failure

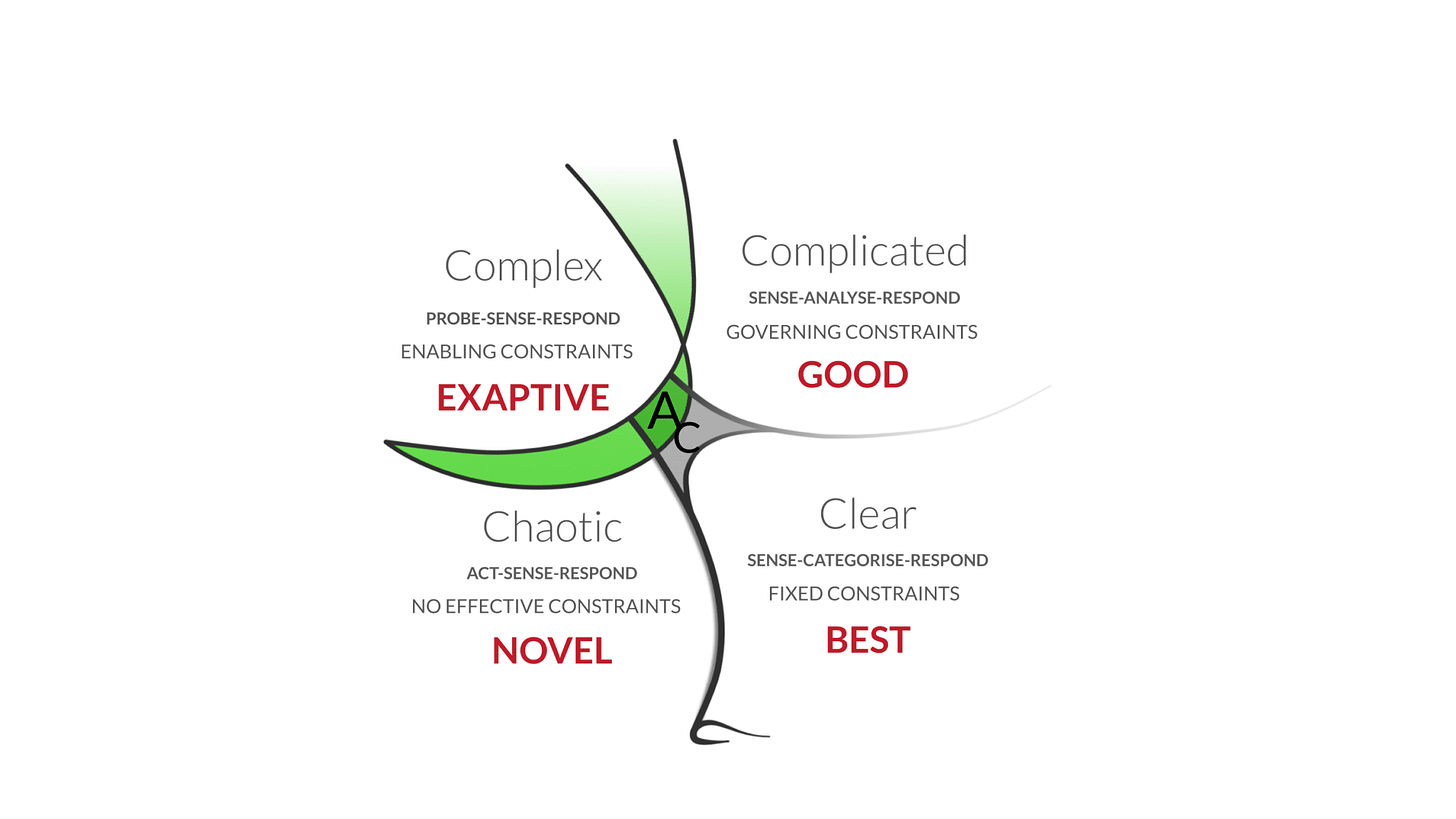

The most consequential mistake in transformation leadership is not choosing the wrong strategy. It is misclassifying the kind of problem you are dealing with, and then applying a response pattern that cannot work. Cynefin distinguishes five domains (Clear, Complicated, Complex, Chaotic, and Confusion) according to the relationship between cause and effect. The boundary that matters most for transformation is the one between Complicated and Complex.

In the Complicated domain, cause and effect are discoverable through analysis. A jet engine fails; you call an expert, diagnose the fault, apply good practice. The knowledge exists; you need someone qualified to find it. The response logic is Sense-Analyse-Respond.

In the Complex domain, cause and effect are only coherent in hindsight. No amount of analysis can predict the outcome of an intervention, because the system changes as you interact with it. Cultural transformation, organisational learning, and innovation all live here. The response logic is fundamentally different: Probe-Sense-Respond.

Bateson’s framework illuminates why misclassification is so persistent and so damaging. Treating a complex challenge as a complicated one is a category error at the level of logical types: the leader is applying the wrong level of analysis to the situation, and the error produces not just the wrong answer but the wrong kind of answer. In Bateson’s terms, complicated problems require Learning I: correcting errors within an established frame. Complex problems require Learning II: questioning the frame itself. The leader who applies a complicated-domain response (analyse, plan, execute) to a complex-domain challenge is performing Learning I in a situation that demands Learning II, and the confidence they feel is the coherence illusion that Kahneman describes: System 1 has categorised the situation as familiar, because familiar is what System 1 does.

Heifetz names the same distinction differently: technical problems are amenable to expert solutions; adaptive challenges require changes in values, beliefs, and ways of working. Snowden provides the navigational instrument to identify which is which before the wrong response logic has been committed to.

2. Probe-Sense-Respond: Information Before Outcomes

In a complex domain, the Analyse-Plan-Execute cycle that most transformations rely on is structurally incapable of succeeding. Not because analysis is bad, but because the system cannot be understood in advance of interacting with it.

Snowden advocates for Probe-Sense-Respond: launch multiple, small, safe-to-fail experiments designed to generate information, not achieve predetermined outcomes. Observe how the system responds. Then amplify what works and dampen what does not.

The critical distinction is between probing and piloting. A pilot tests a predetermined solution in a controlled environment. A probe explores an uncertain space to discover what might work. Pilots ask “does this solution scale?” Probes ask “what happens when we try this?” If you are running pilots, you have already decided what the answer is. You are just checking whether the organisation can absorb it. That is a complicated-domain response to what may be a complex-domain problem.

This reframes what a transformation strategy should be: not a linear document with milestones and delivery dates, but a portfolio of coherent experiments, each designed to be survivable if it fails and informative whether it succeeds or not. The strategy emerges from the pattern of what works, which is precisely what Mintzberg means by emergent strategy and what Weick means by retrospective sensemaking. You cannot know what you think about AI adoption until you see what happens when people actually try it. Snowden provides the operational mechanism for how that emergence happens in practice. Stacey would add that the probes themselves are gestures: they call forth responses from the system, and those responses are the material from which the real strategy is constructed.

3. Constraints, Not Goals

In traditional change management, you set a specific goal (“Increase AI adoption by 40%”) and drive people toward it. Snowden argues that in complex systems, goal-setting produces perverse incentives, gaming, and Goodhart’s Law in full bloom: when a measure becomes a target, it ceases to be a good measure. Beer would recognise the pattern instantly: the purpose of the system is what it does, and what an adoption-target system does is produce adoption numbers, not changed practice.

Instead of managing outputs, you manage constraints within which behaviour emerges. Governing constraints are rigid rules that limit possibilities: “All AI-generated code must pass automated validation before deployment.” These reduce novelty but ensure safety. Enabling constraints create boundaries within which creative behaviour can emerge: “Teams must include a domain expert in every specification review, and they jointly determine the success criteria.” These encourage interaction and adaptation while maintaining coherence.

The design question shifts from “What should people do?” to “What constraints would make the desired behaviour more likely than the old behaviour?” You are not engineering an outcome. You are shaping a possibility space. This connects to Hollnagel’s Safety-II: studying what goes right, not just what goes wrong, because the same enabling constraints that guide successful outcomes also create the conditions for adaptation when things do not go as expected.

But Giddens would note that the constraints Snowden recommends are themselves structures that will be reproduced, reinterpreted, and potentially subverted by the agents who operate within them. An enabling constraint designed by leadership may be experienced as a governing constraint by the team, depending on how it is enacted in daily practice. The design of constraints is not the end of the work. It is the beginning of a structuration process that the designer does not fully control. Bourdieu adds the embodied dimension: the constraint interacts with the habitus of the people it constrains, and the habitus will generate responses that the constraint designer did not anticipate, because the designer has a different habitus from the people doing the work.

4. Retrospective Coherence: Why Best Practice Is Dangerous

The consultants arrive. They bring case studies and a great deal of PowerPoint. They tell you about the Spotify Model, how Google innovates, how Company X transformed. They present these as clear success stories with bold vision, disciplined execution, and predictable results.

Snowden calls this retrospective coherence: the human compulsion to look back at a successful outcome and connect the dots into a causal narrative, underplaying the hundreds of failed experiments, lucky accidents, and abandoned strategies that actually produced the result. This is the narrative fallacy (Taleb’s term, which Kahneman takes up) and Kahneman’s WYSIATI, “what you see is all there is,” in operation. Our brains crave coherent stories, so we invent linear causality where none exists, package it as “best practice,” and sell it to organisations whose context is entirely different.

Best practice is legitimate in the Clear domain, where tasks are simple and repeatable. For anything in the Complicated or Complex domains, best practice is at best misleading and at worst dangerous. Copying another company’s AI adoption model, their governance framework, their team structure, their toolchain, is dangerous precisely because the conditions that made those things work are invisible in the success narrative. The rituals can be reproduced. The context cannot. Bourdieu would say: what gets reproduced is the visible structure, not the habitus that made it meaningful. You import the form without the practical knowledge that gave it life. The result is cargo-cult transformation: all the ceremonies, none of the understanding.

5. Distributed Sensing: Where the Weak Signals Live

Executives are often the last to know the truth. Information is filtered and sanitised as it moves up the hierarchy. By the time a signal reaches a quarterly review, it has been translated through three layers of management, stripped of context, and fitted into whatever narrative the current strategy requires.

This is the information pathology that runs through the entire series. Argyris describes how defensive routines filter information before it reaches leadership. Westrum classifies the cultures that determine whether messengers are shot, neglected, or trained. Bateson explains why the filtering produces not just incomplete information but the wrong kind of information: the hierarchical translation changes the logical type of the signal, turning a complex, contextual observation into a simple, acontextual metric. The metric may be accurate. The meaning has been destroyed.

Snowden advocates for distributed sensing: using the entire workforce to provide real-time narrative data on what is actually happening. The people who know whether AI is actually changing how work gets done are the people doing the work. The developers using or not using AI coding assistants. The domain experts writing or struggling with specifications. The team leads watching the dynamics shift or not. These stories contain the weak signals of success and failure that no adoption metric can capture.

You still need metrics; you have a corporate communication infrastructure to feed. But do not confuse output metrics with the means by which you actually guide your initiative. Dekker’s concept of drift into failure is the warning: systems drift through small, incremental, locally rational steps. By the time a dashboard metric turns red, the drift is often irreversible. Narrative sensing catches the drift early, when it can still be addressed.

6. The Framework and Its Limits

Snowden provides something rare: a framework that is both intellectually rigorous and immediately diagnostic. But its tensions should be visible.

Stacey would push back on the framework itself: the act of classifying a situation into a domain risks creating the very false certainty that Snowden warns against. If complexity means that cause and effect are only visible in retrospect, who decides, in real time, that a situation is complex rather than complicated? The classifier is inside the system they are classifying. Snowden acknowledges this; the framework has evolved to include dynamics of movement between domains. But the tension remains, and it is the same tension that runs through Senge: the diagnostic tool is powerful, but the diagnostician is not outside the system being diagnosed.

Peters would add that frameworks, however elegant, do not move people. Energy moves people. Snowden’s work gives you the right categories, the right response logics, the right diagnostic questions. What it does not always provide is the emotional charge that makes people care enough to act on the diagnosis. The analytical clarity of Cynefin is necessary. It is not sufficient. The leader who uses it must bring their own conviction, their own willingness to tolerate not knowing, and their own capacity to act within uncertainty without pretending it is certainty.

(An Organisational Prompt is something you can do now...)

Organisational Prompt

I borrow this one from Dave himself.

Take a napkin, or the back of an envelope, and draw how feedback from the people doing the work actually reaches the people making decisions in your organisation. Not the org chart. Not the governance framework. The actual path: who talks to whom, what gets filtered, what gets lost, what arrives too late.

Then ask: what could you do, this week, to shorten one of those paths by one step? The napkin will tell you more about your organisation’s capacity to learn than any strategy document. If the path is long, information is old by the time it arrives. If it passes through layers that translate and sanitise, meaning is destroyed in transit. If it stops at a gatekeeper who decides what is “relevant” to leadership, the gatekeeper’s mental model is governing the organisation’s information flow, and that mental model has never been examined.

Further Reading

Dave Snowden and the Cynefin Company, thecynefin.co. The official home of Snowden’s work, including the evolving Cynefin framework, resources on anthro-complexity, and SenseMaker.

Cynthia Kurtz and Dave Snowden, The New Dynamics of Strategy: Sense-making in a Complex and Complicated World (IBM Systems Journal, Vol. 42, No. 3, 2003). The foundational paper on Cynefin and its application to strategic decision-making. Freely available.

Dave Snowden and Mary Boone, A Leader’s Framework for Decision Making (Harvard Business Review, 2007). The Cynefin framework applied to leadership. The clearest short introduction.

Alicia Juarrero, Context Changes Everything: How Constraints Create Coherence (2023). The philosophical foundation for constraint-based management. Juarrero’s work on enabling constraints underpins much of Snowden’s thinking on how to design possibility spaces rather than prescribe outcomes.

I write about the industry and its approach in general. None of the opinions or examples in my articles necessarily relate to present or past employers. I draw on conversations with many practitioners and all views are my own.